Math Is Fun Forum


#401 Dark Discussions at Cafe Infinity » Come Quotes - IV » 2026-02-14 16:38:49

- Jai Ganesh

- Replies: 0

Come Quotes - IV

1. If you have an important point to make, don't try to be subtle or clever. Use a pile driver. Hit the point once. Then come back and hit it again. Then hit it a third time - a tremendous whack. - Winston Churchill

2. There will be no end to the troubles of states, or of humanity itself, till philosophers become kings in this world, or till those we now call kings and rulers really and truly become philosophers, and political power and philosophy thus come into the same hands. - Plato

3. With mirth and laughter let old wrinkles come. - William Shakespeare

4. Come Fairies, take me out of this dull world, for I would ride with you upon the wind and dance upon the mountains like a flame! - William Butler Yeats

5. The automobile engine will come, and then I will consider my life's work complete. - Rudolf Diesel

6. It is very important to generate a good attitude, a good heart, as much as possible. From this, happiness in both the short term and the long term for both yourself and others will come. - Dalai Lama

7. Let us endeavor so to live that when we come to die even the undertaker will be sorry. - Mark Twain

8. Nothing brings me more happiness than trying to help the most vulnerable people in society. It is a goal and an essential part of my life - a kind of destiny. Whoever is in distress can call on me. I will come running wherever they are. - Princess Diana

#402 Jokes » Grape Jokes - I » 2026-02-14 16:21:41

- Jai Ganesh

- Replies: 0

Q: What did the green grape say to the purple grape?

A: Breathe! Breathe!

* * *

Q: Why aren't grapes ever lonely?

A: Because they come in bunches!

* * *

Q: What is purple and long?

A: The grape wall of China.

* * *

Q: What did the grape say when he got stepped on?

A: He let out a little wine.

* * *

Q: "What's purple and huge and swims in the ocean?"

A: "Moby Grape."

* * *

#403 Science HQ » Oscillator » 2026-02-14 16:13:20

- Jai Ganesh

- Replies: 0

Oscillator

Gist

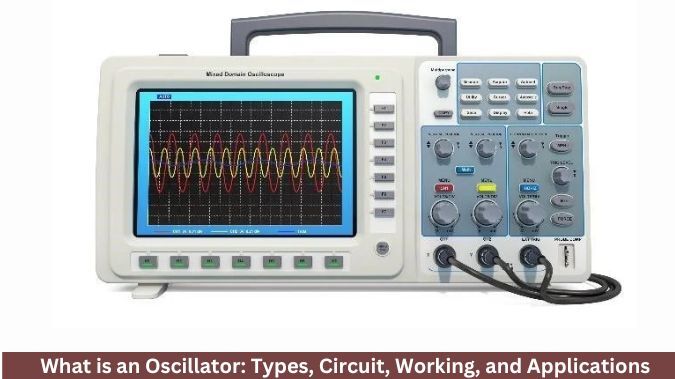

An oscillator is an electronic circuit that converts DC (Direct Current) into a periodic, repeating AC (Alternating Current) signal—such as a sine, square, or triangle wave—without needing an external input signal. These devices are essential for generating, timing, and controlling frequencies in systems like radio, clocks, computers, and sensors.

Oscillators are fundamental in electronics. They generate precise frequencies for clocks in computers, carrier waves in radios and Wi-Fi, and timing signals in microcontrollers. As versatile signal generators, they enable everything from timekeeping (watches) to data synchronization (Bluetooth) and medical devices (ultrasound).

Summary

An electronic oscillator is an electronic circuit that produces a periodic, oscillating or alternating current (AC) signal, usually a sine wave, square wave or a triangle wave, powered by a direct current (DC) source. Oscillators are found in many electronic devices, such as radio receivers, television sets, radio and television broadcast transmitters, computers, computer peripherals, cellphones, radar, and many other devices.

Oscillators are often characterized by the frequency of their output signal:

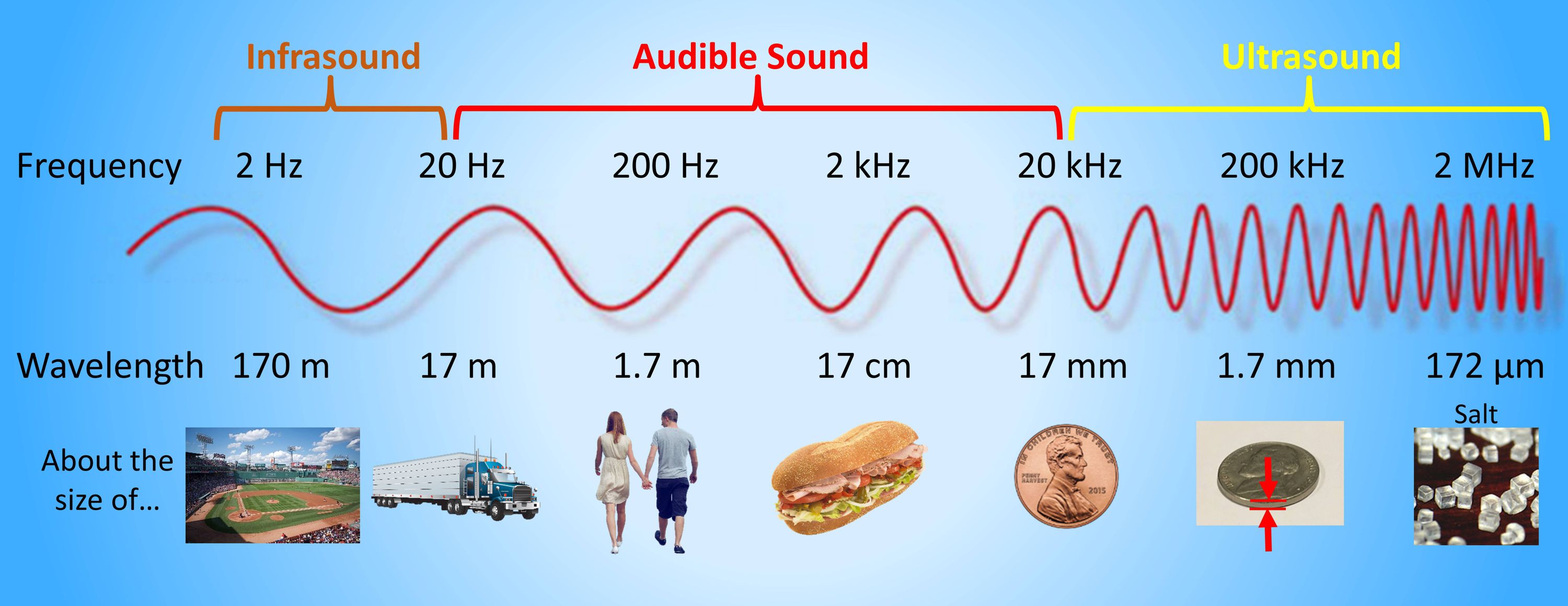

* A low-frequency oscillator (LFO) is an oscillator that generates a frequency below approximately 20 Hz. This term is typically used in the field of audio synthesizers, to distinguish it from an audio frequency oscillator.

* An audio oscillator produces frequencies in the audio range, 20 Hz to 20 kHz.

* A radio frequency (RF) oscillator produces signals above the audio range, more generally in the range of 100 kHz to 100 GHz.
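The three bands listed above can be expressed as a small lookup. This is only a sketch: the function name `classify_oscillator` is made up for illustration, and the thresholds are taken directly from the list, which leaves frequencies between 20 kHz and 100 kHz unclassified.

```python
def classify_oscillator(frequency_hz: float) -> str:
    """Return the band name for an output frequency, using the ranges quoted in the post."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    if frequency_hz < 20:
        return "low-frequency oscillator (LFO)"
    if frequency_hz <= 20_000:
        return "audio oscillator"
    if 100_000 <= frequency_hz <= 100e9:
        return "radio frequency (RF) oscillator"
    # The list above does not name the 20 kHz to 100 kHz gap or anything beyond 100 GHz.
    return "outside the ranges listed"


if __name__ == "__main__":
    for f in (5, 440, 50_000, 2.4e9):
        print(f, "Hz ->", classify_oscillator(f))
```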

There are two general types of electronic oscillators: the linear or harmonic oscillator, and the nonlinear or relaxation oscillator. The two types are fundamentally different in how oscillation is produced, as well as in the characteristic type of output signal that is generated.

The most-common linear oscillator in use is the crystal oscillator, in which the output frequency is controlled by a piezo-electric resonator consisting of a vibrating quartz crystal. Crystal oscillators are ubiquitous in modern electronics, being the source for the clock signal in computers and digital watches, as well as a source for the signals generated in radio transmitters and receivers. As a crystal oscillator's “native” output waveform is sinusoidal, a signal-conditioning circuit may be used to convert the output to other waveform types, such as the square wave typically utilized in computer clock circuits.

Details

Oscillators are essential components in the world of electronics, playing a crucial role in generating periodic signals. From the simplest applications to complex systems, oscillators provide the timing signals needed for synchronization and control. This article explains what oscillators are and how they work, explores the various types and their performance characteristics, highlights their applications across industries, and reviews recent advancements in this essential technology.

What is an Oscillator?

An oscillator is an electronic circuit that produces a continuous, periodic signal - typically in the form of a sine wave, square wave, or triangle wave - without requiring an input signal. These signals are defined by their frequency and amplitude, which can be precisely controlled to suit specific applications. In essence, an oscillator converts energy from a DC power supply into an AC signal.

Oscillators are found in a wide array of devices, including clocks, radios, and computers. They are considered the heartbeat of electronic systems, serving as timing references that enable circuits to synchronize and function properly.

What is an Oscillator in a CPU?

A CPU oscillator is responsible for generating clock signals that regulate the timing and speed of the processor. These clock signals synchronize various CPU components, allowing for the coordinated execution of instructions.

Typically, a crystal oscillator is used, which relies on the mechanical resonance of a vibrating quartz crystal to produce a stable frequency. This precise timing is critical to a CPU’s performance and efficiency, as it directly affects the instruction execution rate.

How Do Oscillators Work?

Oscillators generate a continuous, periodic signal - such as a sine wave or square wave - without requiring an input signal of the same frequency. They achieve this through the combined principles of feedback and resonance.

Basic Components

• Amplifier: Boosts the signal.

• Feedback Network: Determines the frequency of oscillation.

• Energy Source: Supplies power to sustain the oscillation.

The system continuously feeds part of its output back to the input, allowing the signal to regenerate itself. The frequency of oscillation depends on the configuration of components such as resistors, capacitors, and inductors within the feedback loop.

Purpose of an Oscillator

The primary purpose of an oscillator is to generate consistent clock signals that control the timing and synchronization of electronic systems, especially CPUs. These signals are essential for ensuring the coordinated execution of instructions, which in turn impacts overall system performance.

Types of Oscillators:

What is an oscillator? And what are the types of oscillators?

Oscillators, essential components in electronic circuits, can be categorized based on the type of waveform they produce and their method of operation. These components are generally divided into two main categories.

Relaxation vs Linear Oscillators

Relaxation Oscillators: Produce non-sinusoidal waveforms such as sawtooth or square waves.

Linear Oscillators: Generate sinusoidal waveforms.

Specific Types

Crystal Oscillators: Crystal oscillators are linear oscillators, and use quartz crystals to generate precise frequencies. Known for their stability and accuracy, they are ideal for communication devices and clocks.

RC Oscillators: RC oscillators can be both relaxation oscillators and linear oscillators. These oscillators utilize resistors and capacitors to generate sine or square waves. Often used in audio applications due to their simplicity and cost-effectiveness.

LC Oscillators: LC oscillators are considered linear oscillators and use inductors (L) and capacitors (C) to produce oscillations. Typically employed in radio frequency (RF) applications due to their high-frequency capability.

Phase-Locked Loop (PLL) Oscillators: PLL oscillators are primarily considered linear oscillators and are used for frequency synthesis and modulation. Essential in telecommunications for signal processing and frequency control.

Emerging Oscillator Technologies

Recent advancements in oscillator technology focus on performance improvement, miniaturization, and integration with other electronic components.

MEMS Oscillators: Microelectromechanical systems offer smaller form factors, highly stable reference frequencies, and low power consumption - ideal for portable devices.

Programmable Oscillators: Allow for customized frequency outputs, reducing component count and streamlining the design process.

Devices That Use Oscillators

Many electronic devices rely on oscillators for essential functions like timing, signal generation, and frequency control. Their ability to produce consistent waveforms makes them indispensable in both consumer electronics and industrial systems.

Examples:

Quartz Watches: Use crystal oscillators to generate highly accurate timekeeping signals, ensuring the watch maintains precise seconds, minutes, and hours.

Radios: Rely on oscillators to generate carrier frequencies and to tune into specific broadcast channels for both AM and FM signals.

Computers: Employ oscillators in their system clocks to synchronize processor operations, manage data transfer, and maintain stable performance.

Cellphones: Utilize oscillators for network synchronization, frequency hopping in wireless communication, and internal clocking for processors and sensors.

Radar Systems: Depend on high-frequency oscillators to generate the radio waves that detect and measure the speed, range, and position of objects.

Metal Detectors: Use oscillators to produce electromagnetic fields that interact with metallic objects, enabling detection through changes in oscillation frequency or amplitude.

Performance Characteristics of Oscillators

Oscillators are evaluated based on several performance metrics that directly influence their suitability for specific applications. The three most critical are frequency stability, phase noise, and waveform shape.

Frequency Stability

Frequency stability describes an oscillator’s ability to maintain its output frequency under varying conditions over time.

• Short-Term Stability: Covers rapid variations over seconds or minutes, often caused by noise or small environmental changes.

• Long-Term Stability: Considers changes over hours, days, or years, typically influenced by component aging and gradual environmental shifts.

• Environmental Factors: Temperature fluctuations, supply voltage changes, and mechanical vibrations can affect stability.

• Crystal Oscillators: These oscillators excel in this area because the resonant frequency of a quartz crystal is highly resistant to such disturbances, making them ideal for precision timing applications like GPS, telecommunications, and laboratory measurement systems.
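To get a feel for what frequency stability means in practice, here is a rough sketch. It assumes stability is quoted as a tolerance in parts per million (ppm), which is a common convention but is not stated in the post. The 20 ppm figure and the function names are illustrative assumptions, not values from any datasheet.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def frequency_error_hz(nominal_hz: float, tolerance_ppm: float) -> float:
    """Worst-case frequency offset for a given ppm tolerance."""
    return nominal_hz * tolerance_ppm * 1e-6


def drift_seconds_per_day(tolerance_ppm: float) -> float:
    """Worst-case time gained or lost per day by a clock running off this oscillator."""
    return tolerance_ppm * 1e-6 * SECONDS_PER_DAY


if __name__ == "__main__":
    # 32,768 Hz is the usual watch-crystal frequency; 20 ppm is an assumed tolerance.
    print(frequency_error_hz(32_768, 20))  # offset in Hz
    print(drift_seconds_per_day(20))       # seconds per day
```

Under these assumptions a 20 ppm clock could drift by a little under two seconds per day, which is why tighter tolerances matter for GPS and telecommunications.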

Phase Noise

Phase noise measures short-term, rapid fluctuations in the oscillator's phase, which manifest as small, random deviations from the ideal frequency.

• It is usually represented as a power density (dBc/Hz) at a given frequency offset from the carrier signal.

• Low Phase Noise: Essential in high-performance systems, such as satellite communications, radar, and high-speed data links, where timing jitter can degrade system performance or cause data errors.

• High Phase Noise: Can lead to signal distortion, reduced sensitivity in receivers, and degraded performance in frequency synthesizers.
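The dBc/Hz unit mentioned above is a ratio in decibels: the noise power in a 1 Hz bandwidth at some offset, relative to the carrier power. A minimal sketch of that conversion follows. The function name and the example power levels are assumptions chosen for illustration.

```python
import math


def phase_noise_dbc_per_hz(noise_power_w_per_hz: float, carrier_power_w: float) -> float:
    """Noise power in a 1 Hz bandwidth relative to the carrier, expressed in dBc/Hz."""
    if noise_power_w_per_hz <= 0 or carrier_power_w <= 0:
        raise ValueError("powers must be positive")
    return 10.0 * math.log10(noise_power_w_per_hz / carrier_power_w)


if __name__ == "__main__":
    # Assumed example: 1 mW carrier, 1e-13 W of noise per Hz at the chosen offset.
    print(phase_noise_dbc_per_hz(1e-13, 1e-3))  # about -100 dBc/Hz
```

More negative values mean lower phase noise, so a figure near -100 dBc/Hz is better than one near -80 dBc/Hz at the same offset.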

Waveform Shape

The oscillator’s output waveform determines how well it interfaces with downstream circuitry.

• Sine Waves: Preferred in RF applications because they have minimal harmonic content, reducing the need for filtering.

• Square Waves: Common in digital clocking applications, as their fast transitions make it easy for digital circuits to detect logic states.

• Sawtooth or Triangular Waveforms: May be required in specialized systems, such as sweep generators in analog oscilloscopes.

• Poor Waveform Shape: Can cause signal integrity issues, increased electromagnetic interference (EMI), or inaccurate timing in digital circuits.
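The point about harmonic content can be made concrete with the Fourier series of an ideal square wave, which contains only odd harmonics with amplitude 4/(πn) for a unit-amplitude wave. The sketch below assumes an ideal, 50% duty cycle square wave; the function names are invented for this example.

```python
import math


def square_wave_harmonic_amplitude(n: int) -> float:
    """Amplitude of the n-th harmonic of an ideal unit-amplitude square wave."""
    if n < 1:
        raise ValueError("harmonic number must be at least 1")
    return 4.0 / (math.pi * n) if n % 2 == 1 else 0.0


def square_wave_partial_sum(t: float, highest_harmonic: int, frequency_hz: float = 1.0) -> float:
    """Approximate the square wave at time t by summing sine harmonics up to highest_harmonic."""
    return sum(
        square_wave_harmonic_amplitude(n) * math.sin(2.0 * math.pi * n * frequency_hz * t)
        for n in range(1, highest_harmonic + 1)
    )


if __name__ == "__main__":
    for n in range(1, 8):
        print(n, round(square_wave_harmonic_amplitude(n), 4))
    # A quarter of the way through the cycle, the sum approaches the flat top (value 1).
    print(square_wave_partial_sum(0.25, 199))
```

A pure sine wave would have only the n = 1 term, which is why it needs less filtering in RF work, while the strong odd harmonics of a square wave are one source of EMI.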

Are Oscillators Active Components?

Oscillators are classified as active components. They amplify electrical signals and generate power, distinguishing them from passive components like resistors and capacitors. While oscillators incorporate passive elements in their circuits, their role in signal generation qualifies them as active devices.

Industries That Use Oscillators

Oscillators' ability to generate stable, precise signals makes them indispensable for timing, synchronization, and frequency control across a wide range of sectors. The specific oscillator type used often depends on the application's demands - whether it’s ultra-high precision, rugged durability, or low power consumption.

Telecommunications: Oscillators generate carrier signals for data transmission. Their stability and accuracy ensure signal integrity over long distances. Crystal and PLL oscillators are widely used here.

Consumer Electronics: Devices like smartphones and TVs rely on oscillators to generate clock signals for microcontrollers. Their precision directly impacts device performance.

Automotive: Used in engine control units, infotainment systems, and sensor applications (e.g., ABS), oscillators regulate timing for ignition and fuel injection.

Medical Devices: Essential in pacemakers and diagnostic tools, where reliability and precision are critical. Crystal oscillators are often chosen for their long-term stability.

Additional Information

An oscillator is an electronic device that produces repetitive oscillating signals in the form of a sine wave, a square wave, or a triangle wave. Basically, this circuit converts DC (Direct Current) into an AC (Alternating Current) signal at a specific frequency.

An oscillator is essential in various electronic devices. It is used in Bluetooth modules for frequency generation and maintaining a stable connection. In relays, oscillators help with debouncing and pulse generation.

In sensors, they are used for generating carrier signals and stabilizing readings. Integrated circuits (ICs) use oscillators for clock generation and data synchronization. In connectors, oscillators assist with signal integrity and timing matching.

Microcontrollers rely on oscillators for peripheral operation and system clock management. Additionally, oscillators are used in LCD and LED displays for backlight control and data driving.

A basic oscillator circuit typically includes components like an amplifier stage, a feedback network, frequency-determining components, and a power supply.

1. Amplifier

An amplifier in an oscillator can be a transistor, an operational amplifier, or any active device that boosts small signals to maintain continuous oscillations. To sustain oscillations, the amplifier must provide enough gain to keep the gain around the feedback loop greater than or equal to one.

2. Feedback Network

This network feeds a portion of the output back to the input with the correct phase. It is built from capacitive, inductive, or resistive elements, such as LC circuits or RC circuits.

3. Frequency Determining Components

These components set the frequency at which the oscillator operates. They include RC networks, LC networks, and crystal resonators.

4. Power Supply

It provides the necessary voltage and current for operation.

Types of Oscillators

Based on the design, frequency range, and application, oscillators are classified into various types. They are as follows:

1. LC Oscillator

An LC oscillator uses an inductor and a capacitor to determine the frequency of oscillation. It is a high-frequency operation oscillator that gives a smooth sine wave output, and its frequency depends on the values of L and C.

LC oscillators come in several types, such as the Hartley oscillator (uses a tapped inductor), the Colpitts oscillator (uses a capacitive voltage divider), and the Clapp oscillator (a variation of the Colpitts with an additional capacitor for better frequency stability).

It is mostly used in radio transmitters, RF communication circuits, and signal generators.
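The dependence on L and C can be written out using the standard resonance formula for an ideal LC tank, f = 1 / (2π√(LC)). The sketch below assumes lossless components. The component values and function names are illustrative only. For a Colpitts oscillator, the two capacitors of the voltage divider act in series across the inductor, so their series combination is used as C.

```python
import math


def lc_resonant_frequency(inductance_h: float, capacitance_f: float) -> float:
    """Resonant frequency in Hz of an ideal LC tank: f = 1 / (2*pi*sqrt(L*C))."""
    if inductance_h <= 0 or capacitance_f <= 0:
        raise ValueError("L and C must be positive")
    return 1.0 / (2.0 * math.pi * math.sqrt(inductance_h * capacitance_f))


def colpitts_effective_capacitance(c1_f: float, c2_f: float) -> float:
    """Series combination of the two divider capacitors in a Colpitts tank."""
    return (c1_f * c2_f) / (c1_f + c2_f)


if __name__ == "__main__":
    # Assumed example values: 10 uH inductor with a 100 pF capacitor.
    print(lc_resonant_frequency(10e-6, 100e-12))  # roughly 5 MHz

    # Colpitts with two 200 pF capacitors gives the same 100 pF effective value.
    c_eff = colpitts_effective_capacitance(200e-12, 200e-12)
    print(lc_resonant_frequency(10e-6, c_eff))
```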

2. RC Oscillator

An RC oscillator uses resistors and capacitors to produce oscillations. It produces stable low-frequency sine waves and is ideal for audio frequency generation, with a cost-effective design.

This includes the Wien bridge oscillator (for audio applications) and the Phase shift oscillator (produces sine waves using multiple RC stages). RC oscillators are used in audio signal generation, function generation, and low-frequency timing circuits.
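For the two RC types named above, the usual textbook formulas are f = 1 / (2πRC) for a Wien bridge with equal resistors and equal capacitors, and f = 1 / (2πRC√6) for a three-stage phase shift oscillator with identical RC sections and an ideal amplifier. The sketch below rests on those idealizations. Real circuits shift somewhat because of loading and component tolerances, and the example values are assumptions.

```python
import math


def wien_bridge_frequency(resistance_ohm: float, capacitance_f: float) -> float:
    """Wien bridge oscillation frequency, assuming equal R and equal C in both arms."""
    return 1.0 / (2.0 * math.pi * resistance_ohm * capacitance_f)


def phase_shift_frequency(resistance_ohm: float, capacitance_f: float) -> float:
    """Three-stage RC phase shift oscillator frequency, assuming identical unloaded stages."""
    return 1.0 / (2.0 * math.pi * resistance_ohm * capacitance_f * math.sqrt(6.0))


if __name__ == "__main__":
    # Assumed example values: 10 kOhm and 16 nF, which lands in the audio range.
    print(wien_bridge_frequency(10e3, 16e-9))   # close to 1 kHz
    print(phase_shift_frequency(10e3, 16e-9))   # a few hundred Hz
```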

3. Crystal Oscillator

To create a very stable frequency oscillation, a crystal oscillator uses the mechanical resonance of a quartz crystal. It generates a pure sine wave output with extremely high frequency stability. They have very low frequency drift due to temperature changes.

Common types include the Pierce oscillator and the AT-cut crystal oscillator (widely used in microcontrollers). Crystal oscillators are used in microcontrollers and microprocessors, Bluetooth and Wi-Fi modules, digital watches and clocks, and GPS systems.

Working Principle of Oscillator

The working principle of an oscillator is based on the concept of positive feedback and energy conversion from a direct current (DC) source into an alternating current (AC) signal at a specific, stable frequency.

The working of the oscillator is explained in the steps below:

1. Initial

Due to thermal activity, every electronic circuit has inherent noise, and this tiny noise signal acts as the seed for oscillation.

2. Amplification

At the amplification stage, the amplifier boosts this initial noise signal, and amplification must be sufficient to compensate for any losses in the feedback network.

3. Positive Feedback Loop

A portion of the output is fed back to the input in phase, which reinforces the input signal rather than cancelling it.

4. Frequency Selection

The frequency-determining network (RC, LC, or crystal) controls the frequency of oscillation.

5. Steady State Oscillation

As the feedback sustains the oscillations, the amplitude stabilizes. Non-linear effects or amplitude limiting mechanisms prevent the output from growing indefinitely, ensuring stable oscillations.
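The five steps above can be imitated with a toy discrete-time model. This is a sketch under simplifying assumptions, not a circuit simulation: the signal is a point rotating in a plane (standing in for the frequency-selective network), `loop_gain` is applied once per step (standing in for the amplifier and feedback path), and a hard amplitude limit stands in for the non-linear effects. All names and default values are hypothetical.

```python
import math


def simulate_startup(loop_gain: float = 1.05,
                     cycles_per_sample: float = 0.01,
                     limit: float = 1.0,
                     seed: float = 1e-6,
                     steps: int = 2000) -> list[float]:
    """Return the amplitude envelope of a toy oscillator started from a tiny noise seed."""
    theta = 2.0 * math.pi * cycles_per_sample  # phase advance per step sets the frequency
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    x, y = seed, 0.0                           # step 1: tiny initial noise
    envelope = []
    for _ in range(steps):
        # Steps 2 to 4: rotate (frequency selection) and scale by the loop gain (feedback).
        x, y = (loop_gain * (x * cos_t - y * sin_t),
                loop_gain * (x * sin_t + y * cos_t))
        amplitude = math.hypot(x, y)
        # Step 5: amplitude limiting keeps the output from growing indefinitely.
        if amplitude > limit:
            x, y = x * limit / amplitude, y * limit / amplitude
            amplitude = limit
        envelope.append(amplitude)
    return envelope


if __name__ == "__main__":
    growing = simulate_startup(loop_gain=1.05)
    dying = simulate_startup(loop_gain=0.95)
    print(growing[0], growing[-1])  # starts tiny, settles at the limit
    print(dying[-1])                # with loop gain below one the seed dies away
```

With a loop gain slightly above one the seed grows until the limiter takes over, and with a loop gain below one it decays, which matches the requirement that amplification must at least make up for the losses.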

Applications of Oscillators

1. Communication Systems

* Oscillators generate high-frequency carrier signals for AM, FM, and digital modulation.

* Used to produce a range of frequencies from a single oscillator source.

* LC and crystal oscillators are used for tuning and frequency control.

Example: Radio Transmitters, Mobile phones, Wi-Fi modules, Bluetooth devices

2. Microcontrollers and Microprocessors

* Oscillators provide the clock signals needed for the timing and operation of microcontrollers and microprocessors.

* Crystal oscillators generate precise timing signals that ensure all processes operate in harmony and within correct timing constraints.

Example: Arduino boards, PIC microcontrollers, Embedded systems.

3. Sensors

* Oscillators are used in sensor circuits for data acquisition and signal processing.

Example: Proximity sensors, Ultrasonic sensors, and Environmental monitoring systems.

4. Display Technologies

* Oscillators help maintain the refresh rate of digital displays.

* Used in PWM (Pulse Width Modulation) circuits for adjusting display brightness.

Example: LED displays, LCD displays, OLED panels, Digital signage.

Frequently Asked Questions:

1. Is an Oscillator AC or DC?

An oscillator converts DC power into an AC signal by generating a continuous, oscillating waveform without an external input.

2. Is the Oscillator Negative or Positive?

An oscillator uses positive feedback to sustain continuous oscillations.

3. Which Oscillator is Better?

The crystal oscillator is considered better for applications requiring high-frequency stability and accuracy.

4. How Does an Oscillator Differ from an Amplifier?

An oscillator generates its own periodic signal without an external input, while an amplifier boosts the strength of an existing input signal.

5. What is the Difference Between RC and LC Oscillators?

An RC oscillator uses resistors and capacitors for low-frequency generation, while an LC oscillator uses inductors and capacitors for high-frequency generation.

6. What Causes an Oscillator to Fail?

An oscillator can fail due to component aging, temperature variations, power supply issues, or physical damage to the resonator elements, like crystals or inductors.

7. Can an Oscillator be Used as a Signal Generator?

Yes, an oscillator can be used as a signal generator to produce continuous waveforms like sine, square, or triangular signals.

#404 Re: Jai Ganesh's Puzzles » General Quiz » 2026-02-14 15:36:53

Hi,

#10749. What does the term in Geography Cusp or Beach cusps mean?

#10750. What does the term in Geography Cut bank mean?

#405 Re: Jai Ganesh's Puzzles » English language puzzles » 2026-02-14 15:19:08

Hi,

#5945. What does the verb (used with object) mutate mean?

#5946. What does the verb (used without object) mutter mean?

#406 Re: Jai Ganesh's Puzzles » Doc, Doc! » 2026-02-14 15:00:08

Hi,

#2569. What does the medical term Dilated cardiomyopathy (DCM) mean?

#407 Re: Jai Ganesh's Puzzles » 10 second questions » 2026-02-14 14:18:36

Hi,

#9854.

#408 Re: Jai Ganesh's Puzzles » Oral puzzles » 2026-02-14 14:07:21

Hi,

#6348.

#409 Re: Exercises » Compute the solution: » 2026-02-14 13:59:22

Hi,

2709.

#410 Re: Dark Discussions at Cafe Infinity » crème de la crème » 2026-02-13 22:08:18

2433) Yang Chen-Ning

Gist:

Work

For a long time, physicists assumed that various symmetries characterized nature. In a kind of “mirror world” where right and left were reversed and matter was replaced by antimatter, the same physical laws would apply, they posited. The equality of these laws was questioned concerning the decay of certain elementary particles, however, and in 1956 Chen Ning Yang and Tsung Dao Lee formulated a theory that the left-right symmetry law is violated by the weak interaction. Measurements of electrons’ direction of motion during a cobalt isotope’s beta decay confirmed this.

Summary

Chen Ning Yang (born October 1, 1922, Hofei, Anhwei, China—died October 18, 2025, Beijing, China) was a Chinese-born American theoretical physicist whose research with Tsung-Dao Lee showed that parity—the symmetry between physical phenomena occurring in right-handed and left-handed coordinate systems—is violated when certain elementary particles decay. Until this discovery it had been assumed by physicists that parity symmetry was as universal a law as the conservation of energy or electric charge. This and other studies in particle physics earned Yang and Lee the Nobel Prize for Physics for 1957.

Life

Yang’s father, Yang Ko-chuen (also known as Yang Wu-chih), was a professor of mathematics at Tsinghua University, near Peking. While still young, Yang read the autobiography of Benjamin Franklin and adopted “Franklin” as his first name. After graduation from the Southwest Associated University, in K’unming, he took his B.Sc. in 1942 and his M.S. in 1944. On a fellowship, he studied in the United States, enrolling at the University of Chicago in 1946. He took his Ph.D. in nuclear physics with Edward Teller and then remained in Chicago for a year as an assistant to Enrico Fermi, the physicist who was probably the most influential in Yang’s scientific development. Lee had also come to Chicago on a fellowship, and the two men began the collaboration that led eventually to their Nobel Prize work on parity. In 1949 Yang went to the Institute for Advanced Study in Princeton, New Jersey, and became a professor there in 1955. He became a U.S. citizen in 1964.

Work

Almost from his earliest days as a physicist, Yang had made significant contributions to the theory of the weak interactions—the forces long thought to cause elementary particles to disintegrate. (The strong forces that hold nuclei together and the electromagnetic forces that are responsible for chemical reactions are parity-conserving. Since these are the dominant forces in most physical processes, parity conservation appeared to be a valid physical law, and few physicists before 1955 questioned it.) By 1953 it was recognized that there was a fundamental paradox in this field since one of the newly discovered mesons—the so-called K meson—seemed to exhibit decay modes into configurations of differing parity. Since it was believed that parity had to be conserved, this led to a severe paradox.

After exploring every conceivable alternative, Lee and Yang were forced to examine the experimental foundations of parity conservation itself. They discovered, in early 1956, that, contrary to what had been assumed, there was no experimental evidence against parity nonconservation in the weak interactions. The experiments that had been done, it turned out, simply had no bearing on the question. They suggested a set of experiments that would settle the matter, and, when these were carried out by several groups over the next year, large parity-violating effects were discovered. In addition, the experiments also showed that the symmetry between particle and antiparticle, known as charge conjugation symmetry, is also broken by the weak decays.

In addition to his work on weak interactions, Yang, in collaboration with Lee and others, carried out important work in statistical mechanics—the study of systems with large numbers of particles—and later investigated the nature of elementary particle reactions at extremely high energies. From 1965 Yang was Albert Einstein professor at the Institute of Science, State University of New York at Stony Brook, Long Island. During the 1970s he was a member of the board of Rockefeller University and the American Association for the Advancement of Science and, from 1978, of the Salk Institute for Biological Studies, San Diego. He was also on the board of Ben-Gurion University, Beersheba, Israel. He received the Einstein Award in 1957 and the Rumford Prize in 1980; in 1986 he received the Liberty Award and the National Medal of Science.

Details

Yang Chen-Ning (1 October 1922 – 18 October 2025) also known as C.N. Yang and Franklin Yang, was a Chinese-American theoretical physicist who made significant contributions to statistical mechanics, integrable systems, gauge theory, particle physics and condensed matter physics.

Yang is known for his collaboration with Robert Mills in 1954 in developing non-abelian gauge theory, widely known as the Yang–Mills theory, which describes the nuclear forces in the Standard Model of particle physics.

Yang and Tsung-Dao Lee received the 1957 Nobel Prize in Physics for their work on parity non-conservation of the weak interaction, which was confirmed by the Wu experiment in 1956. The two proposed that the conservation of parity, a physical law observed to hold in all other physical processes, is violated in weak nuclear reactions – those nuclear processes that result in the emission of beta or alpha particles.

Early life and education

Yang was born in Hefei, Anhui, China, on 1 October 1922. His mother was Luo Meng-hua and his father, Ko-Chuen Yang (1896–1973), was a mathematician.

Yang attended elementary school and high school in Beijing, and in the autumn of 1937 his family moved to Hefei after the Japanese invaded China. In 1938 they moved to Kunming, Yunnan, where National Southwestern Associated University was located. In the same year, as a second-year student, Yang passed the entrance examination and studied at National Southwestern Associated University. He received a Bachelor of Science in 1942, with his thesis on the application of group theory to molecular spectra, under the supervision of Ta-You Wu.

Yang continued to study graduate courses there for two years under the supervision of Wang Zhuxi (J.S. Wang), working on statistical mechanics. In 1944, he received a Master of Science from National Tsing Hua University, which had moved to Kunming during the Sino-Japanese War (1937–1945). Yang was then awarded a scholarship from the Boxer Indemnity Scholarship Program, set up by the United States government using part of the money China had been forced to pay following the Boxer Rebellion. His departure for the United States was delayed for one year, during which time he taught in a middle school and studied field theory.

Yang entered the University of Chicago in January 1946 and studied with Edward Teller. He received a Doctor of Philosophy in 1948.

Career

Yang remained at the University of Chicago for a year as an assistant to Enrico Fermi. In 1949 he was invited to do his research at the Institute for Advanced Study in Princeton, New Jersey, where he began a period of fruitful collaboration with Tsung-Dao Lee. Lee and Yang published 32 papers together. He was made a permanent member of the Institute in 1952, and full professor in 1955. In 1963, Princeton University Press published his textbook, Elementary Particles. In 1965 he moved to Stony Brook University, where he was named the Albert Einstein Professor of Physics and the first director of the newly founded Institute for Theoretical Physics. Today this institute is known as the C. N. Yang Institute for Theoretical Physics. Yang retired from Stony Brook University in 1999.

Yang visited the Chinese mainland in 1971 for the first time after the thaw in China–US relations, and subsequently worked to help the Chinese physics community rebuild the research atmosphere, which later eroded due to political movements during the Cultural Revolution. After retiring from Stony Brook, he returned to Beijing as an honorary director of Tsinghua University, where he was the first Huang Jibei-Lu Kaiqun Professor at the Center for Advanced Study (CASTU). He was also one of the two Shaw Prize Founding Members and was a Distinguished Professor-at-Large at the Chinese University of Hong Kong.

Yang helped to establish the Theoretical Physics Division at the Chern Institute of Mathematics in 1986 at the request of Shiing-Shen Chern who was serving as the inaugural director of the Institute at the time.

Personal life and death

Yang married Tu Chih-li, a teacher, in 1950; they had two sons and a daughter together. His father-in-law was the Kuomintang general Du Yuming. Tu died in October 2003. In January 2005, Yang married Weng Fan, a university student. They met in 1995 at a physics seminar; the couple reestablished contact in February 2004 when Yang moved to China to become affiliated with Tsinghua University. Yang called Weng, who was 54 years his junior, his "final blessing from God".

Yang obtained U.S. citizenship during his research within the country. According to the state-run Xinhua News Agency, Yang said the decision was painful as his father never forgave him for that. According to Xinhua and other mainstream Chinese media, he formally renounced his American citizenship on April 1, 2015. He acknowledged that while the U.S. was a beautiful country that gave him good opportunities to study science, China since his youth had offered the best secondary and undergraduate institutions, though the US had the top graduate studies. However, circumstances changed in favor of China's growth by the turn of the century.

His son Guangnuo was a computer scientist. His second son Guangyu is an astronomer, and his daughter Youli is a doctor.

Yang turned 100 on 1 October 2022, and died in Beijing on 18 October 2025, at the age of 103. The day after the announcement of his death, people gathered and waited in line at Tsinghua University to pay tribute to Yang.

Views on the CEPC

Yang was known for having opposed the construction of the Circular Electron Positron Collider (CEPC), a 100 km circumference particle collider in China that would study the Higgs boson. He characterized the project as "guess" work without guaranteed results. Yang said that "even if they see something with the machine, it's not going to benefit the life of Chinese people any sooner."

#411 Re: Jai Ganesh's Puzzles » General Quiz » 2026-02-13 19:58:27

Hi,

#10747. What does the term in Geography Culture mean?

#10748. What does the term in Geography Culvert mean?

#412 Re: Jai Ganesh's Puzzles » English language puzzles » 2026-02-13 19:46:10

Hi,

#5943. What does the noun mandate mean?

#5944. What does the adjective mandatory mean?

#413 This is Cool » Anion » 2026-02-13 19:22:57

- Jai Ganesh

- Replies: 0

Anion

Gist

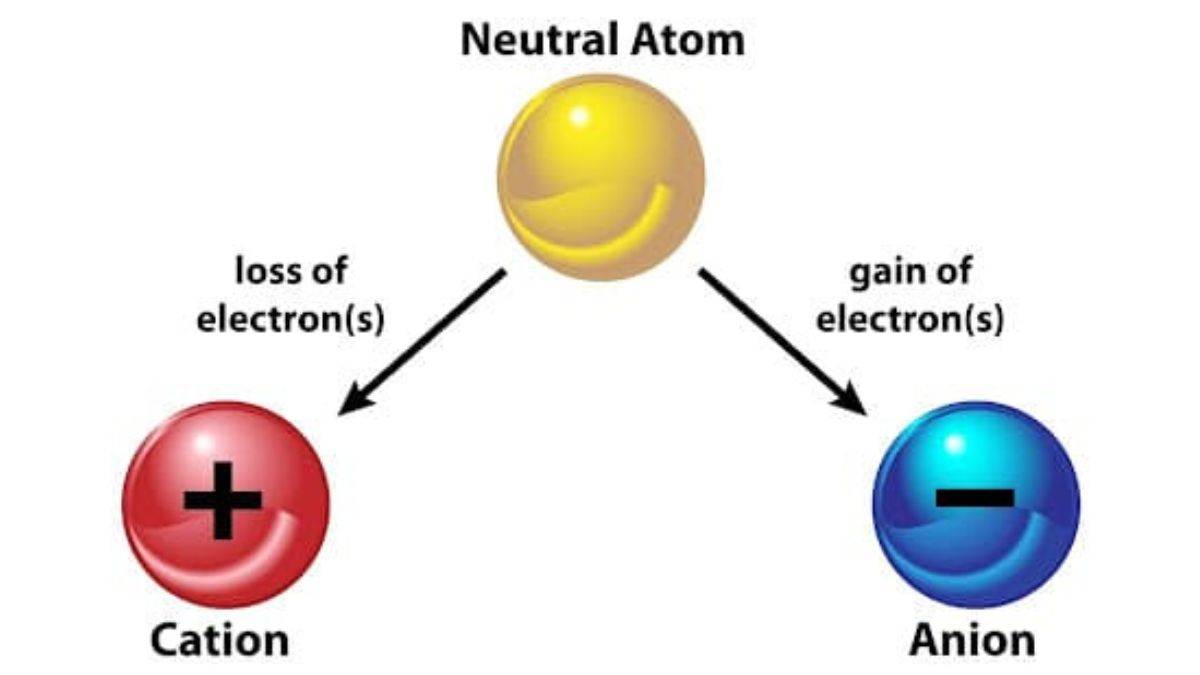

An anion is an atom or molecule that carries a net negative electrical charge because it has gained one or more electrons, resulting in more electrons than protons. Formed typically by non-metals, anions are attracted to the positive electrode (anode) during electrolysis and readily combine with positive ions (cations) to form ionic compounds. Common anions include chloride and sulfate.

Anions are negatively-charged ions (meaning they have more electrons than protons due to having gained one or more electrons). Cations are also called positive ions, and anions are also called negative ions.

Summary

Anions are atoms or groups of atoms that have a negative electric charge. An anion has more electrons in its atomic orbitals than it has protons in its atomic nucleus. The opposite of an anion is a cation, which has a positive charge.

The name "anion" comes from the words anode and ion. In an electrochemical cell, anions are attracted to the positively charged anode.

Anions can be monatomic, made of only one atom, or polyatomic, made of multiple atoms. Anions can exist on their own only as gases: to make a solid, ionic liquid, or solution the total electrical charge must be zero, meaning a mix of anions and cations.
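
The zero-total-charge condition above can be sketched as a small calculation. This is only an illustration: the function name `neutral_ratio` is made up for this post, and the aluminium example is an assumption added here, not something taken from the text.

```python
from math import gcd


def neutral_ratio(cation_charge: int, anion_charge: int) -> tuple[int, int]:
    """Smallest whole-number (cations, anions) count whose charges sum to zero."""
    if cation_charge <= 0 or anion_charge >= 0:
        raise ValueError("expected a positive cation charge and a negative anion charge")
    anion_size = abs(anion_charge)
    common = gcd(cation_charge, anion_size)
    # Each ion count is the magnitude of the *other* ion's charge, reduced.
    return anion_size // common, cation_charge // common


print(neutral_ratio(1, -1))  # sodium (1+) with chloride (1-)
print(neutral_ratio(1, -2))  # sodium (1+) with oxide (2-)
print(neutral_ratio(3, -2))  # aluminium (3+) with oxide (2-), an added example
```

The returned pair is the count of cations and anions in the simplest neutral formula, so sodium and chloride pair one to one, as in table salt.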

Properties

In many crystals the anions are bigger; the little cations fit into the spaces between them.

All anions are Brønsted bases: they can make a chemical bond with a proton, H⁺, to form a conjugate acid.

Examples

Oxide is the most common anion on Earth. It is made from an oxygen atom with two extra electrons. The formula for oxide is written O²⁻. The oxide ion reacts with water, so it cannot be dissolved to make a solution.

Chloride is a monatomic anion made from an atom of chlorine with an extra electron. The chemical formula is written Cl⁻. Chloride is the most common anion in seawater.

Sulfate is the second most common anion in seawater after chloride. It is made of a sulfur atom, four oxygen atoms, and two extra electrons. Sulfate is an oxyanion, a type of anion made of a central element (like sulfur) surrounded by oxygen atoms.

Hydroxide is a polyatomic anion made of one oxygen atom, one hydrogen atom, and one extra electron. It has the formula OH⁻. Hydroxide is the conjugate base of water, so it is the strongest base that can be mixed with water. Other strong bases, including the oxide anion, react with water to make hydroxide.

Details

Anions are negatively charged ions formed when an atom or group of atoms gains one or more valence electrons. The term "anion" comes from "anode ions," reflecting their movement toward the positive terminal in an electrolytic solution. Anions, along with positively charged cations, form ionic bonds that are fundamental to many chemical compounds, such as table salt (sodium chloride). Common examples of anions include fluoride, chloride, and sulfate, each named based on their elemental origin or structural characteristics, following specific nomenclature rules established by the International Union of Pure and Applied Chemistry (IUPAC).

The formation of anions is closely tied to the electronic structure of atoms, which consists of a dense nucleus surrounded by electron shells containing orbitals that dictate the arrangement and behavior of electrons. Elements tend to achieve stability by completing their outer electron shell, often following the octet rule, which promotes the formation of anions in various chemical reactions. Understanding anions is essential for grasping broader concepts related to atomic structure, chemical bonding, and the periodic table's organization.

The basic structure of anions is defined, and the development of the modern theory of atomic structure is elaborated. Electronic structure is fundamental to all chemical behaviors and is responsible for the relationships seen in the periodic table.

The Nature of Anions

An anion is any atom or group of atoms that bears a net negative charge due to the presence of one or more extra valence electrons. The term is a contraction of "anode ions," which is a reference to the fact that when a direct electric current is applied to an electrolytic solution, negatively charged ions are attracted to the anode, or positive terminal, of the source of the current. By the same token, cations, from "cathode ions," have a net positive charge and are attracted to the cathode, or negative terminal, of the current source. Anions and cations often combine to form compounds held together with ionic bonds; one common example is sodium chloride (NaCl), better known as table salt, which is created when the sodium cation, Na⁺, bonds to the chloride anion, Cl⁻.

The formation of any ion is the result of an atom or molecule gaining or losing one or more valence electrons. This is most apparent in monatomic (single-atom) ions, in which the electrical charge is equal to the oxidation state. For example, the halogens (fluorine, chlorine, bromine, iodine, and astatine) are all highly electronegative, meaning that they readily accept an extra valence electron so that their outer electron shell is completely full and therefore stable. The resulting anions are called fluoride, chloride, bromide, iodide, and astatide, respectively, and they have an electrical charge of 1− because they gained one electron and thus one unit of negative charge. Similarly, the chalcogens oxygen (O) and sulfur (S) readily accept two extra electrons to form the oxide ion, O²⁻, and the sulfide ion, S²⁻.

Compound ions in which a central atom is bonded to a number of oxygen atoms are extremely common. These oxoanions tend to form very stable compounds and are the basic materials of many minerals.

The Electronics of Anion Formation

According to the modern theory of atomic structure, each atom contains a very small, extremely dense nucleus that holds more than 99.9 percent of the atom’s mass and all of its positive electrical charge. The nucleus is surrounded by a diffuse and comparatively very large cloud of electrons containing all of the atom’s negative electrical charge. These electrons are allowed to possess only very specific energies. This restricts their movement around the nucleus to specific regions called "electron shells." Within each shell are well-defined regions called "orbitals." The strict geometric arrangement of the orbitals regulates the formation of chemical bonds between atoms.

Each shell and orbital is subject to a number of restrictions that dictate how many electrons it can hold. There are four different types of electron orbitals, designated s, p, d, and f, each of which can contain a specific number of electrons: s orbitals can hold a maximum of two electrons; p orbitals, a maximum of six; d orbitals, a maximum of ten; and f orbitals, a maximum of fourteen. One or more of these orbitals make up an electron shell. The various electron shells are indicated by an integer value known as the "principal quantum number," starting with 1 for the innermost shell, typically referred to as the "n = 1 shell." The standard notation to describe an electron orbital is the principal quantum number, followed by the type of orbital, followed by a superscript number indicating how many electrons it holds. For example, helium has only one s orbital, which is completely full, so its electron configuration is represented as 1s². The first p orbital appears in the n = 2 shell, the first d orbital in the n = 3 shell, and the first f orbital in the n = 4 shell. Due to variances in energy levels, electrons usually fill atomic orbitals in the order 1s, 2s, 2p, 3s, 3p, 4s, 3d, 4p, 5s, 4d, 5p, 6s, 4f, 5d, 6p, 7s . . . rather than in strict numerical order.
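
The filling order quoted above can be reproduced with the Madelung (n + l) rule: sort the orbitals by the sum of the principal quantum number n and the orbital index l (0 for s, 1 for p, 2 for d, 3 for f), breaking ties with the lower n. The text does not name this rule, so treat the sketch below as an illustration under that assumption; the function names are made up here, and real atoms such as chromium and copper deviate from the simple order.

```python
ORBITAL_LETTERS = "spdf"  # the four orbital types named in the text


def capacity(l: int) -> int:
    """Maximum electrons for an orbital type: 2 (s), 6 (p), 10 (d), 14 (f)."""
    return 2 * (2 * l + 1)


def filling_order(max_n: int = 7) -> list[tuple[int, int]]:
    """Orbitals as (n, l) pairs, sorted by n + l, ties broken by lower n."""
    orbitals = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    return sorted(orbitals, key=lambda nl: (nl[0] + nl[1], nl[0]))


def label(n: int, l: int) -> str:
    """Text label such as '3d' for n = 3, l = 2."""
    return f"{n}{ORBITAL_LETTERS[l]}"


def configuration(electrons: int) -> str:
    """Idealized configuration; electron counts are written inline, e.g. '1s2'."""
    parts = []
    for n, l in filling_order():
        if electrons <= 0:
            break
        placed = min(electrons, capacity(l))
        parts.append(f"{label(n, l)}{placed}")
        electrons -= placed
    return " ".join(parts)


print(" ".join(label(n, l) for n, l in filling_order()[:16]))
print(configuration(2))   # helium
print(configuration(10))  # neon
```

The first line printed lists the same sixteen orbitals as the order given in the paragraph above.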

The outermost electron shell is the valence shell, and in all elements, except the noble gases (helium, neon, argon, krypton, xenon, and radon), the valence shell is incompletely occupied. Because of this, the noble gases are often called "inert gases," reflecting the fact that they are far less chemically reactive than elements whose valence shells are not filled. All noble gases except for helium have eight electrons in their valence shell, as a shell with eight electrons approximates a stable closed-shell configuration of s²p⁶. This is the basis of the octet rule, which states that atoms of lower atomic numbers tend to achieve the greatest stability when they have eight electrons in their valence shells. The closer an element is to having eight valence electrons, the more likely it is to undergo ionization or a chemical reaction in order to achieve a stable configuration, either by gaining enough electrons to complete the octet or by losing all valence electrons so that the next-highest completed shell becomes the outermost shell. Elements with similar electron distributions in their valence shells typically exhibit similar chemical behaviors, which is the basis on which the periodic table of the elements is arranged.

Naming the Anions

The rules for naming various chemical species are established and standardized by the International Union of Pure and Applied Chemistry (IUPAC). Monatomic anions and polyatomic anions composed of a single element are named by adding the suffix -ide to the name of the element, either instead of or in addition to the existing suffix. Thus a sulfur anion becomes sulfide, a xenon anion becomes xenonide, a potassium anion becomes potasside, and so on. In some cases, the suffix is added to the element’s Latin name instead of its common name; for example, an anion of silver, which has the Latin name argentum, is called argentide.

Oxoanions are generally named for the central atom of the anion and the number of oxygen atoms that surround it, with the charge number given in parentheses at the end. An oxoanion consisting of a sulfur atom surrounded by three oxygen atoms has the formal name trioxidosulfate, while one with a sulfur atom and four oxygen atoms is called tetraoxidosulfate. The common (nonsystematic) names of these two oxoanions are sulfite and sulfate, respectively, following the convention that the oxoanion with fewer oxygen atoms takes the suffix -ite and the one with more oxygen atoms takes the suffix -ate. If one element is capable of forming more than two different oxoanions, as is the case with chlorine, the prefixes hypo- and per- are used as well, so that the common name of ClO⁻ is hypochlorite, ClO₂⁻ is chlorite, ClO₃⁻ is chlorate, and ClO₄⁻ is perchlorate.
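
The common-name convention above can be written out as a lookup. This is a rough sketch covering only the two cases the text describes (a series of two oxoanions and a series of four); the function name and the idea of passing a name stem such as "sulf" or "chlor" are assumptions made for illustration.

```python
def common_oxoanion_names(stem: str, series_size: int) -> list[str]:
    """Common names ordered from fewest to most oxygen atoms.

    stem is the element's name stem, e.g. 'sulf' or 'chlor' (illustrative).
    """
    if series_size == 2:
        # Fewer oxygens takes -ite, more oxygens takes -ate.
        return [f"{stem}ite", f"{stem}ate"]
    if series_size == 4:
        # hypo- marks the fewest oxygens, per- the most.
        return [f"hypo{stem}ite", f"{stem}ite", f"{stem}ate", f"per{stem}ate"]
    raise ValueError("only series of two or four oxoanions are covered here")


print(common_oxoanion_names("sulf", 2))
print(common_oxoanion_names("chlor", 4))
```

For chlorine the list lines up with one, two, three, and four oxygen atoms, matching the four names given in the paragraph above.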

Principal Terms

cation: any chemical species bearing a net positive electrical charge, which causes it to be drawn toward the negative pole, or cathode, of an electrochemical cell.

ionic bond: a type of chemical bond formed by mutual attraction between two ions of opposite charges.

ionization: the process by which an atom or molecule loses or gains one or more electrons to acquire a net positive or negative electrical charge.

oxoanion: an ion consisting of one or more central atoms bonded to a number of oxygen atoms and bearing a net negative electrical charge.

valence electron: an electron that occupies the outermost or valence shell of an atom and participates in chemical processes such as bond formation and ionization.

Additional Information

An anion is an atom that has a negative charge. So, given that anion definition, the answer to the question "Is an anion negative?" is yes. Anions are a type of atom, the smallest particle of an element that still retains the element's properties. Atoms are made of three types of subatomic particles: neutrons, protons, and electrons. Neutrons are neutrally charged subatomic particles and, along with protons, they make up the nucleus. Protons have a positive charge. Electrons are very small subatomic particles that orbit the nucleus in levels called shells. Electrons have a negative charge.

Anions are created when an atom gains one or more electrons. The number of electrons gained by an atom is determined by how many are needed to fill its outer shell. For example, fluorine has seven electrons in its outer shell, but a full outer shell contains eight electrons. Thus, fluorine tends to gain one electron to fill its outer shell and generally has a charge of -1. Oxygen, on the other hand, has an outer shell of six electrons. This means it requires two electrons to complete its outer shell and tends to carry a charge of -2.
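
The arithmetic in the paragraph above is just "eight minus the number of outer-shell electrons". A minimal sketch, assuming the simple octet picture described here and limited to atoms with five, six, or seven outer electrons (the function name is made up for illustration):

```python
def typical_anion_charge(valence_electrons: int) -> int:
    """Charge after gaining enough electrons to reach eight in the outer shell."""
    if not 5 <= valence_electrons <= 7:
        raise ValueError("this sketch only covers 5, 6, or 7 outer-shell electrons")
    electrons_gained = 8 - valence_electrons
    return -electrons_gained


print(typical_anion_charge(7))  # fluorine: seven outer electrons
print(typical_anion_charge(6))  # oxygen: six outer electrons
```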

Uses for Anions

Fluoride ion is widely used in water supplies to help prevent tooth decay. Chloride is an important component in ion balance in blood. Iodide ion is needed by the thyroid gland to make the hormone thyroxine.

Summary

* Anions are formed by the addition of one or more electrons to the outer shell of an atom.

* Group 17 elements add one electron to the outer shell, group 16 elements add two electrons, and group 15 elements add three electrons.

* Anions are named by dropping the ending of the element's name and adding -ide.

#414 Re: This is Cool » Miscellany » 2026-02-13 18:32:41

2495) Adhesive

Gist

An adhesive is a substance—such as glue, cement, or paste—that binds materials together through surface attachment, resisting separation. Primarily divided into natural (e.g., starch) and synthetic (e.g., epoxy, acrylic) types, they are used for bonding, sealing, and coating in industrial and consumer applications.

Adhesives are used to bond materials together across virtually every industry, from construction, automotive, and aerospace to everyday household tasks. They create strong, durable connections that distribute stress evenly, join dissimilar materials (like metal to plastic), and offer aesthetic advantages over mechanical fasteners like nails or screws by avoiding holes and creating smoother finishes. They're essential for product assembly, packaging (like sealing boxes), labeling (stickers, bottle labels), and repairs, enabling lighter, stronger, and more complex designs in everything from smartphones to spacecraft.

Summary

Adhesive, also known as glue, cement, mucilage, or paste, is any non-metallic substance applied to one or both surfaces of two separate items that binds them together and resists their separation.

The use of adhesives offers certain advantages over other binding techniques such as sewing, mechanical fastenings, and welding. These include the ability to bind different materials together, the more efficient distribution of stress across a joint, the cost-effectiveness of an easily mechanized process, and greater flexibility in design. Disadvantages of adhesive use include decreased stability at high temperatures, relative weakness in bonding large objects with a small bonding surface area, and greater difficulty in separating objects during testing. Adhesives are typically classified by their method of adhesion and then as reactive or non-reactive, depending on whether the adhesive chemically reacts in order to harden. Alternatively, they can be organized either by their starting physical phase or by whether their raw stock is of natural or synthetic origin.

Adhesives may be found naturally or produced synthetically. The earliest human use of adhesive-like substances was approximately 200,000 years ago, when Neanderthals produced tar from the dry distillation of birch bark for use in binding stone tools to wooden handles. The first references to adhesives in literature appeared approximately 2000 BC. The Greeks and Romans made great contributions to the development of adhesives. In Europe, glue was not widely used until the period AD 1500–1700. From then until the 1900s increases in adhesive use and discovery were relatively gradual. Only since the 20th century has the development of synthetic adhesives accelerated rapidly, and innovation in the field continues to the present.

Details

An adhesive is any substance that is capable of holding materials together in a functional manner by surface attachment that resists separation. “Adhesive” as a general term includes cement, mucilage, glue, and paste—terms that are often used interchangeably for any organic material that forms an adhesive bond. Inorganic substances such as portland cement also can be considered adhesives, in the sense that they hold objects such as bricks and beams together through surface attachment, but this article is limited to a discussion of organic adhesives, both natural and synthetic.

Natural adhesives have been known since antiquity. Egyptian carvings dating back 3,300 years depict the gluing of a thin piece of veneer to what appears to be a plank of sycamore. Papyrus, an early nonwoven fabric, contained fibres of reedlike plants bonded together with flour paste. Bitumen, tree pitches, and beeswax were used as sealants (protective coatings) and adhesives in ancient and medieval times. The gold leaf of illuminated manuscripts was bonded to paper by egg white, and wooden objects were bonded with glues from fish, horn, and cheese. The technology of animal and fish glues advanced during the 18th century, and in the 19th century rubber- and nitrocellulose-based cements were introduced. Decisive advances in adhesives technology, however, awaited the 20th century, during which time natural adhesives were improved and many synthetics came out of the laboratory to replace natural adhesives in the marketplace. The rapid growth of the aircraft and aerospace industries during the second half of the 20th century had a profound impact on adhesives technology. The demand for adhesives that had a high degree of structural strength and were resistant to both fatigue and severe environmental conditions led to the development of high-performance materials, which eventually found their way into many industrial and domestic applications.

This article begins with a brief explanation of the principles of adhesion and then proceeds to a review of the major classes of natural and synthetic adhesives.

Adhesion

In the performance of adhesive joints, the physical and chemical properties of the adhesive are the most important factors. Also important in determining whether the adhesive joint will perform adequately are the types of adherend (that is, the components being joined—e.g., metal alloy, plastic, composite material) and the nature of the surface pretreatment or primer. These three factors—adhesive, adherend, and surface—have an impact on the service life of the bonded structure. The mechanical behaviour of the bonded structure in turn is influenced by the details of the joint design and by the way in which the applied loads are transferred from one adherend to the other.

Implicit in the formation of an acceptable adhesive bond is the ability of the adhesive to wet and spread on the adherends being joined. Attainment of such interfacial molecular contact is a necessary first step in the formation of strong and stable adhesive joints. Once wetting is achieved, intrinsic adhesive forces are generated across the interface through a number of mechanisms. The precise nature of these mechanisms has been the object of physical and chemical study since at least the 1960s, with the result that a number of theories of adhesion exist. The main mechanism of adhesion is explained by the adsorption theory, which states that substances stick primarily because of intimate intermolecular contact. In adhesive joints this contact is attained by intermolecular or valence forces exerted by molecules in the surface layers of the adhesive and adherend.

In addition to adsorption, four other mechanisms of adhesion have been proposed. The first, mechanical interlocking, occurs when adhesive flows into pores in the adherend surface or around projections on the surface. The second, interdiffusion, results when liquid adhesive dissolves and diffuses into adherend materials. In the third mechanism, adsorption and surface reaction, bonding occurs when adhesive molecules adsorb onto a solid surface and chemically react with it. Because of the chemical reaction, this process differs in some degree from simple adsorption, described above, although some researchers consider chemical reaction to be part of a total adsorption process and not a separate adhesion mechanism. Finally, the electronic, or electrostatic, attraction theory suggests that electrostatic forces develop at an interface between materials with differing electronic band structures. In general, more than one of these mechanisms play a role in achieving the desired level of adhesion for various types of adhesive and adherend.

In the formation of an adhesive bond, a transitional zone arises in the interface between adherend and adhesive. In this zone, called the interphase, the chemical and physical properties of the adhesive may be considerably different from those in the noncontact portions. It is generally believed that the interphase composition controls the durability and strength of an adhesive joint and is primarily responsible for the transference of stress from one adherend to another. The interphase region is frequently the site of environmental attack, leading to joint failure.

The strength of adhesive bonds is usually determined by destructive tests, which measure the stresses set up at the point or line of fracture of the test piece. Various test methods are employed, including peel, tensile lap shear, cleavage, and fatigue tests. These tests are carried out over a wide range of temperatures and under various environmental conditions. An alternate method of characterizing an adhesive joint is by determining the energy expended in cleaving apart a unit area of the interphase. The conclusions derived from such energy calculations are, in principle, completely equivalent to those derived from stress analysis.

Adhesive materials

Virtually all synthetic adhesives and certain natural adhesives are composed of polymers, which are giant molecules, or macromolecules, formed by the linking of thousands of simpler molecules known as monomers. The formation of the polymer (a chemical reaction known as polymerization) can occur during a “cure” step, in which polymerization takes place simultaneously with adhesive-bond formation (as is the case with epoxy resins and cyanoacrylates), or the polymer may be formed before the material is applied as an adhesive, as with thermoplastic elastomers such as styrene-isoprene-styrene block copolymers. Polymers impart strength, flexibility, and the ability to spread and interact on an adherend surface—properties that are required for the formation of acceptable adhesion levels.

Natural adhesives

Natural adhesives are primarily of animal or vegetable origin. Though the demand for natural products has declined since the mid-20th century, certain of them continue to be used with wood and paper products, particularly in corrugated board, envelopes, bottle labels, book bindings, cartons, furniture, and laminated film and foils. In addition, owing to various environmental regulations, natural adhesives derived from renewable resources are receiving renewed attention. The most important natural products are described below.

Animal glue

The term animal glue usually is confined to glues prepared from mammalian collagen, the principal protein constituent of skin, bone, and muscle. When treated with acids, alkalies, or hot water, the normally insoluble collagen slowly becomes soluble. If the original protein is pure and the conversion process is mild, the high-molecular-weight product is called gelatin and may be used for food or photographic products. The lower-molecular-weight material produced by more vigorous processing is normally less pure and darker in colour and is called animal glue.

Animal glue traditionally has been used in wood joining, book bindery, sandpaper manufacture, heavy gummed tapes, and similar applications. In spite of its advantage of high initial tack (stickiness), much animal glue has been modified or entirely replaced by synthetic adhesives.

Casein glue

This product is made by dissolving casein, a protein obtained from milk, in an aqueous alkaline solvent. The degree and type of alkali influence product behaviour. In wood bonding, casein glues generally are superior to true animal glues in moisture resistance and aging characteristics. Casein also is used to improve the adhering characteristics of paints and coatings.

Blood albumen glue

Glue of this type is made from serum albumen, a blood component obtainable from either fresh animal blood or dried soluble blood powder to which water has been added. Addition of alkali to albumen-water mixtures improves adhesive properties. A considerable quantity of glue products from blood is used in the plywood industry.

Starch and dextrin

Starch and dextrin are extracted from corn, wheat, potatoes, or rice. They constitute the principal types of vegetable adhesives, which are soluble or dispersible in water and are obtained from plant sources throughout the world. Starch and dextrin glues are used in corrugated board and packaging and as a wallpaper adhesive.

Natural gums

Substances known as natural gums, which are extracted from their natural sources, also are used as adhesives. Agar, a marine-plant colloid (suspension of extremely minute particles), is extracted by hot water and subsequently frozen for purification. Algin is obtained by digesting seaweed in alkali and precipitating either the calcium salt or alginic acid. Gum arabic is harvested from acacia trees that are artificially wounded to cause the gum to exude. Another exudate is natural rubber latex, which is harvested from Hevea trees. Most gums are used chiefly in water-remoistenable products.

Synthetic adhesives

Although natural adhesives are less expensive to produce, most important adhesives are synthetic. Adhesives based on synthetic resins and rubbers excel in versatility and performance. Synthetics can be produced in a constant supply and with consistently uniform properties. In addition, they can be modified in many ways and are often combined to obtain the best characteristics for a particular application.

The polymers used in synthetic adhesives fall into two general categories—thermoplastics and thermosets. Thermoplastics provide strong, durable adhesion at normal temperatures, and they can be softened for application by heating without undergoing degradation. Thermoplastic resins employed in adhesives include nitrocellulose, polyvinyl acetate, vinyl acetate-ethylene copolymer, polyethylene, polypropylene, polyamides, polyesters, acrylics, and cyanoacrylics.

Thermosetting systems, unlike thermoplastics, form permanent, heat-resistant, insoluble bonds that cannot be modified without degradation. Adhesives based on thermosetting polymers are widely used in the aerospace industry. Thermosets include phenol formaldehyde, urea formaldehyde, unsaturated polyesters, epoxies, and polyurethanes. Elastomer-based adhesives can function as either thermoplastic or thermosetting types, depending on whether cross-linking is necessary for the adhesive to perform its function. The characteristics of elastomeric adhesives include quick assembly, flexibility, variety of type, economy, high peel strength, ease of modification, and versatility. The major elastomers employed as adhesives are natural rubber, butyl rubber, butadiene rubber, styrene-butadiene rubber, nitrile rubber, silicone, and neoprene.

An important challenge facing adhesive manufacturers and users is the replacement of adhesive systems based on organic solvents with systems based on water. This trend has been driven by restrictions on the use of volatile organic compounds (VOC), which include solvents that are released into the atmosphere and contribute to the depletion of ozone. In response to environmental regulation, adhesives based on aqueous emulsions and dispersions are being developed, and solvent-based adhesives are being phased out.

The polymer types noted above are employed in a number of functional types of adhesives. These functional types are described below.

Contact cements

Contact adhesives or cements are usually based on solvent solutions of neoprene. They are so named because they are usually applied to both surfaces to be bonded. Following evaporation of the solvent, the two surfaces may be joined to form a strong bond with high resistance to shearing forces. Contact cements are used extensively in the assembly of automotive parts, furniture, leather goods, and decorative laminates. They are effective in the bonding of plastics.

Structural adhesives

Structural adhesives are adhesives that generally exhibit good load-carrying capability, long-term durability, and resistance to heat, solvents, and fatigue. Ninety-five percent of all structural adhesives employed in original equipment manufacture fall into six structural-adhesive families: (1) epoxies, which exhibit high strength and good temperature and solvent resistance, (2) polyurethanes, which are flexible, have good peeling characteristics, and are resistant to shock and fatigue, (3) acrylics, a versatile adhesive family that bonds to oily parts, cures quickly, and has good overall properties, (4) anaerobics, or surface-activated acrylics, which are good for bonding threaded metal parts and cylindrical shapes, (5) cyanoacrylates, which bond quickly to plastic and rubber but have limited temperature and moisture resistance, and (6) silicones, which are flexible, weather well out-of-doors, and provide good sealing properties. Each of these families can be modified to provide adhesives that have a range of physical and mechanical properties, cure systems, and application techniques.

Polyesters, polyvinyls, and phenolic resins are also used in industrial applications but have processing or performance limitations. High-temperature adhesives, such as polyimides, have a limited market.

Hot-melt adhesives

Hot-melt adhesives are employed in many nonstructural applications. Based on thermoplastic resins, which melt at elevated temperatures without degrading, these adhesives are applied as hot liquids to the adherend. Commonly used polymers include polyamides, polyesters, ethylene-vinyl acetate, polyurethanes, and a variety of block copolymers and elastomers such as butyl rubber, ethylene-propylene copolymer, and styrene-butadiene rubber.

Hot-melts find wide application in the automotive and home-appliance fields. Their utility, however, is limited by their lack of high-temperature strength, the upper use temperature for most hot-melts being in the range of 40–65 °C (approximately 100–150 °F). In order to improve performance at higher temperatures, so-called structural hot-melts—thermoplastics modified with reactive urethanes, moisture-curable urethanes, or silane-modified polyethylene—have been developed. Such modifications can lead to enhanced peel adhesion, higher heat capability (in the range of 70–95 °C [160–200 °F]), and improved resistance to ultraviolet radiation.
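
The rounded Fahrenheit figures quoted above can be checked with the standard Celsius-to-Fahrenheit conversion. A quick sketch (the function name is just for illustration):

```python
def celsius_to_fahrenheit(celsius: float) -> float:
    """Standard conversion: multiply by 9/5, then add 32."""
    return celsius * 9 / 5 + 32


# Upper use temperatures for ordinary and structural hot-melts, from the text.
for low, high in [(40, 65), (70, 95)]:
    print(f"{low}-{high} °C is {celsius_to_fahrenheit(low)}-{celsius_to_fahrenheit(high)} °F")
```

The exact values come out slightly off the round numbers in the text, which is consistent with the word "approximately" there.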

Pressure-sensitive adhesives

Pressure-sensitive adhesives, or PSAs, represent a large industrial and commercial market in the form of adhesive tapes and films directed toward packaging, mounting and fastening, masking, and electrical and surgical applications. PSAs are capable of holding adherends together when the surfaces are mated under briefly applied pressure at room temperature. (The difference between these adhesives and contact cements is that the latter require no pressure to bond.)

Materials used to formulate PSA systems include natural and synthetic rubbers, thermoplastic elastomers, polyacrylates, polyvinylalkyl ethers, and silicones. These polymers, in both solvent-based and hot-melt formulations, are applied as a coating onto a substrate of paper, cellophane, plastic film, fabric, or metal foil. As solvent-based adhesive formulations are phased out in response to environmental regulations, water-based PSAs will find greater use.

Ultraviolet-cured adhesives

Ultraviolet-cured adhesives became available in the early 1960s but developed rapidly with advances in chemical and equipment technology during the 1980s. These types of adhesive normally consist of a monomer (which also can serve as the solvent) and a low-molecular-weight prepolymer combined with a photoinitiator. Photoinitiators are compounds that break down into free radicals upon exposure to ultraviolet radiation. The radicals induce polymerization of the monomer and prepolymer, thus completing the chain extension and cross-linking required for the adhesive to form. Because of the low process temperatures and very rapid polymerization (from 2 to 60 seconds), ultraviolet-cured adhesives are making rapid advances in the electronic, automotive, and medical areas. They consist mainly of acrylated formulations of silicones, urethanes, and methacrylates. Combined ultraviolet–heat-curing formulations also exist.

Additional Information

An adhesive is a substance that holds two or more materials together in a practical way through cohesive forces and surface attachment. Adhesives can be made from a variety of substances, such as tree sap, beeswax, cement, and epoxy. Broadly, there are two adhesive categories: natural and synthetic. Most commercially available adhesives are synthetic, as they provide better consistency, bond strength, and adaptability than natural adhesives. Synthetic adhesives are further classified as consumer adhesives and industrial adhesives based on their application.

An adhesive’s chemical composition determines its application methods, usage, and bonding strength. Therefore, adhesive manufacturers need to custom-engineer modern synthetic adhesives based on the needs of different industries and applications.

Adhesive Applications In Various Industries

1. Bonding:

Bonding is a process in which two surfaces are practically joined together with the help of a suitable adhesive, such as epoxy adhesives. Adhesives are used for bonding materials in various industries, such as electronics, medical, food, optical, chemical and oil and gas industries to bond a range of metals, ceramics, glass, plastics, rubbers and composites.

2. Sealing:

Unlike bonding, which joins two surfaces together, sealing closes gaps and cavities to keep fluids, dust, and dirt from either entering or escaping. Sealants are widely used in the aerospace, oil and gas, chemical, electronic, optical, automotive and specialty OEM industries.

3. Coating:

Coatings are predominantly used in aerospace and in electronics (as conformal coatings), along with some other uses in the OEM and oil and chemical industries. Industrial adhesive coatings can provide superior protection against chemicals, dust and moisture, reduce friction, improve abrasion resistance and provide EMI/RFI shielding.

4. Potting:

Potting is an encapsulation method used in the electronics industry to cover small or large electrical components placed inside a housing with a suitable potting material that can withstand high temperatures and protect the circuits from moisture, dirt, dust and other harsh conditions. Potting and encapsulation are used for electronic and microelectronic components, such as sensors, motors, coils, transformers, capacitors, switches, connectors, power supplies, and cable harnesses.

5. Impregnation:

Impregnation is a method used to wet various fibres, such as glass, carbon, and aramid (for example, Kevlar), among others. Once the fibres are completely saturated with the resin, the resin is allowed to fully cure in place, forming a composite substrate. Such impregnated composites are widely used in the aerospace, windmill, electronics and electrical industries.

How Do Adhesives Work?

How an adhesive works depends on the type of bonding process used to attach the surfaces to each other. Mechanical adhesion and chemical adhesion are the two types of bonding that can be used to stick one surface to another with adhesives.

Usually, surfaces that need to be attached with adhesives have a lot of micropores. When filled with adhesive, these pores act as grips that keep another surface attached to them. This is called mechanical adhesion. With mechanical adhesion, the adhesive is applied in liquid form and gradually penetrates the pores during the drying and curing process. Keep in mind that mechanical bonding depends on the surface roughness and surface energy of the substrates to be bonded: the higher the surface energy and roughness of a substrate, the stronger the bond.

Chemical bonding, on the other hand, is completely different: the surface of one material bonds with another material at the molecular level. It is a complex process but a very effective one. Chemical bonding is further categorised into two types, adsorption and chemisorption, depending on the type of bond between the adhesive’s molecules and the surface. Although chemical adhesives are easily available, they are not a common form of adhesive used in industry.

Types of Adhesives

1. Hot Melt:

Hot melt is a type of thermoplastic polymer adhesive. Thermoplastic polymer adhesives are solid at room temperature. During the application process, they are liquefied by heating so that they can be applied as an adhesive. Hot melt adhesives are used for manufacturing and packaging purposes in a wide array of industries due to their superior bonding strength, versatility and setting time. They are also eco-friendly and safe, and they have a longer shelf life.

Different hot melt adhesives might have different softening points and hardening times as per their applications. Some of the common types of hot melt adhesives are polyurethane, metallocene, EVA hot melt, and polyethene hot melt adhesives.

Reactive hot melt adhesives are different from ordinary hot melt adhesives. Once applied to a surface and cured, they cannot be melted again, because they form additional chemical bonds during the curing process. This makes reactive hot melt adhesives a better choice than simple hot melt adhesives, as they have stronger adherence. Reactive hot melt (RHM) adhesives are high-quality adhesives also known for their heightened resistance to moisture and other chemicals and for their higher thermal stability.

2. Thermosetting:

Thermosetting adhesives are materials which cannot be re-melted after they have cured. Thermosetting adhesives are usually made of two parts, namely the resin and the hardener. However, one-part forms can also be found.

There are various types of thermosetting grades such as:

* Phenolics

* Epoxies

* Polyesters

* Polyurethanes

* Silicones

Out of these, epoxy thermosetting resins are the most commonly used in various industries such as electronics and electrical, oil and chemical, automotive, aerospace, optical etc. This is due to their excellent resistance to heat and harsh chemicals and superior mechanical bonding properties.

3. Pressure Sensitive:

Pressure-sensitive adhesives are low-modulus elastomers, which means they can be easily taken apart and are the best choice for light usage. Pressure-sensitive adhesives can be easily found in tapes, bandages, sticky notes, etc. They are non-structural adhesives and are not suitable for high-pressure industrial applications. However, they can be used for lighter and thinner material surfaces for which strong adhesives are not suitable. Pressure-sensitive adhesives are also cheaper than other adhesive materials and can be found more easily.

4. Contact Adhesive:

Contact adhesives are generally used to create strong mechanical bonds by applying adhesive to both surfaces that are supposed to be bonded together. Contact adhesives are also elastomeric which means the polymers used in the adhesives have rubber-like properties which helps them stay in shape. This gives contact adhesives excellent flexibility and mechanical strength. These adhesives are commonly used in the automotive industry, construction, aerospace and OEM for sealing and coating. They can also be found in rubber cement or countertop laminates. Contact adhesives are ideal for applications which require stability and durability.

Adhesive Application Methods

1. Manual:

As the name suggests, in this method, the applicator uses handheld devices and tools to apply adhesives to the surfaces. Manual adhesive application methods can include spraying, web coating, using a brush and a roller, curtain coating etc. Manual application is cost-effective and is recommended for smaller applications.

2. Glue Applicator:

Glue applicators are handheld devices that help you apply adhesives uniformly and at a faster rate than purely manual methods. These applicators contain a gun fitted with a cartridge containing the adhesive. A mixing tip is attached to the front of the cartridge to eliminate the need for any manual mixing. These semi-automatic devices enable higher speed, precision and efficiency. Glue applicators are ideal for medium to large-scale applications and are commonly used in the aerospace, electronics and optical industries to fuse small and detailed pieces of equipment.

3. Automatic Dispensing:

Automatic dispensing is ideal for fast-paced and high-volume environments where consistency and a quality finish are crucial. This method is more costly than the two above; however, automatic dispensing can increase efficiency, reduce waste and complete the task at a large scale. Meter-mix-dispense systems are used for two-component adhesives, and robotic dispensing is used for single-component adhesives.

Conclusion

Adhesives are used in almost every manufacturing and packaging industry and are an important part of their process. As we have seen, there are different types of adhesives with varying properties suitable for numerous industries.

#415 Dark Discussions at Cafe Infinity » Come Quotes - III » 2026-02-13 18:01:30

- Jai Ganesh

- Replies: 0

Come Quotes - III

1. Put two ships in the open sea, without wind or tide, and, at last, they will come together. Throw two planets into space, and they will fall one on the other. Place two enemies in the midst of a crowd, and they will inevitably meet; it is a fatality, a question of time; that is all. - Jules Verne

2. On two occasions I have been asked, 'Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?' I am not able rightly to apprehend the kind of confusion of ideas that could provoke such a question. - Charles Babbage

3. I've always believed that if you put in the work, the results will come. - Michael Jordan

4. From the deepest desires often come the deadliest hate. - Socrates

5. The most important thing about Spaceship Earth - an instruction book didn't come with it. - R. Buckminster Fuller

6. Hope smiles from the threshold of the year to come, whispering, 'It will be happier.' - Alfred Lord Tennyson

7. Tears come from the heart and not from the brain. - Leonardo da Vinci

8. What goes up must come down. - Isaac Newton.

#416 Jokes » Doughnut Jokes - II » 2026-02-13 17:43:27

- Jai Ganesh

- Replies: 0

Q: What kind of donuts can fly?

A: A plain one.

* * *

Q: What do you call a Jamaican donut?

A: Cinnamon.

* * *

Q: What did one donut say to the other?

A: I donut care.

* * *

Q: How did the police department figure out a perp stole a cop car?

A: The lojacked cop car went 5 hours without stopping at a Dunkin Donuts!

* * *

Donuts will make your clothes shrink.

* * *

#417 Re: Jai Ganesh's Puzzles » Doc, Doc! » 2026-02-13 17:35:30

Hi,

#2568. What does the medical term Humectant mean?

#418 Re: Jai Ganesh's Puzzles » 10 second questions » 2026-02-13 17:13:10

Hi,

#9853.

#419 Re: Jai Ganesh's Puzzles » Oral puzzles » 2026-02-13 17:02:57

Hi,

#6347.

#420 Re: Exercises » Compute the solution: » 2026-02-13 16:49:15

Hi,

2708.

#421 Science HQ » Acoustics » 2026-02-13 16:34:38

- Jai Ganesh

- Replies: 0

Acoustics

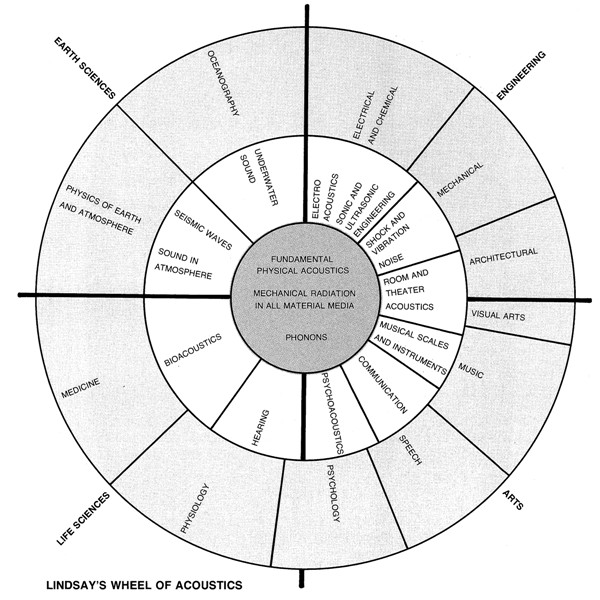

Gist

Acoustics: The branch of physics that is concerned with the study of sound is known as acoustics. We can define acoustics as the science that deals with the study of sound and its production, transmission, and effects.