Math Is Fun Forum

#1976 2023-11-27 15:35:09

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

1978) Cosmetics

Gist

Cosmetics are substances that you put on your face or body that are intended to improve your appearance.

Summary

Cosmetics are mixtures of chemical compounds derived from natural sources or created synthetically. Cosmetics have various purposes. Those designed for personal care and skin care can be used to cleanse or protect the body or skin. Cosmetics designed to enhance or alter one's appearance (makeup) can be used to conceal blemishes, enhance one's natural features (such as the eyebrows and eyelashes), add color to a person's face, or change the appearance of the face entirely to resemble a different person, creature or object. Because some makeup products contain harsh ingredients, individuals with acne-prone skin may be more likely to suffer breakouts when using them. Cosmetics can also be designed to add fragrance to the body.

Definition and etymology

The word cosmetics derives from the Greek κοσμητικὴ τέχνη (kosmētikē tekhnē), meaning "technique of dress and ornament", from κοσμητικός (kosmētikos), "skilled in ordering or arranging", and that from κόσμος (kosmos), meaning "order" and "ornament".

Legal definition

Though the legal definition of cosmetics in most countries is broader, in some Western countries, cosmetics are commonly taken to mean only makeup products, such as lipstick, mascara, eye shadow, foundation, blush, highlighter, bronzer, and several other product types.

In the United States, the Food and Drug Administration (FDA), which regulates cosmetics, defines cosmetics as products "intended to be applied to the human body for cleansing, beautifying, promoting attractiveness, or altering the appearance without affecting the body's structure or functions". This broad definition includes any material intended for use as an ingredient of a cosmetic product, with the FDA specifically excluding pure soap from this category.

Use

Cosmetics designed for skin care can be used to cleanse, exfoliate, protect and replenish the skin through the use of cleansers, toners, serums, moisturizers, eye creams, retinol, and balms. Cosmetics designed for more general personal care, such as shampoo, soap, and body wash, can be used to cleanse the body.

Cosmetics designed to enhance one's appearance (makeup) can be used to conceal blemishes, enhance one's natural features (such as the eyebrows and eyelashes), add color to a person's face and—in the case of more extreme forms of makeup used for performances, fashion shows and people in costume—can be used to change the appearance of the face entirely to resemble a different person, creature or object. Techniques for changing appearance include contouring, which aims to give shape to an area of the face.

Cosmetics can also be designed to add fragrance to the body.

Haircare products such as permanent waves, hair colours, and hairsprays are also classified as cosmetic products.

Details

A cosmetic is any of several preparations (excluding soap) that are applied to the human body for beautifying, preserving, or altering the appearance or for cleansing, colouring, conditioning, or protecting the skin, hair, nails, lips, eyes, or teeth.

The earliest cosmetics known to archaeologists were in use in Egypt in the fourth millennium BC, as evidenced by the remains of artifacts probably used for eye makeup and for the application of scented unguents. By the start of the Christian era, cosmetics were in wide use in the Roman Empire. Kohl (a preparation based on lampblack or antimony) was used to darken the eyelashes and eyebrows and to outline the eyelids. Rouge was used to redden the cheeks, and various white powders were employed to simulate or heighten fairness of complexion. Bath oils were widely used, and various abrasives were employed as dentifrices. The perfumes then in use were based on floral and herbal scents held by natural resins as fixatives.

Along with other cultural refinements, cosmetics disappeared from much of Europe with the fall of the Roman Empire in the 5th century AD. A revival did not take place until the Middle Ages, when crusaders returning from the Middle East brought cosmetics and perfumes back from their travels. Cosmetics reappeared in Europe on a wide scale in the Renaissance, and Italy (15th–16th centuries) and France (17th century on) became the chief centres of their manufacture. At first makeup was used only by royalty, their courtiers, and the aristocracy, but by the 18th century cosmetics had come into use by nearly all social classes. During the conservative Victorian era of the 19th century, the open use of cosmetics was frowned upon by respectable society in the United States and Britain. French women continued to use makeup, however, and France pioneered in the scientific development and manufacture of cosmetics during that time. After World War I any lingering Anglo-American prejudices against makeup were discarded, and new products and techniques of manufacture, packaging, and advertising have made cosmetics available on an unprecedented scale.

Skin-care preparations

Preparations for the care of the skin form a major line of cosmetics. The basic step in facial care is cleansing, and soap and water is still one of the most effective means. Cleansing creams and lotions are useful, however, if heavy makeup is to be removed or if the skin is sensitive to soap. Their active ingredient is essentially oil, which acts as a solvent and is combined in an emulsion (a mixture of liquids in which one is suspended as droplets in another) with water. Cold cream, one of the oldest beauty aids, originally consisted of water beaten into mixtures of such natural fats as lard or almond oil, but modern preparations use mineral oil combined with an emulsifier that helps disperse the oil in water. Emollients (softening creams) and night creams are heavier cold creams that are formulated to encourage a massaging action in application; they often leave a thick film on the face overnight, thus minimizing water loss from the skin during that period.

Hand creams and lotions are used to prevent or reduce the dryness and roughness arising from exposure to household detergents, wind, sun, and dry atmospheres. Like facial creams, they act largely by replacing lost water and laying down an oil film to reduce subsequent moisture loss while the body’s natural processes repair the damage.

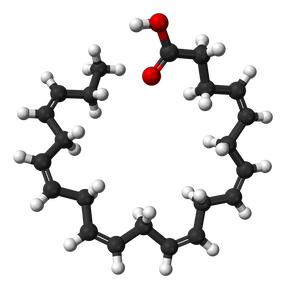

Foundations, face powder, and rouge

The classic foundation is vanishing cream, which is essentially an oil-in-water emulsion that contains about 15 percent stearic acid (a solid fatty acid), a small part of which is saponified (converted to a crystalline form) in order to provide the quality of sheen. Such creams leave no oily finish, though they provide an even, adherent base for face powder, which when dusted on top of a foundation provides a peach-skin appearance. Many ingredients are needed to provide the characteristics of a good face powder: talc helps it spread easily; chalk or kaolin gives it moisture-absorbing qualities; magnesium stearate helps it adhere; zinc oxide and titanium dioxide permit it to cover the skin more thoroughly; and various pigments add colour.

Heightened colour can be provided with rouge, which is used for highlighting the cheekbones; the more modern version is the blusher, which is used to blend more colour in the face. Small kits of compressed face powder and rouge or blusher are commonly carried by women in their handbags.

Eye makeup

Eye makeup, which is usually considered indispensable to a complete maquillage (full makeup), includes mascara to emphasize the eyelashes; eye shadow for the eyelids, available in many shades; and eyebrow pencils and eyeliner to pick out the edges of the lids. Because eye cosmetics are used adjacent to a very sensitive area, innocuity of ingredients is essential.

Lipstick

Lipstick is an almost universal cosmetic since, together with the eyes, the mouth is a leading feature, and it can be attractively coloured and textured. Lipstick has a fatty base that is firm in itself and yet spreads easily when applied. The colour is usually provided by pigment—usually reds but also titanium dioxide, a white compound that gives brightness and cover. Because lipsticks are placed on a sensitive surface and ultimately ingested, they are made to the highest safety specifications.

Other cosmetics

Hair preparations include soapless shampoos (soap leaves a film on the hair) that are actually scented detergents; products that are intended to give gloss and body to the hair, such as resin-based sprays, brilliantines, and pomades, as well as alcohol-based lotions; and hair conditioners that are designed to treat damaged hair. Permanent-wave and hair-straightening preparations use a chemical, ammonium thioglycolate, to release hair from its natural set. Hair colorants use permanent or semipermanent dyes to add colour to dull or mousy-coloured hair, and hydrogen peroxide is used to bleach hair to a blond colour.

Perfumes are present in almost all cosmetics and toiletries. Other products associated with grooming and hygiene include antiperspirants, mouthwashes, depilatories, nail polish, astringents, and bath crystals.

It appears to me that if one wants to make progress in mathematics, one should study the masters and not the pupils. - Niels Henrik Abel.

Nothing is better than reading and gaining more and more knowledge - Stephen William Hawking.

#1977 2023-11-28 17:47:28

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

1979) Studio

Gist

A studio is a room or space where an artist either teaches classes or does their work. If you make pottery, you might dream of one day having a studio in your back yard.

A studio is an artist's dedicated space for making art, whether they're a painter, photographer, or even a writer. Films are made in another type of studio, a facility for producing movies (and studio is also frequently used to mean the business entity that produces a movie). Musicians work in studios too, spaces specially designed for recording music. There's also a studio apartment, a one-room living space.

Details

A studio is the workroom of an artist or worker. It may be used for acting, architecture, painting, pottery (ceramics), sculpture, origami, woodworking, scrapbooking, photography, graphic design, filmmaking, animation, industrial design, radio or television production and broadcasting, or the making of music. The term is also used for the workroom of dancers, often specified as a dance studio.

The word studio is derived from the Italian studio, from the Latin studium, from studere, meaning "to study" or "to be zealous".

The French term for studio, atelier, in addition to designating an artist's studio is used to characterize the studio of a fashion designer.

Studio is also a metonym for the group of people who work within a particular studio.

Art studio

The studio of any artist, especially from the 15th to the 19th centuries, encompassed all the assistants, hence the designation of paintings as "from the workshop of..." or "studio of...". An art studio is sometimes called an atelier, especially in earlier eras. In contemporary English-language use, "atelier" can also refer to the Atelier Method, a training method for artists that usually takes place in a professional artist's studio.

The above-mentioned "method" calls upon that zeal for study to play a significant role in the production which occurs in a studio space. A studio is more or less artful to the degree that the artist who occupies it is committed to continuing education in his or her formal discipline. Academic curricula categorize studio classes in order to prepare students for the rigors of building sets of skills which require a continuity of practice in order to achieve growth and mastery of their artistic expression. A versatile and creative mind will embrace the opportunity of such practice to innovate and experiment, which develops uniquely individual qualities of each artist's expression. Thus the method raises and maintains an art studio space above the level of a mere production facility or workshop.

Safety can be a concern in studios: some painting materials must be handled, stored, and used properly to prevent poisoning, chemical burns, or fire.

Educational studio

In educational studios, students learn to develop skills related to design, ranging from architecture to product design. Specifically, educational studios are studio settings at a college where large numbers of students learn to draft and design with instructional help. Students colloquially refer to educational studios as "studio" and are known for staying up late into the night doing projects and socializing.

The studio environment in education takes two forms:

* The workspace where students do work, usually visually centered, in an open environment. This time and space lies outside instructional time, and faculty guidance is not available. It allows students to engage, help, and inspire each other while working.

* A type of class that takes the above-mentioned workspace and recreates its core component, an open working environment. It is distinguished by a topic of instruction, an isolated space, the presence of an instructor, and an added focus on directed criticism.

Pottery studio

Studio pottery is made by an individual potter working on his own in his studio, rather than in a ceramics factory (although there may be a design studio within a larger manufacturing site).

Production studios

Production studios are studios that act as centres for production in any of the arts; alternatively, they can be the financial and commercial entity behind such endeavours. In radio and television, a production studio is the place where programs, radio commercials, and television advertising are recorded for later broadcast.

Animation studio

Animation studios, like movie studios, may be production facilities or financial entities. In some cases, especially in anime, they continue the tradition of a studio where a master or group of talented individuals oversees the work of lesser artists and craftspersons in realising their vision. Animation studios are growing rapidly in number and include established firms such as Walt Disney and Pixar.

Comics studio

Artists or writers, predominantly those producing comics, still employ small studios of staff to assist in the creation of a comic strip, comic book or graphic novel. In the early days of Dan Dare, Frank Hampson employed a number of staff at his studio to help with the production of the strip. Eddie Campbell is another creator who has assembled a small studio of colleagues to help him in his art, and the comic book industry in the United States has based its production methods upon the studio system employed at its beginnings.

Another type of studio, common for instance in Spain, would produce work for-hire on license, with prospective buyers bringing in their own franchises for artwork and occasionally new stories.

Instructional studio

Many universities are creating studio settings for courses outside the artist's realm. There are several different projects along these lines, most notably SCALE-UP (Student-Centered Active Learning Environment for Undergraduate Programs), initiated at NC State.

Mastering studio

In audio, a mastering studio is a facility specialised in audio mastering. Tasks may include, but are not limited to, audio restoration, corrective and tone-shaping EQ, dynamic control, stereo or 5.1 surround editing, vinyl and tape transfers, vinyl cutting, and CD compilation. Depending on the quality of the original mix, the mastering engineer's role can range from making small corrections to drastically improving the overall sound of a mix. Typically, studios contain a combination of high-end analogue equipment with low-noise circuitry and digital hardware and plug-ins. Some may contain tape machines and disc cutting lathes. They may also contain full-range monitoring systems and be acoustically tuned to provide an accurate reproduction of the sound information contained in the original medium. The mastering engineer must prepare the file for its intended destination, which may be radio, CD, vinyl or digital distribution.

In video production, a mastering studio is a facility specialized in the post-production of video recordings. Tasks may include, but are not limited to, video editing, colour grading and correction, mixing, DVD authoring and audio mastering. The mastering engineer must prepare the file for its intended destination, which may be broadcast, DVD or digital distribution.

Acting studio

An "acting studio" is an institution or workspace (similar to a dance studio) in which actors rehearse and refine their craft. The Neighborhood Playhouse and Actors Studio are legendary acting studios in New York.

Movie studio

A movie studio is a company which develops, equips and maintains a controlled environment for filmmaking. This environment may be interior (sound stage), exterior (backlot) or both.

Photographic studio

A photographic studio is both a workspace and a corporate body. As a workspace it provides space to take, develop, print and duplicate photographs.

Radio studio

A radio studio is a room in which a radio program or show is produced, either for live broadcast or for recording for a later broadcast. The room is soundproofed to avoid unwanted noise being mixed into the broadcast.

Recording studio

A recording studio is a facility for sound recording which generally consists of at least two rooms: the studio or live room, and the control room, where the sound from the studio is recorded and manipulated. The rooms are designed to have good acoustics and to be well isolated from each other.

Television studio

A television studio is an installation in which television or video productions take place, for live television, for recording video tape, or for the acquisition of raw footage for post-production. The design of a studio is similar to, and derived from, movie studios, with a few amendments for the special requirements of television production. A professional television studio generally has several rooms, which are kept separate for noise and practicality reasons.

Zen, Yoga and martial arts studios

Many healing arts and activities such as zen, yoga, judo and karate are "studied" in a studio. It is common to see yoga studios and martial arts studios established in settings that previously served other uses and were also described as studios. These are not recreational centers or gyms in the traditional sense, but places where students of these activities practice or study their art.

#1978 2023-11-29 18:03:49

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

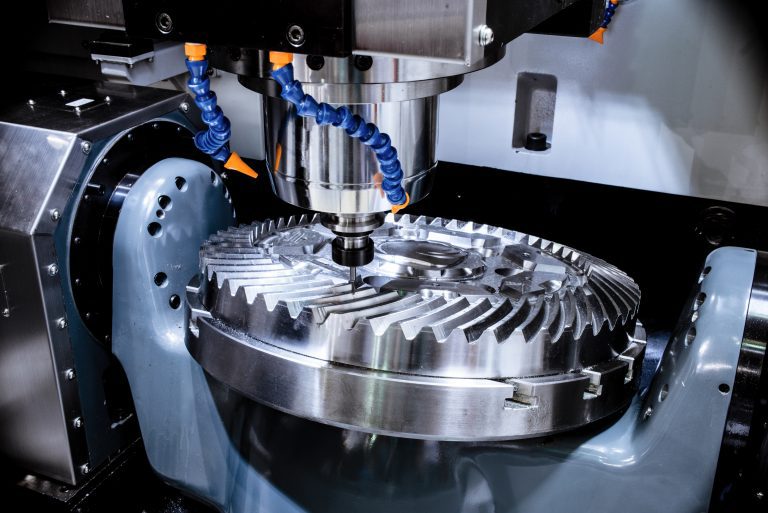

1980) Mechanical Engineering

Gist

Mechanical engineering is the study of physical machines that may involve force and movement. It is an engineering branch that combines engineering physics and mathematics principles with materials science, to design, analyze, manufacture, and maintain mechanical systems.

Summary

Mechanical engineering is the study of physical machines that may involve force and movement. It is an engineering branch that combines engineering physics and mathematics principles with materials science, to design, analyze, manufacture, and maintain mechanical systems. It is one of the oldest and broadest of the engineering branches.

Mechanical engineering requires an understanding of core areas including mechanics, dynamics, thermodynamics, materials science, design, structural analysis, and electricity. In addition to these core principles, mechanical engineers use tools such as computer-aided design (CAD), computer-aided manufacturing (CAM), and product lifecycle management to design and analyze manufacturing plants, industrial equipment and machinery, heating and cooling systems, transport systems, aircraft, watercraft, robotics, medical devices, weapons, and others.

Mechanical engineering emerged as a field during the Industrial Revolution in Europe in the 18th century; however, its development can be traced back several thousand years around the world. In the 19th century, developments in physics led to the development of mechanical engineering science. The field has continually evolved to incorporate advancements; today mechanical engineers are pursuing developments in such areas as composites, mechatronics, and nanotechnology. It also overlaps, to varying degrees, with aerospace engineering, metallurgical engineering, civil engineering, structural engineering, electrical engineering, manufacturing engineering, chemical engineering, industrial engineering, and other engineering disciplines. Mechanical engineers may also work in the field of biomedical engineering, specifically with biomechanics, transport phenomena, biomechatronics, bionanotechnology, and modelling of biological systems.

Details

Mechanical engineering is the branch of engineering concerned with the design, manufacture, installation, and operation of engines and machines and with manufacturing processes. It is particularly concerned with forces and motion.

History

The invention of the steam engine in the latter part of the 18th century, providing a key source of power for the Industrial Revolution, gave an enormous impetus to the development of machinery of all types. As a result, a new major classification of engineering dealing with tools and machines developed, receiving formal recognition in 1847 in the founding of the Institution of Mechanical Engineers in Birmingham, England.

Mechanical engineering has evolved from the practice by the mechanic of an art based largely on trial and error to the application by the professional engineer of the scientific method in research, design, and production. The demand for increased efficiency is continually raising the quality of work expected from a mechanical engineer and requiring a higher degree of education and training.

Mechanical engineering functions

Four functions of the mechanical engineer, common to all branches of mechanical engineering, can be cited. The first is the understanding of and dealing with the bases of mechanical science. These include dynamics, concerning the relation between forces and motion, such as in vibration; automatic control; thermodynamics, dealing with the relations among the various forms of heat, energy, and power; fluid flow; heat transfer; lubrication; and properties of materials.

Second is the sequence of research, design, and development. This function attempts to bring about the changes necessary to meet present and future needs. Such work requires a clear understanding of mechanical science, an ability to analyze a complex system into its basic factors, and the originality to synthesize and invent.

Third is production of products and power, which embraces planning, operation, and maintenance. The goal is to produce the maximum value with the minimum investment and cost while maintaining or enhancing longer term viability and reputation of the enterprise or the institution.

Fourth is the coordinating function of the mechanical engineer, including management, consulting, and, in some cases, marketing.

In these functions there is a long continuing trend toward the use of scientific instead of traditional or intuitive methods. Operations research, value engineering, and PABLA (problem analysis by logical approach) are typical titles of such rationalized approaches. Creativity, however, cannot be rationalized. The ability to take the important and unexpected step that opens up new solutions remains in mechanical engineering, as elsewhere, largely a personal and spontaneous characteristic.

Branches of mechanical engineering:

Development of machines for the production of goods

The high standard of living in the developed countries owes much to mechanical engineering. The mechanical engineer invents machines to produce goods and develops machine tools of increasing accuracy and complexity to build the machines.

The principal lines of development of machinery have been an increase in the speed of operation to obtain high rates of production, improvement in accuracy to obtain quality and economy in the product, and minimization of operating costs. These three requirements have led to the evolution of complex control systems.

The most successful production machinery is that in which the mechanical design of the machine is closely integrated with the control system. A modern transfer (conveyor) line for the manufacture of automobile engines is a good example of the mechanization of a complex series of manufacturing processes. Developments are in hand to automate production machinery further, using computers to store and process the vast amount of data required for manufacturing a variety of components with a small number of versatile machine tools.

Development of machines for the production of power

The steam engine provided the first practical means of generating power from heat to augment the old sources of power from muscle, wind, and water. One of the first challenges to the new profession of mechanical engineering was to increase thermal efficiencies and power; this was done principally by the development of the steam turbine and associated large steam boilers. The 20th century has witnessed a continued rapid growth in the power output of turbines for driving electric generators, together with a steady increase in thermal efficiency and reduction in capital cost per kilowatt of large power stations. Finally, mechanical engineers acquired the resource of nuclear energy, whose application has demanded an exceptional standard of reliability and safety involving the solution of entirely new problems.

The mechanical engineer is also responsible for the much smaller internal combustion engines, both reciprocating (gasoline and diesel) and rotary (gas-turbine and Wankel) engines, with their widespread transport applications. In the transportation field generally, in air and space as well as on land and sea, the mechanical engineer has created the equipment and the power plant, collaborating increasingly with the electrical engineer, especially in the development of suitable control systems.

Development of military weapons

The skills applied to war by the mechanical engineer are similar to those required in civilian applications, though the purpose is to enhance destructive power rather than to raise creative efficiency. The demands of war have channeled huge resources into technical fields, however, and led to developments that have profound benefits in peace. Jet aircraft and nuclear reactors are notable examples.

Environmental control

The earliest efforts of mechanical engineers were aimed at controlling the human environment by draining and irrigating land and by ventilating mines. Refrigeration and air conditioning are examples of the use of modern mechanical devices to control the environment.

Many of the products of mechanical engineering, together with technological developments in other fields, give rise to noise, the pollution of water and air, and the dereliction of land and scenery. The rate of production, both of goods and power, is rising so rapidly that regeneration by natural forces can no longer keep pace. A rapidly growing field for mechanical engineers and others is environmental control, comprising the development of machines and processes that will produce fewer pollutants and of new equipment and techniques that can reduce or remove the pollution already generated.

#1979 2023-11-30 18:12:35

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

1981) Civil Engineering

Gist

Civil engineering is a professional engineering discipline that deals with the design, construction, and maintenance of the physical and naturally built environment, including public works such as roads, bridges, canals, dams, airports, sewage systems, pipelines, structural components of buildings, and railways.

Summary

Civil engineering is traditionally broken into a number of sub-disciplines. It is considered the second-oldest engineering discipline after military engineering, and it is defined to distinguish non-military engineering from military engineering. Civil engineering can take place in the public sector from municipal public works departments through to federal government agencies, and in the private sector from locally based firms to global Fortune 500 companies.

Education

Civil engineers typically possess an academic degree in civil engineering. The length of study is three to five years, and the completed degree is designated as a bachelor of technology or a bachelor of engineering. The curriculum generally includes classes in physics, mathematics, project management, design and specific topics in civil engineering. After taking basic courses in most sub-disciplines of civil engineering, students move on to specialize in one or more sub-disciplines at advanced levels. While an undergraduate degree (BEng/BSc) normally provides successful students with an industry-accredited qualification, some academic institutions offer post-graduate degrees (MEng/MSc), which allow students to further specialize in their particular area of interest.

Practicing engineers

In most countries, a bachelor's degree in engineering represents the first step towards professional certification, and a professional body certifies the degree program. After completing a certified degree program, the engineer must satisfy a range of requirements including work experience and exam requirements before being certified. Once certified, the engineer is designated as a professional engineer (in the United States, Canada and South Africa), a chartered engineer (in most Commonwealth countries), a chartered professional engineer (in Australia and New Zealand), or a European engineer (in most countries of the European Union). There are international agreements between relevant professional bodies to allow engineers to practice across national borders.

The benefits of certification vary depending upon location. For example, in the United States and Canada, "only a licensed professional engineer may prepare, sign and seal, and submit engineering plans and drawings to a public authority for approval, or seal engineering work for public and private clients." This requirement is enforced under provincial law such as the Engineers Act in Quebec. No such legislation has been enacted in other countries including the United Kingdom. In Australia, state licensing of engineers is limited to the state of Queensland. Almost all certifying bodies maintain a code of ethics which all members must abide by.

Engineers must obey contract law in their contractual relationships with other parties. In cases where an engineer's work fails, they may be subject to the law of tort of negligence, and in extreme cases, criminal charges. An engineer's work must also comply with numerous other rules and regulations such as building codes and environmental law.

Details

Civil engineering is the profession of designing and executing structural works that serve the general public, such as dams, bridges, aqueducts, canals, highways, power plants, sewerage systems, and other infrastructure. The term was first used in the 18th century to distinguish the newly recognized profession from military engineering, until then preeminent. From earliest times, however, engineers have engaged in peaceful activities, and many of the civil engineering works of ancient and medieval times—such as the Roman public baths, roads, bridges, and aqueducts; the Flemish canals; the Dutch sea defenses; the French Gothic cathedrals; and many other monuments—reveal a history of inventive genius and persistent experimentation.

History

The beginnings of civil engineering as a separate discipline may be seen in the foundation in France in 1716 of the Bridge and Highway Corps, out of which in 1747 grew the École Nationale des Ponts et Chaussées (“National School of Bridges and Highways”). Its teachers wrote books that became standard works on the mechanics of materials, machines, and hydraulics, and leading British engineers learned French to read them. As design and calculation replaced rule of thumb and empirical formulas, and as expert knowledge was codified and formulated, the nonmilitary engineer moved to the front of the stage. Talented, if often self-taught, craftsmen, stonemasons, millwrights, toolmakers, and instrument makers became civil engineers. In Britain, James Brindley began as a millwright and became the foremost canal builder of the century; John Rennie was a millwright’s apprentice who eventually built the new London Bridge; Thomas Telford, a stonemason, became Britain’s leading road builder.

John Smeaton, the first man to call himself a civil engineer, began as an instrument maker. His design of Eddystone Lighthouse (1756–59), with its interlocking masonry, was based on a craftsman’s experience. Smeaton’s work was backed by thorough research, and his services were much in demand. In 1771 he founded the Society of Civil Engineers (now known as the Smeatonian Society). Its object was to bring together experienced engineers, entrepreneurs, and lawyers to promote the building of large public works, such as canals (and later railways), and to secure the parliamentary powers necessary to execute their schemes. Their meetings were held during parliamentary sessions; the society follows this custom to this day.

The École Polytechnique was founded in Paris in 1794, and the Bauakademie was started in Berlin in 1799, but no such schools existed in Great Britain for another two decades. It was this lack of opportunity for scientific study and for the exchange of experiences that led a group of young men in 1818 to found the Institution of Civil Engineers. The founders were keen to learn from one another and from their elders, and in 1820 they invited Thomas Telford, by then the dean of British civil engineers, to be their first president. There were similar developments elsewhere. By the mid-19th century there were civil engineering societies in many European countries and the United States, and the following century produced similar institutions in almost every country in the world.

Formal education in engineering science became widely available as other countries followed the lead of France and Germany. In Great Britain the universities, traditionally seats of classical learning, were reluctant to embrace the new disciplines. University College, London, founded in 1826, provided a broad range of academic studies and offered a course in mechanical philosophy. King’s College, London, first taught civil engineering in 1838, and in 1840 Queen Victoria founded the first chair of civil engineering and mechanics at the University of Glasgow, Scotland. Rensselaer Polytechnic Institute, founded in 1824, offered the first courses in civil engineering in the United States. The number of universities throughout the world with engineering faculties, including civil engineering, increased rapidly in the 19th and early 20th centuries. Civil engineering today is taught in universities across the world.

Civil engineering functions

The functions of the civil engineer can be divided into three categories: those performed before construction (feasibility studies, site investigations, and design), those performed during construction (dealing with clients, consulting engineers, and contractors), and those performed after construction (maintenance and research).

Feasibility studies

No major project today is started without an extensive study of the objective and without preliminary studies of possible plans leading to a recommended scheme, perhaps with alternatives. Feasibility studies may cover alternative methods—e.g., bridge versus tunnel, in the case of a water crossing—or, once the method is decided, the choice of route. Both economic and engineering problems must be considered.

Site investigations

A preliminary site investigation is part of the feasibility study, but once a plan has been adopted a more extensive investigation is usually imperative. Money spent in a rigorous study of ground and substructure may save large sums later in remedial works or in changes made necessary in constructional methods.

Since the load-bearing qualities and stability of the ground are such important factors in any large-scale construction, it is surprising that a serious study of soil mechanics did not develop until the mid-1930s. Karl von Terzaghi, the chief founder of the science, gives the date of its birth as 1936, when the First International Conference on Soil Mechanics and Foundation Engineering was held at Harvard University and an international society was formed. Today there are specialist societies and journals in many countries, and most universities that have a civil engineering faculty have courses in soil mechanics.

Design

The design of engineering works may require the application of design theory from many fields, such as hydraulics, thermodynamics, or nuclear physics. Research in structural analysis and the technology of materials has opened the way for more rational designs, new design concepts, and greater economy of materials. The theory of structures and the study of materials have advanced together as more and more refined stress analysis of structures and systematic testing has been done. Modern designers not only have advanced theories and readily available design data but can also have their structural designs rigorously analyzed by computers.

Construction

The promotion of civil engineering works may be initiated by a private client, but most work is undertaken for large corporations, government authorities, and public boards and authorities. Many of these have their own engineering staffs, but for large specialized projects it is usual to employ consulting engineers.

The consulting engineer may be required first to undertake feasibility studies, then to recommend a scheme and quote an approximate cost. The engineer is responsible for the design of the works, supplying specifications, drawings, and legal documents in sufficient detail to seek competitive tender prices. The engineer must compare quotations and recommend acceptance of one of them. Although the engineer is not a party to the contract, the engineer’s duties are defined in it; the engineer’s staff must supervise the construction and the engineer must certify completion of the work. The engineer’s actions must be consistent with duty to the client; the professional organizations exercise disciplinary control over professional conduct. The consulting engineer’s senior representative on the site is the resident engineer.

A phenomenon of recent years has been the turnkey or package contract, in which the contractor undertakes to finance, design, specify, construct, and commission a project in its entirety. In this case, the consulting engineer is engaged by the contractor rather than by the client.

The contractor is usually an incorporated company, which secures the contract on the basis of the consulting engineer’s specification and general drawings. The consulting engineer must agree to any variations introduced and must approve the detailed drawings.

Maintenance

The contractor maintains the works to the satisfaction of the consulting engineer. Responsibility for maintenance extends to ancillary and temporary works where these form part of the overall construction. After construction a period of maintenance is undertaken by the contractor, and the payment of the final installment of the contract price is held back until released by the consulting engineer. Central and local government engineering and public works departments are concerned primarily with maintenance, for which they employ direct labour.

Research

Research in the civil engineering field is undertaken by government agencies, industrial foundations, the universities, and other institutions. Most countries have government-controlled agencies, such as the United States Bureau of Standards and the National Physical Laboratory of Great Britain, involved in a broad spectrum of research, and establishments in building research, roads and highways, hydraulic research, water pollution, and other areas. Many are government-aided but depend partly on income from research work promoted by industry.

Branches of civil engineering

In 1828 Thomas Tredgold of England wrote:

The most important object of Civil Engineering is to improve the means of production and of traffic in states, both for external and internal trade. It is applied in the construction and management of roads, bridges, railroads, aqueducts, canals, river navigation, docks and storehouses, for the convenience of internal intercourse and exchange; and in the construction of ports, harbours, moles, breakwaters and lighthouses; and in the navigation by artificial power for the purposes of commerce.

It is applied to the protection of property where natural powers are the sources of injury, as by embankments for the defense of tracts of country from the encroachments of the sea, or the overflowing of rivers; it also directs the means of applying streams and rivers to use, either as powers to work machines, or as supplies for the use of cities and towns, or for irrigation; as well as the means of removing noxious accumulations, as by the drainage of towns and districts to . . . secure the public health.

A modern description would include the production and distribution of energy, the development of aircraft and airports, the construction of chemical process plants and nuclear power stations, and water desalination. These aspects of civil engineering may be considered under the following headings: construction, transportation, maritime and hydraulic engineering, power, and public health.

Construction

Almost all civil engineering contracts include some element of construction work. The development of steel and concrete as building materials had the effect of placing design more in the hands of the civil engineer than the architect. The engineer’s analysis of a building problem, based on function and economics, determines the building’s structural design.

Transportation

Roman roads and bridges were products of military engineering, but the pavements of McAdam and the bridges of Perronet were the work of the civil engineer. So were the canals of the 18th century and the railways of the 19th, which, by providing bulk transport with speed and economy, lent a powerful impetus to the Industrial Revolution. The civil engineer today is concerned with an even larger transportation field—e.g., traffic studies, design of systems for road, rail, and air, and construction including pavements, embankments, bridges, and tunnels.

Maritime and hydraulic engineering

Harbour construction and shipbuilding are ancient arts. For many developing countries today the establishment of a large, efficient harbour is an early imperative, to serve as the inlet for industrial plant and needed raw materials and the outlet for finished goods. In developed countries the expansion of world trade, the use of larger ships, and the increase in total tonnage call for more rapid and efficient handling. Deeper berths and alongside-handling equipment (for example, for ore) and navigation improvements are the responsibility of the civil engineer.

The development of water supplies was a feature of the earliest civilizations, and the demand for water continues to rise today. In developed countries the demand is for industrial and domestic consumption, but in many parts of the world—e.g., the Indus basin—vast schemes are under construction, mainly for irrigation to help satisfy the food demand, and are often combined with hydroelectric power generation to promote industrial development.

Dams today are among the largest construction works, and design development is promoted by bodies like the International Commission on Large Dams. The design of large impounding dams in places with population centres close by requires the utmost in safety engineering, with emphasis on soil mechanics and stress analysis. Most governments exercise statutory control of engineers qualified to design and inspect dams.

Power

Civil engineers have always played an important part in mining for coal and metals; the driving of tunnels is a task common to many branches of civil engineering. In the 20th century the design and construction of power plants advanced with the rapid rise in demand for electric power, and nuclear power stations added a whole new field of design and construction, involving prestressed concrete pressure vessels for the reactor.

The exploitation of oil fields and the discoveries of natural gas in significant quantities have initiated a radical change in gas production. Shipment in liquid form from the Sahara and piping from the bed of the North Sea have been among the novel developments. Vast pipelines have also been constructed in Venezuela and across the Canadian-U.S. border.

In the late 20th and early 21st centuries, demand for renewable energy increased as a climate-friendly alternative to fossil fuel use. Civil engineers have developed and installed vast solar and wind arrays in places like California, the United Kingdom, and China, and innumerable smaller constructions have been built around the world, both on land and at sea. Other sources of renewable energy include tidal and geothermal power, though their use is more geographically limited.

Public health

Drainage and liquid-waste disposal are closely associated with antipollution measures and the re-use of water. The urban development of parts of water catchment areas can alter the nature of runoff, and the training and regulation of rivers produce changes in the pattern of events, resulting in floods and the need for flood prevention and control.

[Figure: Two methods of constructing a sanitary landfill.]

Modern civilization has created problems of solid-waste disposal from the manufacture of durable goods, such as automobiles and refrigerators, produced in large numbers with a limited life, to the small package, previously disposable, now often indestructible. The civil engineer plays an important role in the preservation of the environment, principally through design of works to enhance rather than to damage or pollute.

It appears to me that if one wants to make progress in mathematics, one should study the masters and not the pupils. - Niels Henrik Abel.

Nothing is better than reading and gaining more and more knowledge - Stephen William Hawking.

#1980 2023-12-01 01:09:24

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

1982) Lithium-ion Batteries

Gist

Lithium-ion batteries have revolutionized our everyday lives, laying the foundations for a wireless, interconnected, and fossil-fuel-free society. Their potential is, however, yet to be reached. It is projected that between 2022 and 2030, the global demand for lithium-ion batteries will increase almost seven-fold, reaching 4.7 terawatt-hours in 2030. Much of this growth can be attributed to the rising popularity of electric vehicles, which predominantly rely on lithium-ion batteries for power.
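As a rough check on the projection above, the short sketch below works out what it implies for the 2022 starting point and for the average yearly growth. It treats "almost seven-fold" as a factor of exactly 7 and assumes smooth compound growth over the eight years; both are simplifying assumptions, and the variable names are only illustrative.

```python
# Figures quoted in the Gist; the factor of 7 is an approximation of "almost seven-fold".
demand_2030_twh = 4.7
growth_factor = 7.0
years = 2030 - 2022

# Demand in 2022 implied by the 2030 figure and the growth factor.
implied_2022_twh = demand_2030_twh / growth_factor

# Constant yearly growth rate that would produce the same overall factor.
annual_growth = growth_factor ** (1 / years) - 1

print(f"Implied 2022 demand: about {implied_2022_twh:.2f} TWh")
print(f"Implied average growth: about {annual_growth:.1%} per year")
```

Under these assumptions the starting demand comes out at roughly two thirds of a terawatt-hour, with growth of a little over a quarter per year.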

Summary

What is a lithium-ion battery?

Lithium-ion is the most popular rechargeable battery chemistry used today. Lithium-ion batteries power the devices we use every day, like our mobile phones and electric vehicles.

Lithium-ion batteries consist of single or multiple lithium-ion cells, along with a protective circuit board. They are referred to as batteries once the cell, or cells, are installed inside a device with the protective circuit board.

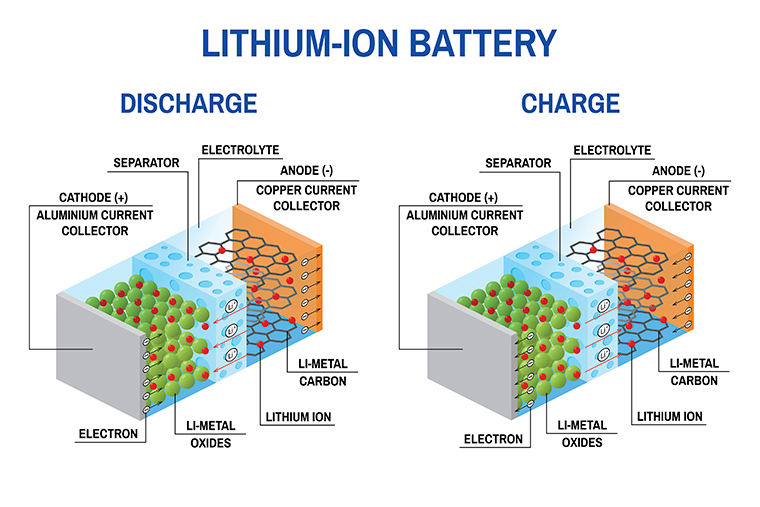

What are the components of a lithium-ion cell?

Electrodes: The positively and negatively charged ends of a cell, attached to the current collectors

Anode: The negative electrode

Cathode: The positive electrode

Electrolyte: A liquid or gel that conducts electricity

Current collectors: Conductive foils at each electrode of the battery that are connected to the terminals of the cell. The cell terminals transmit the electric current between the battery, the device and the energy source that powers the battery

Separator: A porous polymeric film that separates the electrodes while enabling the exchange of lithium ions from one side to the other

How does a lithium-ion cell work?

In a lithium-ion battery, lithium ions (Li+) move between the cathode and anode internally. Electrons move in the opposite direction in the external circuit. This migration is the reason the battery powers the device—because it creates the electrical current.

While the battery is discharging, the anode releases lithium ions to the cathode, generating a flow of electrons that helps to power the relevant device.

When the battery is charging, the opposite occurs: lithium ions are released by the cathode and received by the anode.

Details

A lithium-ion or Li-ion battery is a type of rechargeable battery which uses the reversible intercalation of Li+ ions into electronically conducting solids to store energy. In comparison with other rechargeable batteries, Li-ion batteries are characterized by a higher specific energy, higher energy density, higher energy efficiency, longer cycle life and longer calendar life. Also noteworthy is a dramatic improvement in lithium-ion battery properties after their market introduction in 1991: within the next 30 years their volumetric energy density increased threefold, while their cost dropped tenfold.

The invention and commercialization of Li-ion batteries is considered to have had one of the largest societal impacts of any technology in human history, as was recognized by the 2019 Nobel Prize in Chemistry. More specifically, Li-ion batteries enabled portable consumer electronics, laptop computers, cellular phones and electric cars, in what has been called the e-mobility revolution. They also see significant use in grid-scale energy storage, as well as in military and aerospace applications.

Although many thousands of different materials have been investigated for use in lithium-ion batteries, the chemistry space that has actually made it into commercial applications is extremely small. All commercial Li-ion cells use intercalation compounds as active materials. The anode (or negative electrode) is usually graphite, although silicon is often mixed in to increase the capacity. The electrolyte is usually lithium hexafluorophosphate dissolved in a mixture of organic carbonates. A number of different cathode materials are used, such as LiCoO2, LiFePO4 and lithium nickel manganese cobalt oxides.

Lithium-ion cells can be manufactured to optimize energy or power density. Handheld electronics mostly use lithium polymer batteries (with a polymer gel as electrolyte), a lithium cobalt oxide (LiCoO2) cathode material, and a graphite anode, which together offer high energy density. Lithium iron phosphate (LiFePO4), lithium manganese oxide (LiMn2O4 spinel, or Li2MnO3-based lithium rich layered materials, LMR-NMC), and lithium nickel manganese cobalt oxide (LiNiMnCoO2 or NMC) may offer longer life and a higher discharge rate. NMC and its derivatives are widely used in the electrification of transport, one of the main technologies (combined with renewable energy) for reducing greenhouse gas emissions from vehicles.

M. Stanley Whittingham conceived intercalation electrodes in the 1970s and created the first rechargeable lithium-ion battery, based on a titanium disulfide cathode and a lithium-aluminum anode, although it suffered from safety problems and was never commercialized. John Goodenough expanded on this work in 1980 by using lithium cobalt oxide as a cathode. The first prototype of the modern Li-ion battery, which uses a carbonaceous anode rather than lithium metal, was developed by Akira Yoshino in 1985 and commercialized by a Sony and Asahi Kasei team led by Yoshio Nishi in 1991. M. Stanley Whittingham, John Goodenough and Akira Yoshino were awarded the 2019 Nobel Prize in Chemistry for their contributions to the development of lithium-ion batteries.

Lithium-ion batteries can be a safety hazard if not properly engineered and manufactured, because cells contain flammable electrolytes and, if damaged or incorrectly charged, can cause explosions and fires. Much progress has been made in the development and manufacturing of safe lithium-ion batteries. All-solid-state lithium-ion batteries are being developed to eliminate the flammable electrolyte. Improperly recycled batteries can create toxic waste, especially from toxic metals, and are at risk of fire. Moreover, both lithium and other key strategic minerals used in batteries raise significant issues at extraction: lithium extraction is water intensive, often in arid regions, and other minerals, such as cobalt, are often conflict minerals. These environmental issues have encouraged some researchers to improve mineral efficiency and to explore alternatives such as iron-air batteries.

Research areas for lithium-ion batteries include extending lifetime, increasing energy density, improving safety, reducing cost, and increasing charging speed, among others. Because the organic solvents used in the typical electrolyte are flammable and volatile, research has been under way on non-flammable electrolytes as a pathway to increased safety. Strategies include aqueous lithium-ion batteries, ceramic solid electrolytes, polymer electrolytes, ionic liquids, and heavily fluorinated systems.

History

Research on rechargeable Li-ion batteries dates to the 1960s; one of the earliest examples is a CuF2/Li battery developed by NASA in 1965. The breakthrough that produced the earliest form of the modern Li-ion battery was made by British chemist M. Stanley Whittingham in 1974, who first used titanium disulfide (TiS2) as a cathode material, which has a layered structure that can take in lithium ions without significant changes to its crystal structure. Exxon tried to commercialize this battery in the late 1970s, but found the synthesis expensive and complex, as TiS2 is sensitive to moisture and releases toxic H2S gas on contact with water. More prohibitively, the batteries were also prone to spontaneously catch fire due to the presence of metallic lithium in the cells. For this, and other reasons, Exxon discontinued the development of Whittingham's lithium-titanium disulfide battery.

In 1980, working in separate groups, Ned A. Godshall et al. and, shortly thereafter, Koichi Mizushima and John B. Goodenough tested a range of alternative materials and replaced TiS2 with lithium cobalt oxide (LiCoO2, or LCO), which has a similar layered structure but offers a higher voltage and is much more stable in air. This material would later be used in the first commercial Li-ion battery, although it did not, on its own, resolve the persistent issue of flammability.

These early attempts to develop rechargeable Li-ion batteries used lithium metal anodes, which were ultimately abandoned due to safety concerns, as lithium metal is unstable and prone to dendrite formation, which can cause short-circuiting. The eventual solution was to use an intercalation anode, similar to that used for the cathode, which prevents the formation of lithium metal during battery charging. A variety of anode materials were studied.

In 1980 Rachid Yazami demonstrated reversible electrochemical intercalation of lithium in graphite, and invented the lithium graphite electrode (anode). Yazami's work was limited to a solid electrolyte (polyethylene oxide), because the liquid solvents tested by him and by earlier researchers co-intercalated with Li+ ions into graphite, resulting in the electrode crumbling and a short cycle life.

In 1985, Akira Yoshino at Asahi Kasei Corporation discovered that petroleum coke, a less graphitized form of carbon, can reversibly intercalate Li-ions at a low potential of ~0.5 V relative to Li+/Li without structural degradation. Its structural stability originates from the amorphous carbon regions in petroleum coke serving as covalent joints to pin the layers together. Although the amorphous nature of petroleum coke limits its capacity compared to graphite, it became the first commercial intercalation anode for Li-ion batteries owing to its cycling stability.

In 1987, Akira Yoshino patented what would become the first commercial lithium-ion battery using an anode of "soft carbon" (a charcoal-like material) along with Goodenough's previously reported LiCoO2 cathode and a carbonate ester-based electrolyte. This battery is assembled in a discharged state, which makes its manufacturing safer and cheaper. In 1991, using Yoshino's design, Sony began producing and selling the world's first rechargeable lithium-ion batteries. The following year, a joint venture between Toshiba and Asahi Kasei Co. also released its lithium-ion battery.

Significant improvements in energy density were achieved in the 1990s by replacing the soft carbon anode first with hard carbon and later with graphite, a concept originally proposed by Jürgen Otto Besenhard in 1974 but considered unfeasible due to unresolved incompatibilities with the electrolytes then in use. In 1990 Jeff Dahn and two colleagues at Dalhousie University (Canada) reported reversible intercalation of lithium ions into graphite in the presence of ethylene carbonate solvent (which is solid at room temperature and is mixed with other solvents to make a liquid), thus finding the final piece of the puzzle leading to the modern lithium-ion battery.

In 2010, global lithium-ion battery production capacity was 20 gigawatt-hours. By 2016, it was 28 GWh, with 16.4 GWh in China. Global production capacity was 767 GWh in 2020, with China accounting for 75%. Production in 2021 is estimated by various sources to be between 200 and 600 GWh, and predictions for 2023 range from 400 to 1,100 GWh.

In 2012, John B. Goodenough, Rachid Yazami and Akira Yoshino received the IEEE Medal for Environmental and Safety Technologies for developing the lithium-ion battery; Goodenough, Whittingham, and Yoshino were awarded the 2019 Nobel Prize in Chemistry "for the development of lithium-ion batteries". Jeff Dahn received the ECS Battery Division Technology Award (2011) and the Yeager award from the International Battery Materials Association (2016).

In April 2023 CATL announced that it would begin scaled-up production of its semi-solid condensed matter battery, which offers a then-record 500 Wh/kg. These batteries use electrodes made from a gelled material, requiring fewer binding agents. This in turn shortens the manufacturing cycle. One potential application is in battery-powered airplanes. Another new development in lithium-ion batteries is flow batteries with redox-targeted solids, which use no binders or electron-conducting additives and allow for completely independent scaling of energy and power.

Design

Generally, the negative electrode of a conventional lithium-ion cell is graphite, a form of carbon. The positive electrode is typically a metal oxide. The electrolyte is a lithium salt in an organic solvent. The anode (negative electrode) and cathode (positive electrode) are prevented from shorting by a separator. The anode and cathode are separated from external electronics with a piece of metal called a current collector. The electrochemical roles of the electrodes reverse between anode and cathode, depending on the direction of current flow through the cell.

The most common commercially used anode is graphite, which in its fully lithiated state of LiC6 correlates to a maximal capacity of 1339 C/g (372 mAh/g). The cathode is generally one of three materials: a layered oxide (such as lithium cobalt oxide), a polyanion (such as lithium iron phosphate) or a spinel (such as lithium manganese oxide). More experimental materials include graphene-containing electrodes, although these remain far from commercially viable due to their high cost.
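The capacity quoted above for LiC6 can be reproduced from the Faraday constant and the molar mass of carbon. The sketch below assumes one lithium ion (and so one electron) is stored per six carbon atoms and that capacity is counted per gram of graphite alone, without the mass of the lithium; the function name and constant names are illustrative only.

```python
FARADAY_C_PER_MOL = 96485.332  # Faraday constant: charge of one mole of electrons, in coulombs
CARBON_G_PER_MOL = 12.011      # standard atomic weight of carbon

def graphite_capacity(carbons_per_li: int = 6) -> tuple[float, float]:
    """Return the theoretical capacity (C/g, mAh/g) of a LiC_n host, per gram of carbon."""
    host_molar_mass = carbons_per_li * CARBON_G_PER_MOL     # grams of carbon per mole of Li stored
    coulombs_per_gram = FARADAY_C_PER_MOL / host_molar_mass
    mah_per_gram = coulombs_per_gram / 3.6                  # 1 mAh = 3.6 C
    return coulombs_per_gram, mah_per_gram

c_per_g, mah_per_g = graphite_capacity()
print(f"{c_per_g:.0f} C/g, {mah_per_g:.0f} mAh/g")
```

Rounded to whole numbers, this gives the 1339 C/g and 372 mAh/g stated in the text.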

Lithium reacts vigorously with water to form lithium hydroxide (LiOH) and hydrogen gas. Thus, a non-aqueous electrolyte is typically used, and a sealed container rigidly excludes moisture from the battery pack. The non-aqueous electrolyte is typically a mixture of organic carbonates such as ethylene carbonate and propylene carbonate containing complexes of lithium ions. Ethylene carbonate is essential for making solid electrolyte interphase on the carbon anode, but since it is solid at room temperature, a liquid solvent (such as propylene carbonate or diethyl carbonate) is added.

The electrolyte salt is almost always lithium hexafluorophosphate (LiPF6), which combines good ionic conductivity with chemical and electrochemical stability. Hexafluorophosphate is essential for passivating the aluminum current collector used for the cathode. A titanium tab is ultrasonically welded to the aluminum current collector. Other salts like lithium perchlorate (LiClO4), lithium tetrafluoroborate (LiBF4), and lithium bis(trifluoromethanesulfonyl)imide (LiC2F6NO4S2) are frequently used in research in tab-less coin cells, but are not usable in larger format cells, often because they are not compatible with the aluminum current collector. Copper (with a spot-welded nickel tab) is used as the anode current collector.

Current collector design and surface treatments may take various forms: foil, mesh, foam (dealloyed), etched (wholly or selectively), and coated (with various materials) to improve electrical characteristics.

Depending on the choice of materials, the voltage, energy density, life, and safety of a lithium-ion cell can change dramatically. Current efforts explore novel architectures that use nanotechnology to improve performance. Areas of interest include nano-scale electrode materials and alternative electrode structures.

#1981 2023-12-02 00:14:31

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

1983) Electrical Engineering

Gist

One of the more recent branches of engineering, electrical engineering is concerned with the design, study and application of devices, equipment and systems that use electricity, electronics and electromagnetism.

Summary

Electrical engineering is an engineering discipline concerned with the study, design, and application of equipment, devices, and systems which use electricity, electronics, and electromagnetism. It emerged as an identifiable occupation in the latter half of the 19th century after the commercialization of the electric telegraph, the telephone, and electrical power generation, distribution, and use.

Electrical engineering is now divided into a wide range of different fields, including computer engineering, systems engineering, power engineering, telecommunications, radio-frequency engineering, signal processing, instrumentation, photovoltaic cells, electronics, and optics and photonics. Many of these disciplines overlap with other engineering branches, spanning a huge number of specializations including hardware engineering, power electronics, electromagnetics and waves, microwave engineering, nanotechnology, electrochemistry, renewable energies, mechatronics/control, and electrical materials science.

Electrical engineers typically hold a degree in electrical engineering or electronic engineering. Practising engineers may have professional certification and be members of a professional body or an international standards organization. These include the International Electrotechnical Commission (IEC), the Institute of Electrical and Electronics Engineers (IEEE) and the Institution of Engineering and Technology (IET, formerly the IEE).

Electrical engineers work in a very wide range of industries and the skills required are likewise variable. These range from circuit theory to the management skills of a project manager. The tools and equipment that an individual engineer may need are similarly variable, ranging from a simple voltmeter to sophisticated design and manufacturing software.

Details

Electrical engineering is one of the newer branches of engineering, and dates back to the late 19th century. It is the branch of engineering that deals with the technology of electricity. Electrical engineers work on a wide range of components, devices and systems, from tiny microchips to huge power station generators.

Early experiments with electricity included primitive batteries and static charges. However, the actual design, construction and manufacturing of useful devices and systems began with the application of Michael Faraday's Law of Induction, which essentially states that the voltage induced in a circuit is proportional to the rate of change of the magnetic flux through the circuit. This law underlies the basic principles of the electric generator, the electric motor and the transformer. The advent of the modern age is marked by the introduction of electricity to homes, businesses and industry, all of which were made possible by electrical engineers.
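
As a rough illustration of Faraday's law, the short Python sketch below estimates the average voltage induced in a coil when the magnetic flux through it changes over a time interval, using emf = -N × ΔΦ/Δt. The function name, the coil with 200 turns and the flux values are hypothetical, chosen only to make the arithmetic easy to follow; they do not come from the post.

```python
def induced_emf(turns, flux_start_wb, flux_end_wb, interval_s):
    """Average induced EMF in volts, from Faraday's law: emf = -N * dPhi/dt.

    turns         -- number of turns in the coil (N)
    flux_start_wb -- magnetic flux through one turn at the start, in webers
    flux_end_wb   -- magnetic flux through one turn at the end, in webers
    interval_s    -- time taken for the change, in seconds
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return -turns * (flux_end_wb - flux_start_wb) / interval_s


# Hypothetical example: a 200-turn coil whose flux rises
# from 0 Wb to 0.01 Wb in half a second.
emf = induced_emf(200, 0.0, 0.01, 0.5)
print(f"Average induced EMF: {emf:.2f} V")
```

The minus sign reflects Lenz's law: the induced voltage opposes the change in flux that produces it. Halving the interval doubles the magnitude of the result, which is the "rate of change" part of the law.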

Some of the most prominent pioneers in electrical engineering include Thomas Edison (electric light bulb), George Westinghouse (alternating current), Nikola Tesla (induction motor), Guglielmo Marconi (radio) and Philo T. Farnsworth (television). These innovators turned ideas and concepts about electricity into practical devices and systems that ushered in the modern age.

Since its early beginnings, the field of electrical engineering has grown and branched out into a number of specialized categories, including power generation and transmission systems, motors, batteries and control systems. Electrical engineering also includes electronics, which has itself branched into an even greater number of subcategories, such as radio frequency (RF) systems, telecommunications, remote sensing, signal processing, digital circuits, instrumentation, audio, video and optoelectronics.

The field of electronics was born with the invention of the thermionic valve, a diode vacuum tube, in 1904 by John Ambrose Fleming. The diode passes current in only one direction, so it acts as a rectifier; the triode, which followed within a few years, added a control grid that lets a small input signal control a much larger current, allowing the tube to work as an amplifier. The vacuum tube was the foundation of all electronics, including radio, television and radar, until the mid-20th century. It was largely supplanted by the transistor, which was developed in 1947 at AT&T's Bell Laboratories by William Shockley, John Bardeen and Walter Brattain, who received the 1956 Nobel Prize in Physics for the work.

What does an electrical engineer do?

"Electrical engineers design, develop, test and supervise the manufacturing of electrical equipment, such as electric motors, radar and navigation systems, communications systems and power generation equipment," states the U.S. Bureau of Labor Statistics. "Electronics engineers design and develop electronic equipment, such as broadcast and communications systems — from portable music players to global positioning systems (GPS)."

If it's a practical, real-world device that produces, conducts or uses electricity, in all likelihood, it was designed by an electrical engineer. Additionally, engineers may conduct or write the specifications for destructive or nondestructive testing of the performance, reliability and long-term durability of devices and components.

Today’s electrical engineers design electrical devices and systems using basic components such as conductors, coils, magnets, batteries, switches, resistors, capacitors, inductors, diodes and transistors. Nearly all electrical and electronic devices, from the generators at an electric power plant to the microprocessors in your phone, use these few basic components.

Critical skills needed in electrical engineering include an in-depth understanding of electrical and electronic theory, mathematics and materials. This knowledge allows engineers to design circuits that perform specific functions and meet requirements for safety, reliability and energy efficiency, and to predict how those circuits will behave before a hardware design is implemented. Sometimes, though, circuits are first built on "breadboards," or on prototype circuit boards made with computer numerical control (CNC) machines, so they can be tested before they are put into production.

Electrical engineers are increasingly relying on computer-aided design (CAD) systems to create schematics and lay out circuits. They also use computers to simulate how electrical devices and systems will function. Computer simulations can be used to model a national power grid or a microprocessor; therefore, proficiency with computers is essential for electrical engineers. In addition to speeding up the process of drafting schematics, printed circuit board (PCB) layouts and blueprints for electrical and electronic devices, CAD systems allow for quick and easy modifications of designs and rapid prototyping using CNC machines.
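
To give a feel for what simulating a circuit means at the smallest scale, here is a minimal Python sketch that steps a resistor-capacitor (RC) charging circuit forward in time and compares the result with the textbook formula V(t) = Vs × (1 − e^(−t/RC)). The function names and the component values (5 V, 1 kΩ, 1 µF) are assumptions made for illustration, and the forward-Euler method used here is a simple teaching choice, not what commercial simulators necessarily use.

```python
import math


def simulate_rc_charging(v_source, resistance_ohm, capacitance_f, t_end_s, steps):
    """Forward-Euler integration of dV/dt = (Vs - V) / (R * C), starting at V = 0."""
    dt = t_end_s / steps
    tau = resistance_ohm * capacitance_f  # time constant in seconds
    v = 0.0
    for _ in range(steps):
        v += dt * (v_source - v) / tau
    return v


def analytic_rc_charging(v_source, resistance_ohm, capacitance_f, t_s):
    """Closed-form capacitor voltage for the same circuit."""
    tau = resistance_ohm * capacitance_f
    return v_source * (1.0 - math.exp(-t_s / tau))


# Hypothetical values: 5 V source, 1 kilo-ohm resistor, 1 microfarad capacitor,
# observed after one time constant (1 millisecond).
simulated = simulate_rc_charging(5.0, 1_000.0, 1e-6, 1e-3, 10_000)
expected = analytic_rc_charging(5.0, 1_000.0, 1e-6, 1e-3)
print(f"simulated: {simulated:.4f} V, analytic: {expected:.4f} V")
```

After one time constant the capacitor reaches roughly 63 percent of the source voltage, and the two numbers should agree closely. Using fewer steps makes the simulated value drift away from the formula, which is the usual trade-off between speed and accuracy in numerical simulation.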

Electrical engineering jobs and salaries

Electrical and electronics engineers work primarily in research and development industries, engineering services firms, manufacturing and the federal government, according to the BLS. They generally work indoors, in offices, but they may have to visit sites to observe a problem or a piece of complex equipment, the BLS says.

Manufacturing industries that employ electrical engineers include automotive, marine, railroad, aerospace, defense, consumer electronics, commercial construction, lighting, computers and components, telecommunications and traffic control. Government institutions that employ electrical engineers include transportation departments, national laboratories and the military.

Most electrical engineering jobs require at least a bachelor's degree in engineering. Many employers, particularly those that offer engineering consulting services, also require state certification as a Professional Engineer. Additionally, many employers require certification from the Institute of Electrical and Electronics Engineers (IEEE) or the Institution of Engineering and Technology (IET). A master's degree is often required for promotion to management, and ongoing education and training are needed to keep up with advances in technology, testing equipment, computer hardware and software, and government regulations.

As of July 2014, the salary range for a newly graduated electrical engineer with a bachelor's degree was $55,570 to $73,908, according to a source. The range for a mid-level engineer with a master's degree and five to 10 years of experience was $74,007 to $108,640, and the range for a senior engineer with a master's or doctorate and more than 15 years of experience was $97,434 to $138,296. Many experienced engineers with advanced degrees are promoted to management positions or start their own businesses where they can earn even more.

The future of electrical engineering

Employment of electrical and electronics engineers is projected to grow by 4 percent between now and 2022, because of these professionals' "versatility in developing and applying emerging technologies," the BLS says.

The applications for these emerging technologies include studying red electrical flashes, called sprites, which hover above some thunderstorms. Victor Pasko, an electrical engineer at Penn State, and his colleagues have developed a model for how the strange lightning evolves and disappears.

Another electrical engineer, Andrea Alù, of the University of Texas at Austin, is studying sound waves and has developed a one-way sound machine. "I can listen to you, but you cannot detect me back; you cannot hear my presence," Alù told LiveScience in a 2014 article.

And Michel Maharbiz, an electrical engineer at the University of California, Berkeley, is exploring ways to communicate with the brain wirelessly.

The BLS states, "The rapid pace of technological innovation and development will likely drive demand for electrical and electronics engineers in research and development, an area in which engineering expertise will be needed to develop distribution systems related to new technologies."


#1982 2023-12-03 00:06:35

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,923

Re: Miscellany

1984) Electronic Engineering

Gist

Electronics engineering is that branch of electrical engineering concerned with the uses of the electromagnetic spectrum and with the application of such electronic devices as integrated circuits and transistors.

Summary

Electronic engineering is a sub-discipline of electrical engineering which emerged in the early 20th century and is distinguished by the additional use of active components such as semiconductor devices to amplify and control electric current flow. Previously, electrical engineering used only passive devices such as mechanical switches, resistors, inductors, and capacitors.

It covers fields such as: analog electronics, digital electronics, consumer electronics, embedded systems and power electronics. It is also involved in many related fields, for example solid-state physics, radio engineering, telecommunications, control systems, signal processing, systems engineering, computer engineering, instrumentation engineering, electric power control, photonics and robotics.

The Institute of Electrical and Electronics Engineers (IEEE) is one of the most important professional bodies for electronics engineers in the US; the equivalent body in the UK is the Institution of Engineering and Technology (IET). The International Electrotechnical Commission (IEC) publishes electrical standards including those for electronics engineering.

Details

Electronics engineering is a modern engineering discipline focused on the development of products and systems using electronic technology. It emerged as a discipline in the late-19th century as electronic broadcasting methods, including radio and television, became more widespread. The use of electronic technologies in World War II — such as sonar, radar and advanced weaponry — helped to further develop the field of electronics engineering.

Today, electronics engineering is the key force driving the growth of information technology, and many of the devices we use every day are the work of electronics engineers, such as smartphones, personal computers and Wi-Fi networks. The field is as diverse as its applications, as it comprises various subfields, like:

* Analog electronics engineering

* Radio-frequency engineering

* Software engineering

* Systems engineering

Additionally, a variety of industries apply electronics engineering principles to modernize their respective sectors, including but not limited to:

* Telecommunications

* Radio and television

* Utilities

* Health care

* Science

* Personal technology manufacturing

* Government and military

What do electronics engineers do?

Electronics engineers design, develop and oversee the production of electronic systems and products. Testing these products and their components is a key part of the process of making sure all electronics work efficiently, safely and reliably for personal and commercial use. The responsibilities of electronics engineers vary depending on their specialty and industry but usually include:

Planning electronics projects

Electronics engineers participate in the preliminary stages of any electronic product. During the planning process, they help to determine various factors relating to the product, such as:

* Appearance

* Overall cost

* Budget allocation

* Project length

While undertaking planning activities, electronics engineers also carefully consider the requirements of their employer, their client and any international, national and local safety guidelines governing their work.

Manufacturing electronic products

Electronics engineers not only develop plans but also follow them to manufacture electronic products and systems. Most electronics engineers work as part of a team, creating individual electronic components and then assembling them with others to make larger works.

This work requires both an understanding of and adherence to each project's specifications, along with international, national and local product and safety guidelines.

Testing and evaluating electronic products

After assembly, electronics engineers conduct final tests before the release of the product, assessing whether it operates as intended and adheres to specifications. They also note potential areas of improvement and evaluate proposed changes to determine whether they add enough value to justify the additional expenditure of resources. If approved, the changes get implemented before the product's release.

Coordinating with stakeholders