Math Is Fun Forum

#1151 2021-10-05 00:05:14

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1128) Harvard University

Harvard University, oldest institution of higher learning in the United States (founded 1636) and one of the nation’s most prestigious. It is one of the Ivy League schools. The main university campus lies along the Charles River in Cambridge, Massachusetts, a few miles west of downtown Boston. Harvard’s total enrollment is about 23,000.

Harvard’s history began when a college was established at New Towne, which was later renamed Cambridge for the English alma mater of some of the leading colonists. Classes began in the summer of 1638 with one master in a single frame house and a “college yard.” Harvard was named for a Puritan minister, John Harvard, who left the college his books and half of his estate.

At its inception Harvard was under church sponsorship, although it was not formally affiliated with any religious body. During its first two centuries the college was gradually liberated, first from clerical and later from political control, until in 1865 the university alumni began electing members of the governing board. During his long tenure as Harvard’s president (1869–1909), Charles W. Eliot made Harvard into an institution with national influence.

The alumni and faculty of Harvard have been closely associated with many areas of American intellectual and political development. By the end of the first decade of the 21st century, Harvard had educated seven U.S. presidents—John Adams, John Quincy Adams, Rutherford B. Hayes, Theodore Roosevelt, Franklin D. Roosevelt, John F. Kennedy, and Barack Obama—and a number of justices, cabinet officers, and congressional leaders. Literary figures among Harvard graduates include Ralph Waldo Emerson, Oliver Wendell Holmes, Henry David Thoreau, James Russell Lowell, Henry James, Henry Adams, T.S. Eliot, John Dos Passos, E.E. Cummings, Walter Lippmann, and Norman Mailer. Other notable intellectual figures who graduated from or taught at Harvard include the historians Francis Parkman, W.E.B. Du Bois, and Samuel Eliot Morison; the astronomer Benjamin Peirce; the chemist Wolcott Gibbs; and the naturalist Louis Agassiz. William James introduced the experimental study of psychology into the United States at Harvard in the 1870s.

Harvard’s undergraduate school, Harvard College, contains about one-third of the total student body. The core of the university’s teaching staff consists of the faculty of arts and sciences, which includes the graduate faculty of arts and sciences. The university has graduate or professional schools of medicine, law, business, divinity, education, government, dental medicine, design, and public health. The schools of law, medicine, and business are particularly prestigious. Among the advanced research institutions affiliated with Harvard are the Museum of Comparative Zoology (founded in 1859 by Agassiz), the Gray Herbarium, the Peabody Museum of Archaeology and Ethnology, the Arnold Arboretum, and the Fogg Art Museum. Also associated with the university are an astronomical observatory in Harvard, Massachusetts; the Dumbarton Oaks Research Library and Collection in Washington, D.C., a centre for Byzantine and pre-Columbian studies; and the Harvard-Yenching Institute in Cambridge for research on East and Southeast Asia. The Harvard University Library is one of the largest and most important university libraries in the world.

Radcliffe College, one of the Seven Sisters schools, evolved from informal instruction offered to individual women or small groups of women by Harvard University faculty in the 1870s. In 1879 a faculty group called the Harvard Annex made a full course of study available to women, despite resistance to coeducation from the university’s administration. Following unsuccessful efforts to have women admitted directly to degree programs at Harvard, the Annex, which had incorporated as the Society for the Collegiate Instruction of Women, chartered Radcliffe College in 1894. The college was named for the colonial philanthropist Ann Radcliffe, who established the first scholarship fund at Harvard in 1643.

Until the 1960s Radcliffe operated as a coordinate college, drawing most of its instructors and other resources from Harvard. Radcliffe graduates, however, were not granted Harvard degrees until 1963. Diplomas from that time on were signed by the presidents of both Harvard and Radcliffe. Women undergraduates enrolled at Radcliffe were technically also enrolled at Harvard College, and instruction was coeducational.

Although its 1977 agreement with Harvard University called for the integration of select functions, Radcliffe College maintained a separate corporate identity for its property and endowments and continued to offer complementary educational and extracurricular programs for both undergraduate and graduate students, including career programs, a publishing course, and graduate-level workshops and seminars in women’s studies.

In 1999 Radcliffe and Harvard formally merged, and a new school, the Radcliffe Institute for Advanced Study at Harvard University, was established. The institute focuses on Radcliffe’s former fields of study and programs and also offers such new ones as nondegree educational programs and the study of women, gender, and society.

It appears to me that if one wants to make progress in mathematics, one should study the masters and not the pupils. - Niels Henrik Abel.

Nothing is better than reading and gaining more and more knowledge - Stephen William Hawking.

#1152 2021-10-07 00:59:34

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1129) University of Munich

University of Munich, in full Ludwig Maximilian University of Munich, German Ludwig-Maximilians-Universität München, autonomous coeducational institution of higher learning supported by the state of Bavaria in Germany. It was founded in 1472 at Ingolstadt by the duke of Bavaria, who modeled it after the University of Vienna. During the Protestant Reformation, Johann Eck made the university a centre of Roman Catholic opposition to Martin Luther. In 1799 schools of economics and political science were established, and the following year King Maximilian Joseph moved the school to Landshut, giving it the name Ludwig Maximilian. The dukes of Bavaria continued their strong support for the school, and in 1826 King Louis I moved it to Munich. A technical school with courses in agriculture and forestry was founded in 1868. The university’s faculty of Catholic theology continues to be influential, although a faculty of Protestant theology has been added. Affiliated with the University of Munich are more than 200 constituent institutes, seminars, and clinics.

Ludwig Maximilian University of Munich (also referred to as LMU or the University of Munich) is a public research university located in Munich, Germany.

The University of Munich is Germany's sixth-oldest university in continuous operation. Originally established in Ingolstadt in 1472 by Duke Ludwig IX of Bavaria-Landshut, the university was moved in 1800 to Landshut by King Maximilian I of Bavaria when Ingolstadt was threatened by the French, before being relocated to its present-day location in Munich in 1826 by King Ludwig I of Bavaria. In 1802, King Maximilian I of Bavaria officially named the university Ludwig-Maximilians-Universität, in honour of both himself and the university's original founder.

The University of Munich is associated with 43 Nobel laureates (as of October 2020). Among these were Wilhelm Röntgen, Max Planck, Werner Heisenberg, Otto Hahn and Thomas Mann. Pope Benedict XVI was also a student and professor at the university. Among its notable alumni, faculty and researchers are, inter alia, Rudolf Peierls, Josef Mengele, Richard Strauss, Walter Benjamin, Joseph Campbell, Muhammad Iqbal, Marie Stopes, Wolfgang Pauli, Bertolt Brecht, Max Horkheimer, Karl Loewenstein, Carl Schmitt, Gustav Radbruch, Ernst Cassirer, Ernst Bloch, and Konrad Adenauer. The LMU has recently been conferred the title of "University of Excellence" under the German Universities Excellence Initiative.

LMU is currently the second-largest university in Germany in terms of student population; in the winter semester of 2018/2019, the university had a total of 51,606 matriculated students. Of these, 9,424 were freshmen, while international students totalled 8,875, or approximately 17% of the student population. As for operating budget, in 2018 the university recorded a total of 734.9 million euros in funding without the university hospital; with the university hospital, total funding amounted to approximately 1.94 billion euros.
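The percentage quoted above can be reproduced from the enrollment figures in the paragraph. The following is only a minimal Python sketch; the variable and function names are made up for illustration, and the numbers are taken directly from the text.

```python
# Enrollment figures quoted for the winter semester of 2018/2019.
total_students = 51_606
international_students = 8_875
freshmen = 9_424


def share_percent(part: int, whole: int) -> float:
    """Return `part` as a percentage of `whole`."""
    return 100.0 * part / whole


international_share = share_percent(international_students, total_students)
freshman_share = share_percent(freshmen, total_students)

print(f"International students: {international_share:.1f}% of enrollment")
print(f"Freshmen: {freshman_share:.1f}% of enrollment")
```

The international share comes out a little above 17 percent, which agrees with the rounded figure in the text.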

#1153 2021-10-08 00:40:41

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1130) University of Oxford

University of Oxford, English autonomous institution of higher learning at Oxford, Oxfordshire, England, one of the world’s great universities. It lies along the upper course of the River Thames (called by Oxonians the Isis), 50 miles (80 km) north-northwest of London.

Sketchy evidence indicates that schools existed at Oxford by the early 12th century. By the end of that century, a university was well established, perhaps resulting from the barring of English students from the University of Paris around 1167. Oxford was modeled on the University of Paris, with initial faculties of theology, law, medicine, and the liberal arts.

In the 13th century the university gained added strength, particularly in theology, with the establishment of several religious orders, principally Dominicans and Franciscans, in the town of Oxford. The university had no buildings in its early years; lectures were given in hired halls or churches. The various colleges of Oxford were originally merely endowed boardinghouses for impoverished scholars. They were intended primarily for masters or bachelors of arts who needed financial assistance to enable them to continue study for a higher degree. The earliest of these colleges, University College, was founded in 1249. Balliol College was founded about 1263, and Merton College in 1264.

During the early history of Oxford, its reputation was based on theology and the liberal arts. But it also gave more-serious treatment to the physical sciences than did the University of Paris: Roger Bacon, after leaving Paris, conducted his scientific experiments and lectured at Oxford from 1247 to 1257. Bacon was one of several influential Franciscans at the university during the 13th and 14th centuries. Among the others were Duns Scotus and William of Ockham. John Wycliffe (c. 1330–84) spent most of his life as a resident Oxford doctor.

Beginning in the 13th century, the university gained charters from the crown, but the religious foundations in Oxford town were suppressed during the Protestant Reformation. In 1571 an act of Parliament led to the incorporation of the university. The university’s statutes were codified by its chancellor, Archbishop William Laud, in 1636. In the early 16th century, professorships began to be endowed. And in the latter part of the 17th century, interest in scientific studies increased substantially. During the Renaissance, Desiderius Erasmus carried the new learning to Oxford, and such scholars as William Grocyn, John Colet, and Sir Thomas More enhanced the university’s reputation. Since that time Oxford has traditionally held the highest reputation for scholarship and instruction in the classics, theology, and political science.

In the 19th century the university’s enrollment and its professorial staff were greatly expanded. The first women’s college at Oxford, Lady Margaret Hall, was founded in 1878, and women were first admitted to full membership in the university in 1920. In the 20th century Oxford’s curriculum was modernized. Science came to be taken much more seriously and professionally, and many new faculties were added, including ones for modern languages and economics. Postgraduate studies also expanded greatly in the 20th century.

Oxford houses two renowned scholarly institutions, the Bodleian Library and the Ashmolean Museum of Art and Archaeology, as well as the Museum of the History of Science (established 1924). The Oxford University Press, established in 1478, is one of the largest and most prestigious university publishers in the world.

Oxford has been associated with many of the greatest names in British history, from John Wesley and Cardinal Wolsey to Oscar Wilde and Sir Richard Burton to Cecil Rhodes and Sir Walter Raleigh. The astronomer Edmond Halley studied at Oxford, and the physicist Robert Boyle performed his most important research there. Prime ministers who studied at Oxford include William Pitt the Elder, George Canning, Sir Robert Peel, William Gladstone, Lord Salisbury, H.H. Asquith, Clement Attlee, Anthony Eden, Harold Macmillan, Edward Heath, Harold Wilson, and Margaret Thatcher. Among the many notable writers associated with the university are Lewis Carroll, C.S. Lewis, and J.R.R. Tolkien; the latter two were members of the Inklings, an informal Oxford literary group in the mid-20th century.

The colleges and collegial institutions of the University of Oxford include All Souls (1438), Balliol (1263–68), Brasenose (1509), Christ Church (1546), Corpus Christi (1517), Exeter (1314), Green (1979), Harris Manchester (founded 1786; inc. 1996), Hertford (founded 1740; inc. 1874), Jesus (1571), Keble (founded 1868; inc. 1870), Kellogg (1990), Lady Margaret Hall (founded 1878; inc. 1926), Linacre (1962), Lincoln (1427), Magdalen (1458), Mansfield (founded 1886; inc. 1995), Merton (1264), New (1379), Nuffield (founded 1937; inc. 1958), Oriel (1326), Pembroke (1624), Queen’s (1341), St. Anne’s (founded 1879; inc. 1952), St. Antony’s (1950), St. Catherine’s (1962), St. Cross (1965), St. Edmund Hall (1278), St. Hilda’s (founded 1893; inc. 1926), St. Hugh’s (founded 1886; inc. 1926), St. John’s (1555), St. Peter’s (founded 1929; inc. 1961), Somerville (founded 1879; inc. 1926), Templeton (founded 1965; inc. 1995), Trinity (1554–55), University (1249), Wadham (1612), Wolfson (founded 1966; inc. 1981), and Worcester (founded 1283; inc. 1714). Among the university’s private halls are Blackfriars (founded 1921; inc. 1994), Campion (founded 1896; inc. 1918), Greyfriars (founded 1910; inc. 1957), Regent’s Park College (founded 1810; inc. 1957), St. Benet’s (founded 1897; inc. 1918), and Wycliffe (founded 1877; inc. 1996).

#1154 2021-10-09 00:07:29

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1131) Tungsten

Tungsten (W), also called wolfram, chemical element, an exceptionally strong refractory metal of Group 6 (VIb) of the periodic table, used in steels to increase hardness and strength and in lamp filaments.

Tungsten metal was first isolated (1783) by the Spanish chemists and mineralogists Juan José and Fausto Elhuyar by charcoal reduction of the oxide (WO3) derived from the mineral wolframite. Earlier (1781) the Swedish chemist Carl Wilhelm Scheele had discovered tungstic acid in a mineral now known as scheelite, and his countryman Torbern Bergman concluded that a new metal could be prepared from the acid. The names tungsten and wolfram have been used for the metal since its discovery, though everywhere Jöns Jacob Berzelius’s symbol W prevails. In British and American usage, tungsten is preferred; in Germany and a number of other European countries, wolfram is accepted.

Element Properties

atomic number : 74

atomic weight : 183.85

melting point : 3,410 °C (6,170 °F)

boiling point : 5,660 °C (10,220 °F)

density : 19.3 grams per cubic centimetre at 20 °C (68 °F)

oxidation states : +2, +3, +4, +5, +6

Occurrence, Properties, And Uses

The amount of tungsten in Earth’s crust is estimated to be 1.5 parts per million, or about 1.5 grams per ton of rock. China is the dominant producer of tungsten; in 2016 it produced over 80 percent of total tungsten mined, and it contained nearly two-thirds of the world’s reserves. Vietnam, Russia, Canada, and Bolivia produce most of the remainder. Tungsten does not occur as a free metal. It is about as abundant as tin or as molybdenum, which it resembles, and half as plentiful as uranium. Although tungsten occurs as tungstenite—tungsten disulfide, WS2—the most important ores in this case are the tungstates such as scheelite (calcium tungstate, CaWO4), stolzite (lead tungstate, PbWO4), and wolframite—a solid solution or a mixture or both of the isomorphous substances ferrous tungstate (FeWO4) and manganous tungstate (MnWO4).

For tungsten the ores are concentrated by magnetic and mechanical processes, and the concentrate is then fused with alkali. The crude melts are leached with water to give solutions of sodium tungstate, from which hydrous tungsten trioxide is precipitated upon acidification, and the oxide is then dried and reduced to metal with hydrogen.

Tungsten is rather resistant to attack by acids, except for mixtures of concentrated nitric and hydrofluoric acids, and it can be attacked rapidly by alkaline oxidizing melts, such as fused mixtures of potassium nitrate and sodium hydroxide or sodium peroxide; aqueous alkalies, however, are without effect. It is inert to oxygen at normal temperature but combines with it readily at red heat, to give the trioxide, and is attacked by fluorine at room temperature, to give the hexafluoride.

Tungsten metal has a nickel-white to grayish lustre. Among metals it has the highest melting point, at 3,410 °C (6,170 °F), the highest tensile strength at temperatures of more than 1,650 °C (3,002 °F), and the lowest coefficient of linear thermal expansion (4.43 × 10⁻⁶ per °C at 20 °C [68 °F]). Tungsten is ordinarily brittle at room temperature. Pure tungsten can, however, be made ductile by mechanical working at high temperatures and can then be drawn into very fine wire. Tungsten was first commercially employed as a lamp filament material and thereafter used in many electrical and electronic applications. It is used in the form of tungsten carbide for very hard and tough dies, tools, gauges, and bits. Much tungsten goes into the production of tungsten steels, and some has been used in the aerospace industry to fabricate rocket-engine nozzle throats and leading-edge reentry surfaces.
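The Celsius and Fahrenheit pairs quoted for tungsten can be checked with the usual conversion F = C × 9/5 + 32. The Python sketch below is only an illustration; the function name is hypothetical and the Celsius values are the ones given in the text.

```python
def celsius_to_fahrenheit(celsius: float) -> float:
    """Convert a temperature from degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0


# Celsius values quoted for tungsten in the text above.
quoted_temperatures_c = {
    "melting point": 3410,
    "boiling point": 5660,
    "tensile-strength threshold": 1650,
    "reference temperature": 20,
}

for label, celsius in quoted_temperatures_c.items():
    fahrenheit = celsius_to_fahrenheit(celsius)
    print(f"{label}: {celsius} °C = {fahrenheit:.0f} °F")
```

With this formula the melting point of 3,410 °C corresponds to 6,170 °F and the 1,650 °C threshold to 3,002 °F.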

Natural tungsten is a mixture of five stable isotopes: tungsten-180 (0.12 percent), tungsten-182 (26.50 percent), tungsten-183 (14.31 percent), tungsten-184 (30.64 percent), and tungsten-186 (28.43 percent). Tungsten crystals are isometric and, by X-ray analysis, are seen to be body-centred cubic.
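The isotope percentages above can be used for a rough check of the atomic weight listed under Element Properties (183.85). The sketch below is an approximation only: it assumes each isotope's mass equals its mass number, which slightly overestimates the true isotopic masses, and the names used are hypothetical.

```python
# Natural abundances (percent) of the stable tungsten isotopes, keyed by
# mass number, as quoted in the text.
tungsten_abundance_percent = {
    180: 0.12,
    182: 26.50,
    183: 14.31,
    184: 30.64,
    186: 28.43,
}


def approximate_atomic_weight(abundance_percent: dict) -> float:
    """Abundance-weighted mean of the mass numbers.

    Assumption: the mass number stands in for the exact isotopic mass.
    """
    total = sum(abundance_percent.values())
    weighted = sum(mass * pct for mass, pct in abundance_percent.items())
    return weighted / total


total_percent = sum(tungsten_abundance_percent.values())
estimate = approximate_atomic_weight(tungsten_abundance_percent)

print(f"Abundances sum to {total_percent:.2f} percent")
print(f"Approximate atomic weight: {estimate:.2f}")
```

The estimate lands within a few hundredths of the tabulated 183.85, which is about as close as the mass-number approximation allows.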

Compounds

Chemically, tungsten is relatively inert. Compounds have been prepared, however, in which the element has oxidation states from 0 to +6. The states above +2, especially +6, are most common. In the +4, +5, and +6 states, tungsten forms a variety of complexes.

The most important tungsten compound is tungsten carbide (WC), which is noted for its hardness (9.5 on the Mohs scale, where the maximum, diamond, is 10). It is used alone or in combination with other metals to impart wear-resistance to cast iron and the cutting edges of saws and drills. Tungsten also forms hard, refractory, and chemically inert interstitial compounds with boron, nitrogen, and silicon upon direct reaction with those elements at high temperatures.

#1155 2021-10-10 00:04:26

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1132) Barium

Barium (Ba), chemical element, one of the alkaline-earth metals of Group 2 (IIa) of the periodic table. The element is used in metallurgy, and its compounds are used in pyrotechnics, petroleum production, and radiology.

Element Properties

atomic number : 56

atomic weight : 137.33

melting point : 727 °C (1,341 °F)

boiling point : 1,805 °C (3,281 °F)

specific gravity : 3.51 (at 20 °C, or 68 °F)

oxidation state : +2

Occurrence, Properties, And Uses

Barium, which is slightly harder than lead, has a silvery white lustre when freshly cut. It readily oxidizes when exposed to air and must be protected from oxygen during storage. In nature it is always found combined with other elements. The Swedish chemist Carl Wilhelm Scheele discovered (1774) a new base (baryta, or barium oxide, BaO) as a minor constituent in pyrolusite, and from that base he prepared some crystals of barium sulfate, which he sent to Johan Gottlieb Gahn, the discoverer of manganese. A month later Gahn found that the mineral barite is also composed of barium sulfate, BaSO4. A particular crystalline form of barite found near Bologna, Italy, in the early 17th century, after being heated strongly with charcoal, glowed for a time after exposure to bright light. The phosphorescence of “Bologna stones” was so unusual that it attracted the attention of many scientists of the day, including Galileo. Only after the electric battery became available could Sir Humphry Davy finally isolate (1808) the element itself by electrolysis.

Barium minerals are dense (e.g., BaSO4, 4.5 grams per cubic centimetre; BaO, 5.7 grams per cubic centimetre), a property that was the source of many of their names and of the name of the element itself (from the Greek barys, “heavy”). Ironically, metallic barium is comparatively light, only 30 percent denser than aluminum. Its cosmic abundance is estimated as 3.7 atoms (on a scale where the abundance of silicon = 10⁶ atoms). Barium constitutes about 0.03 percent of Earth’s crust, chiefly as the minerals barite (also called barytes or heavy spar) and witherite. Between six and eight million tons of barite are mined every year, more than half of it in China. Lesser amounts are mined in India, the United States, and Morocco. Commercial production of barium depends upon the electrolysis of fused barium chloride, but the most effective method is the reduction of the oxide by heating with aluminum or silicon in a high vacuum. A mixture of barium monoxide and peroxide can also be used in the reduction. Only a few tons of barium are produced each year.
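The density comparison above can be checked with a short calculation. This Python sketch uses the specific gravity of barium from the Element Properties list (3.51) and the mineral densities quoted in the paragraph; the density of aluminum (2.70 grams per cubic centimetre) is not given in the text and is an assumed reference value.

```python
# Densities in grams per cubic centimetre.
BARIUM_METAL = 3.51      # specific gravity listed under Element Properties
BARIUM_SULFATE = 4.5     # BaSO4, quoted in the text
BARIUM_OXIDE = 5.7       # BaO, quoted in the text
ALUMINUM = 2.70          # assumption: standard handbook value, not in the text


def percent_denser(density: float, reference: float) -> float:
    """Return how much denser `density` is than `reference`, in percent."""
    return (density / reference - 1.0) * 100.0


barium_vs_aluminum = percent_denser(BARIUM_METAL, ALUMINUM)
sulfate_vs_metal = percent_denser(BARIUM_SULFATE, BARIUM_METAL)
oxide_vs_metal = percent_denser(BARIUM_OXIDE, BARIUM_METAL)

print(f"Barium is about {barium_vs_aluminum:.0f}% denser than aluminum")
print(f"BaSO4 is about {sulfate_vs_metal:.0f}% denser than barium metal")
print(f"BaO is about {oxide_vs_metal:.0f}% denser than barium metal")
```

Under the assumed aluminum density, the metal comes out about 30 percent denser than aluminum, while both minerals are noticeably denser than the metal itself.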

The metal is used as a getter in electron tubes to perfect the vacuum by combining with final traces of gases, as a deoxidizer in copper refining, and as a constituent in certain alloys. The alloy with nickel readily emits electrons when heated and is used for this reason in electron tubes and in spark plug electrodes. The detection of barium (atomic number 56) after uranium (atomic number 92) had been bombarded by neutrons was the clue that led to the recognition of nuclear fission in 1939.

Naturally occurring barium is a mixture of six stable isotopes: barium-138 (71.7 percent), barium-137 (11.2 percent), barium-136 (7.8 percent), barium-135 (6.6 percent), barium-134 (2.4 percent), and barium-132 (0.10 percent). Barium-130 (0.11 percent) is also naturally occurring but undergoes decay by double electron capture with an extremely long half-life (more than 4 × 10²¹ years). More than 30 radioactive isotopes of barium are known, with mass numbers ranging from 114 to 153. The isotope with the longest half-life (barium-133, 10.5 years) is used as a gamma-ray reference source.
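The 10.5-year half-life of barium-133 can be illustrated with the standard exponential decay law, N(t)/N(0) = (1/2)^(t / half-life). The Python sketch below is a minimal example; the function name and the sample times are chosen only for illustration.

```python
BARIUM_133_HALF_LIFE_YEARS = 10.5  # half-life quoted in the text


def fraction_remaining(elapsed_years: float, half_life_years: float) -> float:
    """Fraction of a radioactive sample left after `elapsed_years`."""
    return 0.5 ** (elapsed_years / half_life_years)


# Illustrative ages for a barium-133 reference source.
for years in (0.0, 5.0, 10.5, 21.0, 42.0):
    left = fraction_remaining(years, BARIUM_133_HALF_LIFE_YEARS)
    print(f"after {years:4.1f} years: {left:.3f} of the original activity")
```

After one half-life (10.5 years) half of the original barium-133 remains, and after two half-lives (21 years) one quarter remains.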

Compounds

In its compounds, barium has an oxidation state of +2. The Ba²⁺ ion may be precipitated from solution by the addition of carbonate (CO₃²⁻), sulfate (SO₄²⁻), chromate (CrO₄²⁻), or phosphate (PO₄³⁻) anions. All soluble barium compounds are toxic to mammals, probably by interfering with the functioning of potassium ion channels.

Barium sulfate (BaSO4) is a white, heavy insoluble powder that occurs in nature as the mineral barite. Almost 80 percent of world consumption of barium sulfate is in drilling muds for oil. It is also used as a pigment in paints, where it is known as blanc fixe (i.e., “permanent white”) or as lithopone when mixed with zinc sulfide. The sulfate is widely used as a filler in paper and rubber and finds an important application as an opaque medium in the X-ray examination of the gastrointestinal tract.

Most barium compounds are produced from the sulfate via reduction to the sulfide, which is then used to prepare other barium derivatives. About 75 percent of all barium carbonate (BaCO3) goes into the manufacture of specialty glass, either to increase its refractive index or to provide radiation shielding in cathode-ray and television tubes. The carbonate also is used to make other barium chemicals, as a flux in ceramics, in the manufacture of ceramic permanent magnets for loudspeakers, and in the removal of sulfate from salt brines before they are fed into electrolytic cells (for the production of chlorine and alkali). On heating, the carbonate forms barium oxide, BaO, which is employed in the preparation of cuprate-based high-temperature superconductors such as YBa2Cu3O7−x. Another complex oxide, barium titanate (BaTiO3), is used in capacitors, as a piezoelectric material, and in nonlinear optical applications.

Barium chloride (BaCl₂·2H₂O), consisting of colourless crystals that are soluble in water, is used in heat-treating baths and in laboratories as a chemical reagent to precipitate soluble sulfates. Although brittle, crystalline barium fluoride (BaF₂) is transparent to a broad region of the electromagnetic spectrum and is used to make optical lenses and windows for infrared spectroscopy. The oxygen compound barium peroxide (BaO₂) was used in the 19th century for oxygen production (the Brin process) and as a source of hydrogen peroxide. Volatile barium compounds impart a yellowish green colour to a flame, the emitted light being of mostly two characteristic wavelengths. Barium nitrate, formed with the nitrogen-oxygen group NO₃⁻, and chlorate, formed with the chlorine-oxygen group ClO₃⁻, are used for this effect in green signal flares and fireworks.

#1156 2021-10-11 01:01:39

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

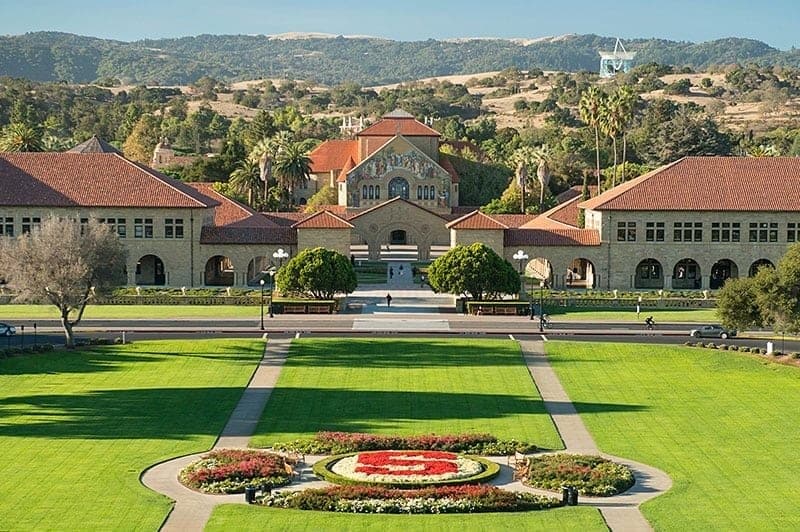

1133) Stanford University

Stanford University, official name Leland Stanford Junior University, private coeducational institution of higher learning at Stanford, California, U.S. (adjacent to Palo Alto), one of the most prestigious in the country. The university was founded in 1885 by railroad magnate Leland Stanford and his wife, Jane (née Lathrop), and was dedicated to their deceased only child, Leland, Jr.; it opened in 1891. The university campus largely occupies Stanford’s former Palo Alto farm. The buildings, conceived by landscape architect Frederick Law Olmsted and designed by architect Charles Allerton Coolidge, are of soft buff sandstone in a style similar to the old California mission architecture, being long and low with wide colonnades, open arches, and red-tiled roofs. The campus sustained heavy damage from earthquakes in 1906 and 1989 but was rebuilt each time. The university was coeducational from the outset, though between 1899 and 1933 enrollment of women students was limited to 500.

Stanford maintains overseas study centres in France, Italy, Germany, England, Argentina, Mexico, Chile, Japan, and Russia; about one-third of its undergraduates study at one of these sites for one or two academic quarters. A study and internship program is also offered in Washington, D.C. The university offers a broad range of undergraduate, graduate, and professional degree programs in schools of law, medicine, education, engineering, business, earth sciences, and humanities and sciences. Total enrollment exceeds 16,000.

Stanford is a national centre for research and is home to more than 120 research institutes. The Hoover Institution on War, Revolution and Peace—founded in 1919 by Stanford alumnus (and future U.S. president) Herbert Hoover to preserve documents related to World War I—contains more than 1.6 million volumes and 50 million documents dealing with 20th-century international relations and public policy. The Stanford Linear Accelerator Center (SLAC), established in 1962, is one of the world’s premier laboratories for research in particle physics. Other noted research facilities include the Stanford Institute for Economic Policy Research, the Institute for International Studies, and the Stanford Humanities Center.

The Stanford Medical Center, completed on the campus in 1959, is one of the top teaching hospitals in the country. Other notable campus locations are the Iris & B. Gerald Cantor Center for Visual Arts (housing the university museum) and its adjacent sculpture garden, containing works by Auguste Rodin, and Hanna House (1937), designed by architect Frank Lloyd Wright. Adjacent to the campus is the Stanford Research Park (1951), one of the world’s principal locations for the development of electronics and computer technology. The Hopkins Marine Station is maintained by the university at Pacific Grove on Monterey Bay, and a biological field station is located near the campus at Jasper Ridge Biological Preserve.

Stanford’s distinguished faculty has included many Nobel laureates, including Milton Friedman (economics), Arthur Kornberg (biochemistry), and Burton Richter (physics). Among the university’s many notable alumni are writers John Steinbeck and Ken Kesey, painter Robert Motherwell, U.S. Supreme Court Justices William Hubbs Rehnquist and Sandra Day O’Connor, astronaut Sally Ride, and golfer Tiger Woods.

#1157 2021-10-12 00:31:50

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1134) Thanatophobia

Fear of Death Phobia – Thanatophobia

The extreme and often irrational thought or fear of death leads to the phobia known as Thanatophobia. Very severe cases of thanatophobia often negatively impact the day-to-day functioning of the individual suffering from this condition. Often s/he refuses to leave the home owing to this fear. The talk or thought of death (or what lies after death) can trigger panic attacks in patients. Thanatophobia is also known by various other names such as:

• Fear of entombment or the fear of being buried

• Dying phobia

• Fear of cremation

• Thantophobia

• Fear of the unknown

Causes of the fear of death phobia

As is the case with several other kinds of fears and phobias, the fear of death also results from external events (a traumatic past) or the internalization of, or predisposition to, extreme concepts about death. As children, we learn that death is inevitable and unpredictable. But this knowledge can paralyze or overwhelm the person coping with Thanatophobia.

Symptoms of Thanatophobia

The mere mention of death, or images or thoughts of it, can trigger crippling anxiety in the patient. The following emotional, mental and physical symptoms are experienced by thanatophobic patients:

• Physical Symptoms: Dizziness, dry mouth, sweating, palpitations, nausea, stomach pain, trembling, sensation of choking, chest pain or discomfort, hot or cold flashes, numbness and tingling sensations.

• Mental Symptoms: Loss of control (a feeling of going crazy, with automatic or uncontrollable reactions), repetition of gory thoughts, inability to distinguish between reality and unreality.

• Emotional Symptoms: Desire to flee and escape from the current situation, extreme avoidance, persistent worry and terrifying or overwhelming thoughts. Anger, sadness and guilt may also be present.

Diagnosis and Treatment of Thanatophobia

Before diagnosing the fear of death, it is important to consider a few conditions that are mistaken for Thanatophobia. Depression, ADHD and bipolar disorders are often linked to this type of phobia. In other cases, undiagnosed conditions like Alzheimer’s disease, migraines, concentration disorders, strokes, schizophrenia and epilepsy may actually be related to Thanatophobia.

Diagnosis of thanatophobia is best done by the patient himself or herself. If extreme thoughts of death affect a person’s life so much that s/he is unable to leave home, or daily functioning is compromised, then s/he must discuss this with a medical doctor. After ruling out any physical conditions, the doctor might refer the patient to a mental health professional to further evaluate the condition.

Many kinds of treatments and therapies are available today to help individuals cope with Thanatophobia.

• Anti-anxiety medicines (as yet, no scientific studies have proven their efficacy in treating the fear of death phobia). Anxiety medications can also have side effects.

• Hypnotherapy

• Religious counseling

• Talk therapy

• Neuro-linguistic programming

• Cognitive behavioral therapy and behavior therapy

• Relaxation techniques like imagery, meditation, controlled breathing and positive reaffirmations/visualizations

• Exposure therapy or regression therapy wherein the patient is made to relive certain events, analyze them and interpret them correctly. This helps one resolve issues surrounding the event.

• Self-help techniques

• Group therapies with other patients suffering from Thanatophobia

The goal of each of these therapies is to help the patient pinpoint the exact inciting factor of the fear of death. The therapists help the patient understand why the fear is unfounded and systematically and gradually help the patient cope with these thoughts. This, in turn, helps the patient control his/her physical and mental responses to the fear of death.

In conclusion

Thantophobia or Thanatophobia is a complex phobia which, if left untreated, can touch every aspect of the individual’s life. However, one must not lose hope but should opt for treatments and therapies that can help one cope with it. Family and friends can also play a very important role in helping the individual deal with his/her fear of death.

#1158 2021-10-13 00:21:41

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

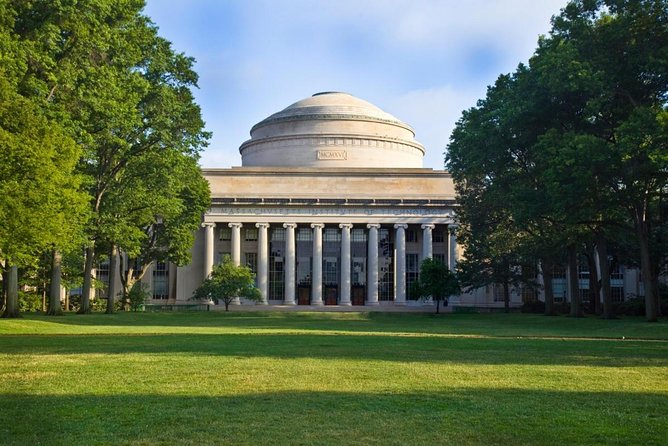

1135) Massachusetts Institute of Technology

Massachusetts Institute of Technology (MIT), privately controlled coeducational institution of higher learning famous for its scientific and technological training and research. It was chartered by the state of Massachusetts in 1861 and became a land-grant college in 1863. William Barton Rogers, MIT’s founder and first president, had worked for years to organize an institution of higher learning devoted entirely to scientific and technical training, but the outbreak of the American Civil War delayed the opening of the school until 1865, when 15 students enrolled for the first classes, held in Boston. MIT moved to Cambridge, Massachusetts, in 1916; its campus is located along the Charles River.

Under the administration of president Karl T. Compton (1930–48), the institute evolved from a well-regarded technical school into an internationally known centre for scientific and technical research. During the Great Depression, its faculty established prominent research centres in a number of fields, most notably analog computing (led by Vannevar Bush) and aeronautics (led by Charles Stark Draper). During World War II, MIT administered the Radiation Laboratory, which became the nation’s leading centre for radar research and development, as well as other military laboratories. After the war, MIT continued to maintain strong ties with military and corporate patrons, who supported basic and applied research in the physical sciences, computing, aerospace, and engineering.

MIT offers both graduate and undergraduate education. There are five academic schools—the School of Architecture and Planning, the School of Engineering, the School of Humanities, Arts, and Social Sciences, the MIT Sloan School of Management, and the School of Science—and the Whitaker College of Health Sciences and Technology. While MIT is perhaps best known for its programs in engineering and the physical sciences, other areas—notably economics, political science, urban studies, linguistics, and philosophy—are also strong. Admission is extremely competitive, and undergraduate students are often able to pursue their own original research. Total enrollment is about 10,000.

MIT has numerous research centres and laboratories. Among its facilities are a nuclear reactor, a computation centre, geophysical and astrophysical observatories, a linear accelerator, a space research centre, wind tunnels, an artificial intelligence laboratory, a centre for cognitive science, and an international studies centre. MIT’s library system is extensive and includes a number of specialized libraries. There are also several museums.

The MIT community is driven by a shared purpose: to make a better world through education, research, and innovation. We are fun and quirky, elite but not elitist, inventive and artistic, obsessed with numbers, and welcoming to talented people regardless of where they come from.

Founded to accelerate the nation’s industrial revolution, MIT is profoundly American. With ingenuity and drive, our graduates have invented fundamental technologies, launched new industries, and created millions of American jobs. At the same time, and without the slightest sense of contradiction, MIT is profoundly global. Our community gains tremendous strength as a magnet for talent from around the world. Through teaching, research, and innovation, MIT’s exceptional community pursues its mission of service to the nation and the world.

#1159 2021-10-14 00:27:16

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1136) California Institute of Technology

California Institute of Technology, byname Caltech, private coeducational university and research institute in Pasadena, California, U.S., emphasizing graduate and undergraduate instruction and research in pure and applied science and engineering. The institute comprises six divisions: biology; chemistry and chemical engineering; engineering and applied science; geological and planetary sciences; humanities and social sciences; and physics, mathematics, and astronomy. Total enrollment is approximately 2,000, of which more than half are graduate students.

Superbly equipped, and staffed by a faculty of some 1,000 distinguished and creative scientists, Caltech is considered one of the world’s major research centres. Dozens of eminent scientists (including many Nobel Prize winners) have worked and taught there, including physicists Robert Andrews Millikan, Richard P. Feynman, and Murray Gell-Mann; astronomer George Ellery Hale; and chemist Linus Pauling. In 1958 the Jet Propulsion Laboratory at Caltech, operating in conjunction with the National Aeronautics and Space Administration, launched Explorer I, the first U.S. satellite, and it subsequently conducted other programs of space and lunar exploration. Caltech operates astronomical observatories at Owens Valley, Mount Palomar, and Big Bear Lake in California and at Mauna Kea in Hawaii. Other institute facilities include a seismological laboratory in Pasadena and a marine biological laboratory at Corona del Mar.

Caltech was established in 1891 as a school for arts and crafts. First called Throop University and later Throop Polytechnic Institute, it assumed its present name in 1920. The institute originally included curricula in business and education, but in 1907 it dropped several programs and began specializing in science and technology, with a focus on creativity and research.

The California Institute of Technology (or Caltech) is one of the foremost scientific and technical institutions in the United States. It is a private university and research institute located in Pasadena, California, about 12 miles (19 kilometers) from Los Angeles. Caltech traces its origins back to Throop University, a school of arts and crafts founded in 1891. It began specializing in science and technology in 1907 and took its present name in 1920. Caltech has been coeducational since 1970, but men still outnumber women. The university enrolls roughly 2,000 students, the majority of whom are graduate students.

Caltech conducts degree programs from the bachelor’s through the doctoral level. The university consists of six academic divisions: biology; chemistry and chemical engineering; engineering and applied science; geological and planetary sciences; physics, mathematics, and astronomy; and humanities and social sciences. Its programs in science and engineering are ranked among the best in the United States. Caltech was also the first scientific institution to require undergraduates to take at least 20 percent of their courses in humanities and cultural studies. The institute provides many opportunities for undergraduates to conduct research, including a summer research fellowship program. The students work on independent projects under the guidance of a faculty sponsor, and many have their work published in scientific journals. To encourage an atmosphere of trust in an intense environment, everyone at Caltech is expected to follow an honor code, which states that “no member of the Caltech community shall take unfair advantage of any other member of the Caltech community.”

Superbly equipped and staffed by a faculty of distinguished and creative scientists, Caltech is considered one of the world’s major research centers. Dozens of eminent scientists, including many Nobel prize winners, have worked and taught there. Among them have been physicists Robert Andrews Millikan, Richard P. Feynman, and Murray Gell-Mann, astronomer George Ellery Hale, and chemist Linus Pauling. Caltech operates the Jet Propulsion Laboratory (JPL) in conjunction with the National Aeronautics and Space Administration (NASA). In 1958 JPL launched Explorer I, the first U.S. satellite, and since then it has conducted many other space exploration programs. Caltech also operates astronomical observatories at Owens Valley, Mount Palomar, Big Bear Lake, and Cedar Flat in California and at Mauna Kea in Hawaii. Other institute facilities include a seismological laboratory in Pasadena and a marine biological laboratory at Corona del Mar, California.

Caltech’s Beavers, the school’s varsity sports teams, compete in Division III of the National Collegiate Athletic Association (NCAA). School colors are orange and white.

#1160 2021-10-15 01:04:03

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1137) Imperial College London

Imperial College London, institution of higher learning in London. It is one of the leading research colleges or universities in England. Its main campus is located in South Kensington (in Westminster), and its medical school is linked with several London teaching hospitals. Its three- to five-year courses of study lead to bachelor’s, master’s, and doctorate degrees. These degree programs include the biological and physical sciences, engineering, computing, geology, and preclinical and clinical medicine. Among its research centres are the Centre for Environmental Technology, National Heart and Lung Institute, Centre for Population Biology, Centre for Composite Materials, and Centre for the History of Science, Technology and Medicine. Total enrollment is approximately 12,000, including over 4,700 engineering students.

The Royal College of Science was founded in 1845 by Prince Albert, the consort of Queen Victoria. The Royal School of Mines was founded in 1851, and the City and Guilds College was founded in 1884. The institutions united to form the Imperial College of Science and Technology in 1907 and became a school of the University of London in 1908. An act of Parliament in 1988 made St. Mary’s Hospital Medical School, founded in 1854, the college’s fourth school. The National Heart and Lung Institute was joined with the college in 1995, creating with St. Mary’s the new Imperial College School of Medicine. The Charing Cross and Westminster Medical School, as well as the Royal Postgraduate Medical School, were merged with the institution in 1997, and in 2000 it also merged with Wye College. In 2006 Imperial College withdrew from the University of London in order to become an independent university.

Imperial College London has a 240-acre (97-hectare) site with wetland, farmland, parkland, and laboratories at Silwood Park near Ascot, Berkshire; it also owns a mine near Truro, Cornwall.

Ranked 7th in the world in the QS World University Rankings® 2022, Imperial College London is a one-of-a-kind institution in the UK, focusing solely on science, engineering, medicine and business. Imperial offers an education that is research-led, exposing you to real-world challenges with no easy answers, teaching that opens everything up to question, and opportunities to work across multi-cultural, multi-national teams.

Imperial is based in South Kensington in London, in an area known as ‘Albertopolis’, Prince Albert and Sir Henry Cole’s 19th century vision for an area where science and the arts would come together. As a result, Imperial’s neighbours include a number of world-leading cultural organizations including the Science, Natural History and Victoria and Albert museums; the Royal Colleges of Art and Music; and the Royal Albert Hall, where all of Imperial’s students also graduate.

There is plenty of green space too, including two Royal Parks (Hyde Park and Kensington Gardens) within 10 minutes’ walk of campus. Travel to and from the area is also really easy as it’s served by three Tube lines and many bus routes.

One of the most distinctive elements of an Imperial education is that students join a community of world-class researchers. Imperial is best known for the cutting-edge and globally influential nature of this research. The focus on the practical application of the research, particularly in addressing global challenges, and the high level of interdisciplinary collaboration are what make it so effective.

The number of award winners, Nobel Prize holders and holders of prestigious Fellowships (Royal Society, Royal Academy of Engineering, Academy of Medical Sciences) among Imperial’s staff is a testament to the outstanding contributions they have made in their respective fields.

Imperial is one of the most international universities in the world: 59% of its student body in 2019-20 were non-UK citizens, and more than 140 countries are currently represented on campus. Meanwhile, the College’s staff, like its students, are diverse in their cultural backgrounds, nationalities and experiences.

#1161 2021-10-16 03:28:25

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1138) Yale University

Founded in 1701, Yale University is a private Ivy League research university located in New Haven, Connecticut. It is one of the nine Colonial Colleges chartered before the American Revolution and the third-oldest institution of higher education in the USA. The more than 300-year-old institution traces its roots to the 1640s, when colonial clergymen took the initiative to lay the foundations of a local college in order to preserve the tradition of European liberal education in the New World. In 1701, Yale was established as a collegiate school near Saybrook, Connecticut. The collegiate school was renamed Yale College in 1718 in recognition of the donation of books and goods made by Welsh merchant Elihu Yale.

Connecticut Hall, one of the oldest buildings in New Haven and a national historic landmark, was constructed in 1750. Yale Law School, established in 1824, is consistently ranked as a prominent law school in the nation. The law school has a long history of nurturing and training outstanding jurists, judges, practitioners, and government officials. In 1836, the Yale Literary Magazine was founded. Considered the oldest literary review in the country, it emerged at the forefront of novel ways of studying literature. In 1861, Yale became the first university in America to award Doctor of Philosophy degrees. The University currently consists of fourteen constituent schools: the undergraduate liberal arts college, the Graduate School of Arts and Sciences and twelve professional schools.

In 2018, the University taught 13,433 full-time and part-time students, including 5,964 undergraduates and 7,469 graduate and professional students. In addition to more than 80 majors available to undergraduates, the University offers a number of supplementary programs intended to give students specialized knowledge across a variety of areas. Undergraduates can choose from over 2,000 courses offered each year. Yale’s student body, one of the most diverse in the world, includes students from different backgrounds and experiences. In 2018, 2,694 international students (reported as nearly 20.7% of the student body) from 123 countries were enrolled at Yale. The majority of the international student population comes from Canada, China, Germany, India, South Korea, and the United Kingdom.

Yale’s Endowment generated an 11.3% return. Over the past ten years, the Endowment grew from $22.5 billion to $30.31 billion. Its performance exceeded its benchmark and outpaced institutional fund indices, with annual returns of 6.6% over the same period.

Yale’s notable alumni include 61 Nobel laureates, 78 MacArthur Fellows, 247 Rhodes Scholars, 119 Marshall Scholars, 5 Fields Medalists and 3 Turing Award winners. In addition, five U.S. Presidents (George W. Bush, Bill Clinton, George H.W. Bush, William Howard Taft and Gerald Ford), 19 U.S. Supreme Court Justices and a number of billionaires have been affiliated with Yale University. Some royals have also attended Yale, including Crown Princess Victoria of Sweden, Prince Rostislav Romanov and Prince Akiiki Hosea Nyabongo.

Yale University, private university in New Haven, Connecticut, one of the Ivy League schools. It was founded in 1701 and is the third oldest university in the United States. Yale was originally chartered by the colonial legislature of Connecticut as the Collegiate School and was held at Killingworth and other locations. In 1716 the school was moved to New Haven, and in 1718 it was renamed Yale College in honour of a wealthy British merchant and philanthropist, Elihu Yale, who had made a series of donations to the school. Yale’s initial curriculum emphasized classical studies and strict adherence to orthodox Puritanism.

Yale’s medical school was organized in 1810. The divinity school arose from a department of theology created in 1822, and a law department became affiliated with the college in 1824. The geologist Benjamin Silliman, who taught at Yale between 1802 and 1853, did much to make the experimental and applied sciences a respectable field of study in the United States. While at Yale he founded the American Journal of Science and Arts (later shortened to American Journal of Science), which was one of the great scientific journals of the world in the 19th century. Yale’s Sheffield Scientific School, begun in the 1850s, was one of the leading scientific and engineering centres until 1956, when it merged with Yale College and ceased to exist.

A graduate school of arts and sciences was organized in 1847, and a school of art was created in 1866. Music, forestry and environmental studies, nursing, drama, management, architecture, physician associate, and public health professional school programs were subsequently established. The college was renamed Yale University in 1864. Women were first admitted to the graduate school in 1892, but the university did not become fully coeducational until 1969. A system of residential colleges was instituted in the 1930s.

Yale is highly selective in its admissions and is among the nation’s most highly rated schools in terms of academic and social prestige. It includes Yale College (undergraduate), the Graduate School of Arts and Sciences, and 12 professional schools.

The Yale University Library, with more than 15 million volumes, is one of the largest in the United States. Yale’s extensive art galleries, the first in an American college, were established in 1832 when John Trumbull donated a gallery to house his paintings of the American Revolution. Yale’s Peabody Museum of Natural History houses important collections of paleontology, archaeology, and ethnology.

Yale’s graduates have included U.S. Presidents William Howard Taft, Gerald Ford, George H.W. Bush, Bill Clinton, and George W. Bush; Civil War-era leader John C. Calhoun; theologian Jonathan Edwards; inventors Eli Whitney and Samuel F.B. Morse; and lexicographer Noah Webster. After several years of debate, in 2017 the university announced that the name of Calhoun College, one of the original residential colleges, would be changed to Hopper College, after the 20th-century mathematician, naval officer, and Yale alumna Grace Hopper. Advocates of the renaming had argued that it was inappropriate for the university to honour Calhoun, who had been an ardent proponent of slavery and a white supremacist.

#1162 2021-10-17 00:06:06

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1139) Ophthalmology

Ophthalmology, medical specialty dealing with the diagnosis and treatment of diseases and disorders of the eye. The first ophthalmologists were oculists. These paramedical specialists practiced on an itinerant basis during the Middle Ages. Georg Bartisch, a German physician who wrote on eye diseases in the 16th century, is sometimes credited with founding the medical practice of ophthalmology. Many important eye operations were first developed by oculists, as, for example, the surgical correction of strabismus, first performed in 1738. The first descriptions of visual defects included those of glaucoma (1750), night blindness (1767), colour blindness (1794), and astigmatism (1801).

The first formal course in ophthalmology was taught at the medical school of the University of Göttingen in 1803, and the first medical eye clinic with an emphasis on teaching, the London Eye Infirmary, was opened in 1805, initiating the modern specialty. Advances in optics by the Dutch physician Frans Cornelis Donders in 1864 established the modern system of prescribing and fitting eyeglasses to a particular vision problem. The invention of the ophthalmoscope for looking at the interior of the eye created the possibility of relating eye defects to internal medical conditions.

In the 20th century, advances in the field have chiefly involved the prevention of eye disease through regular eye examinations and the early treatment of congenital eye defects. Another major development was the eye bank, first established in 1944 in New York, which made corneal tissues for transplantation more generally available.

The function of the human eye is to receive visual images. Whatever adversely affects vision is the concern of the ophthalmologist, whether it be caused by faulty development of the eye, disease, injury, degeneration, senescence, or refraction. The ophthalmologist tests visual function and examines the interior of the eye as part of a general physical examination for symptoms of systemic or neurologic diseases, prescribes medical treatment for eye disease and glasses for refraction, and performs surgical operations where indicated.

An ophthalmologist is a medical or osteopathic doctor who specializes in eye and vision care. Ophthalmologists differ from optometrists and opticians in their levels of training and in what they can diagnose and treat.

When it's time to get your eyes checked, make sure you are seeing the right eye care professional for your needs. Each member of the eye care team plays an important role in providing eye care, but many people confuse the different providers and their roles in maintaining your eye health. The levels of training and expertise, and what each provider is allowed to do for you, are the major differences between the types of eye care providers.

Ophthalmologists are eye physicians with advanced medical and surgical training

Ophthalmologists complete 12 to 13 years of training and education, and are licensed to practice medicine and surgery. This advanced training allows ophthalmologists to diagnose and treat a wider range of conditions than optometrists and opticians. Typical training includes a four-year college degree followed by at least eight years of additional medical training.

An ophthalmologist diagnoses and treats all eye diseases, performs eye surgery and prescribes and fits eyeglasses and contact lenses to correct vision problems. Many ophthalmologists are also involved in scientific research on the causes and cures for eye diseases and vision disorders. Because they are medical doctors, ophthalmologists can sometimes recognize other health problems that aren't directly related to the eye, and refer those patients to the right medical doctors for treatment.

Some ophthalmologists have specialized expertise in specific eye conditions

While ophthalmologists are trained to care for all eye problems and conditions, some ophthalmologists specialize further in a specific area of medical or surgical eye care. This person is called a subspecialist. He or she usually completes one or two years of additional, more in-depth training (called a Fellowship) in one of the main subspecialty areas such as Glaucoma, Retina, Cornea, Pediatrics, Neurology, Oculo-Plastic Surgery or others. This added training and knowledge prepares an ophthalmologist to take care of more complex or specific conditions in certain areas of the eye or in certain groups of patients.

Optometrists provide vision tests, prescribe lenses and treat certain eye conditions

Optometrists are healthcare professionals who provide primary vision care ranging from vision testing and correction to the diagnosis, treatment, and management of vision changes. An optometrist is not a medical doctor. An optometrist receives a doctor of optometry (OD) degree after completing 2 to 4 years of college-level education, followed by four years of optometry school. They are licensed to practice optometry, which primarily involves performing eye exams and vision tests, prescribing and dispensing corrective lenses, detecting certain eye abnormalities and prescribing medications for certain eye diseases. Many ophthalmologists and optometrists work together in the same offices, as a team. In the United States, what optometrists are licensed to do for patients can vary from state to state.

Opticians fit eyeglasses and contact lenses

Opticians are technicians trained to design, verify and fit eyeglass lenses and frames, contact lenses and other devices to correct eyesight. They use prescriptions supplied by ophthalmologists or optometrists, but do not test vision or write prescriptions for visual correction. Opticians are not permitted to diagnose or treat eye diseases.

Ophthalmic medical assistants help physicians examine and treat patients

These technicians work in the ophthalmologist's office and are trained to perform a variety of tests and help the physician with examining and treating patients.

Ophthalmic technicians/technologists assist with medical tests and minor surgeries

These are highly trained or experienced medical assistants who assist the physician with more complicated or technical medical tests and minor office surgery.

Ophthalmic registered nurses deliver medications and assist with surgeries

These clinicians have undergone special nursing training and may have additional training in ophthalmic nursing. They may assist the physician in more technical tasks, such as injecting medications or assisting with hospital or office surgery. Some ophthalmic registered nurses also serve as clinic or hospital administrators.

Ophthalmic photographers use cameras to document a patient's eyes

These individuals use specialized cameras and photographic methods to document patients' eye conditions in photographs.

See the right eye care provider at the right time

Without healthy vision it can be hard to work, play, drive or even recognize a face. Many factors can affect eyesight, including other health problems like high blood pressure or diabetes. Having a family member with eye disease can make you more prone to having that condition. Sight-stealing eye disease can appear at any time. Often vision changes are unnoticeable at first and difficult to detect.

If you've never had a complete, dilated eye exam, note that the American Academy of Ophthalmology recommends that everyone have a complete medical eye exam by age 40, and then as often as recommended by your ophthalmologist. Even if you're healthy, it's important to have a baseline eye exam, to compare against in the future and help spot changes or problems.

There are many possible symptoms of eye disease. If you have any concerns about your eyes or vision, visit an ophthalmologist. A complete, medical eye exam by an ophthalmologist could be the first step toward saving your sight.

Ophthalmology Subspecialists

From Aqueous to Zonules: Subspecialists Focus on Different Parts of the Eye

When you visit an ophthalmologist, you are seeing the only kind of doctor who is trained in all aspects of eye care. A comprehensive ophthalmologist (also known as a general ophthalmologist) can diagnose and treat eye diseases, perform eye surgery and prescribe and fit eyeglasses and contact lenses. Many comprehensive ophthalmologists have additional training to treat specific eye conditions, such as glaucoma or cataracts. But if your comprehensive ophthalmologist finds you have a condition that requires more specific care for a certain part of the eye, he or she will have you see a subspecialist.

More Training for More Focus

Subspecialists have intensive training in a particular area of the eye. To become subspecialists, ophthalmologists add a fellowship to their years of medical training. A fellowship prepares an ophthalmologist to treat more specific or complex conditions in certain parts of the eye or in certain types of patients. Fellowship-trained ophthalmologists have a total of 9 to 10 years of training after they finish college.

What Subspecialist Should You See?

If your comprehensive ophthalmologist decides you need to see a subspecialist for your condition, he or she may refer you to one for follow-up care.

Cornea

The cornea is the clear, dome-shaped covering in front of the iris and pupil. A cornea subspecialist diagnoses and manages corneal eye disease, including Fuchs’ dystrophy and keratoconus. Many cornea subspecialists also perform refractive surgery (such as LASIK), as well as corneal transplants. They also handle corneal trauma as well as complicated contact lens fittings.

Retina

The retina is the light-sensitive tissue lining the back of the eye. The macula is a small area of the retina responsible for your central, detail vision. A retina specialist diagnoses and manages retinal diseases, including macular degeneration and diabetic eye disease. They surgically repair torn and detached retinas and treat problems with the vitreous, the gel-like substance in the middle of the eyeball.

Glaucoma

Glaucoma is a disease that affects the optic nerve, which connects the eye to the brain. If fluid does not circulate properly inside your eye, pressure builds and damages the optic nerve. Glaucoma subspecialists use medicine, laser and surgery to manage eye pressure.

Pediatrics

Pediatric ophthalmologists treat eye conditions in infants and children. They diagnose and treat misalignment of the eyes, uncorrected refractive errors and vision differences between the two eyes, as well as childhood eye diseases and other conditions. Strabismus specialists also treat adults with eyes that do not work properly together.

Oculoplastics

Oculoplastic surgeons repair damage to or problems with the eyelids, bones and other structures around the eyeball, and in the tear drainage system. They do medical injections around the eyes and face to improve the look and function of facial structures.

Neurology

Neuro-ophthalmologists take care of vision problems related to how the eyes interact with the brain, nerves and muscles. Among other conditions, they diagnose and treat optic nerve problems, various types of vision loss, double vision, abnormal eye movements, unequal pupil size, and eyelid abnormalities. Diseases which can cause these problems include strokes, brain tumors, multiple sclerosis, and thyroid eye disease.

It appears to me that if one wants to make progress in mathematics, one should study the masters and not the pupils. - Niels Henrik Abel.

Nothing is better than reading and gaining more and more knowledge - Stephen William Hawking.


#1163 2021-10-18 00:09:02

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

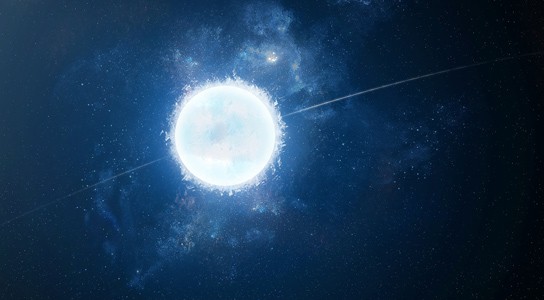

1140) Neutron star

Neutron star, any of a class of extremely dense, compact stars thought to be composed primarily of neutrons. Neutron stars are typically about 20 km (12 miles) in diameter. Their masses range between 1.18 and 1.97 times that of the Sun, but most are 1.35 times that of the Sun. Thus, their mean densities are extremely high, about $10^{14}$ times that of water. This approximates the density inside the atomic nucleus, and in some ways a neutron star can be conceived of as a gigantic nucleus. It is not known definitively what is at the centre of the star, where the pressure is greatest; theories include hyperons, kaons, and pions. The intermediate layers are mostly neutrons and are probably in a “superfluid” state. The outer 1 km (0.6 mile) is solid, in spite of the high temperatures, which can be as high as 1,000,000 K. The surface of this solid layer, where the pressure is lowest, is composed of an extremely dense form of iron.
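As a rough check on the density figure, the sketch below treats the star as a uniform sphere using the typical mass (1.35 solar masses) and diameter (20 km) quoted above. The solar mass and water density constants are standard reference values assumed here, not taken from the text, and the function name is only illustrative.

```python
import math

# Assumed reference constants (not given in the text above).
SOLAR_MASS_KG = 1.989e30
WATER_DENSITY_KG_M3 = 1000.0


def mean_density(mass_solar: float, diameter_km: float) -> float:
    """Mean density in kg/m^3, treating the star as a uniform sphere."""
    radius_m = diameter_km * 1000.0 / 2.0
    volume_m3 = (4.0 / 3.0) * math.pi * radius_m ** 3
    return mass_solar * SOLAR_MASS_KG / volume_m3


# Typical values quoted in the text: 1.35 solar masses, 20 km diameter.
density = mean_density(1.35, 20.0)
ratio_to_water = density / WATER_DENSITY_KG_M3

print(f"mean density: {density:.2e} kg/m^3")
print(f"relative to water: {ratio_to_water:.1e}")
```

With these inputs the ratio comes out at a few times $10^{14}$, the same order of magnitude as the figure in the text. A real neutron star is not uniform, so this is only an average.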

Another important characteristic of neutron stars is the presence of very strong magnetic fields, upward of $10^{12}$ gauss (Earth’s magnetic field is 0.5 gauss), which causes the surface iron to be polymerized in the form of long chains of iron atoms. The individual atoms become compressed and elongated in the direction of the magnetic field and can bind together end-to-end. Below the surface, the pressure becomes much too high for individual atoms to exist.

The discovery of pulsars in 1967 provided the first evidence of the existence of neutron stars. Pulsars are neutron stars that emit pulses of radiation once per rotation. The radiation emitted is usually radio waves, but pulsars are also known to emit in optical, X-ray, and gamma-ray wavelengths. The very short periods of, for example, the Crab (NP 0532) and Vela pulsars (33 and 83 milliseconds, respectively) rule out the possibility that they might be white dwarfs. The pulses result from electrodynamic phenomena generated by their rotation and their strong magnetic fields, as in a dynamo. In the case of radio pulsars, neutrons at the surface of the star decay into protons and electrons. As these charged particles are released from the surface, they enter the intense magnetic field that surrounds the star and rotates along with it. Accelerated to speeds approaching that of light, the particles give off electromagnetic radiation by synchrotron emission. This radiation is released as intense radio beams from the pulsar’s magnetic poles.
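One common way to see why millisecond periods rule out white dwarfs is the breakup limit: a star cannot spin faster than the rate at which gravity can still hold material at its equator, which gives a minimum period of roughly $P = 2\pi\sqrt{R^3/(GM)}$. The sketch below applies this Newtonian estimate. The white dwarf mass and radius (1 solar mass, 6,000 km) are illustrative assumptions, not values from the text, and the neutron star values reuse the typical figures quoted earlier in this post.

```python
import math

# Assumed physical constants (standard reference values).
G = 6.674e-11            # gravitational constant, m^3 kg^-1 s^-2
SOLAR_MASS_KG = 1.989e30


def breakup_period_s(mass_solar: float, radius_km: float) -> float:
    """Newtonian minimum rotation period: P = 2*pi*sqrt(R^3 / (G*M))."""
    radius_m = radius_km * 1000.0
    mass_kg = mass_solar * SOLAR_MASS_KG
    return 2.0 * math.pi * math.sqrt(radius_m ** 3 / (G * mass_kg))


# Illustrative white dwarf (assumed values) versus a typical neutron star.
white_dwarf_p = breakup_period_s(1.0, 6000.0)
neutron_star_p = breakup_period_s(1.35, 10.0)

print(f"white dwarf minimum period:  {white_dwarf_p:.2f} s")
print(f"neutron star minimum period: {neutron_star_p * 1000:.2f} ms")

# Observed periods from the text: Crab 33 ms, Vela 83 ms.
for name, period_s in [("Crab", 0.033), ("Vela", 0.083)]:
    print(name, "too fast for this white dwarf:", period_s < white_dwarf_p)
```

Under these assumptions the white dwarf limit is several seconds, while the neutron star limit is well under a millisecond, so only the neutron star can spin as fast as the Crab and Vela pulsars without flying apart.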

Many binary X-ray sources, such as Hercules X-1, contain neutron stars. Cosmic objects of this kind emit X-rays by compression of material from companion stars accreted onto their surfaces.

Neutron stars are also seen as objects called rotating radio transients (RRATs) and as magnetars. The RRATs are sources that emit single radio bursts but at irregular intervals ranging from four minutes to three hours. The cause of the RRAT phenomenon is unknown. Magnetars are highly magnetized neutron stars that have a magnetic field of between $10^{14}$ and $10^{15}$ gauss.

Most investigators believe that neutron stars are formed by supernova explosions in which the collapse of the central core of the supernova is halted by rising neutron pressure as the core density increases to about $10^{15}$ grams per cubic cm. If the collapsing core is more massive than about three solar masses, however, a neutron star cannot be formed, and the core would presumably become a black hole.

What Is a Neutron Star?

Neutron stars are the remnants of giant stars that died in a fiery explosion known as a supernova. After such an outburst, the cores of these former stars compact into an ultradense object with the mass of the sun packed into a ball the size of a city.

How do neutron stars form?

Ordinary stars maintain their spherical shape because the heaving gravity of their gigantic mass tries to pull their gas toward a central point, but is balanced by the energy from nuclear fusion in their cores, which exerts an outward pressure, according to NASA. At the end of their lives, stars that are between four and eight times the sun's mass burn through their available fuel and their internal fusion reactions cease. The stars' outer layers rapidly collapse inward, bouncing off the thick core and then blasting out again as a violent supernova.

But the dense core continues to collapse, generating pressures so high that protons and electrons are squeezed together into neutrons, as well as lightweight particles called neutrinos that escape into the distant universe. The end result is a star whose mass is 90% neutrons, which can't be squeezed any tighter, and therefore the neutron star can't break down any further.

Characteristics of a neutron star

Astronomers first theorized about the existence of these bizarre stellar entities in the 1930s, shortly after the neutron was discovered. But it wasn't until 1967 that scientists had good evidence for neutron stars in reality. A graduate student named Jocelyn Bell at the University of Cambridge in England noticed strange pulses in her radio telescope, arriving so regularly that at first she thought they might be a signal from an alien civilization, according to the American Physical Society. The patterns turned out not to be E.T. but rather radiation emitted by rapidly spinning neutron stars.

The supernova that gives rise to a neutron star imparts a great deal of energy to the compact object, causing it to rotate on its axis typically between 0.1 and 60 times per second, and in some cases up to 700 times per second. The formidable magnetic fields of these entities produce high-powered columns of radiation, which can sweep past the Earth like lighthouse beams, creating what's known as a pulsar.

The properties of neutron stars are utterly out of this world — a single teaspoon of neutron-star material would weigh a billion tons. If you were to somehow stand on their surface without dying, you'd experience a force of gravity 2 billion times stronger than what you feel on Earth.

An ordinary neutron star's magnetic field might be trillions of times stronger than Earth's. But some neutron stars have even more extreme magnetic fields, a thousand or more times the average neutron star. This creates an object known as a magnetar.

Starquakes on the surface of a magnetar — the equivalent of crustal movements on Earth that generate earthquakes — can release tremendous amounts of energy. In one-tenth of a second, a magnetar might produce more energy than the sun has emitted in the last 100,000 years, according to NASA.

Research on neutron stars

Researchers have considered using the stable, clock-like pulses of neutron stars to aid in spacecraft navigation, much like GPS beams help guide people on Earth. An experiment on the International Space Station called Station Explorer for X-ray Timing and Navigation Technology (SEXTANT) was able to use the signal from pulsars to calculate the ISS’s location to within 10 miles (16 km).

But a great deal remains to be understood about neutron stars. For instance, in 2019, astronomers spotted the most massive neutron star ever seen — with about 2.14 times the mass of our sun packed into a sphere most likely around 12.4 miles (20 km) across. At this size, the object is just at the limit where it should have collapsed into a black hole, so researchers are examining it closely to better understand the odd physics potentially at work holding it up.
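To get a feel for how close such an object is to a black hole, one rough comparison is between its radius and the Schwarzschild radius $r_s = 2GM/c^2$ for the same mass. The sketch below does that arithmetic for the 2.14 solar mass, 20 km star described above. The constants are standard reference values assumed here, and the actual collapse limit depends on the poorly known behaviour of dense matter, which this simple estimate does not model.

```python
# Assumed physical constants (standard reference values).
G = 6.674e-11            # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8              # speed of light, m/s
SOLAR_MASS_KG = 1.989e30


def schwarzschild_radius_km(mass_solar: float) -> float:
    """Schwarzschild radius r_s = 2*G*M / c^2, returned in kilometres."""
    mass_kg = mass_solar * SOLAR_MASS_KG
    return 2.0 * G * mass_kg / C ** 2 / 1000.0


# Values quoted in the text: 2.14 solar masses, about 20 km across.
star_radius_km = 20.0 / 2.0
rs_km = schwarzschild_radius_km(2.14)
compactness = rs_km / star_radius_km

print(f"Schwarzschild radius: {rs_km:.2f} km")
print(f"star radius:          {star_radius_km:.1f} km")
print(f"ratio r_s / R:        {compactness:.2f}")
```

On these numbers the star's radius is less than twice its Schwarzschild radius, which illustrates why so little extra mass or compression would be needed to tip it into collapse.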

Researchers are also gaining new tools to better study neutron-star dynamics. Using the Laser Interferometer Gravitational-Wave Observatory (LIGO), physicists have been able to observe the gravitational waves emitted when two neutron stars circle one another and then collide. These powerful mergers might be responsible for making many of the precious metals we have on Earth, including platinum and gold, and radioactive elements, such as uranium.

#1164 2021-10-19 00:07:36

- Jai Ganesh

- Administrator

- Registered: 2005-06-28

- Posts: 52,697

Re: Miscellany

1141) Red Giant Star

Red giant stars: Facts, definition & the future of the sun

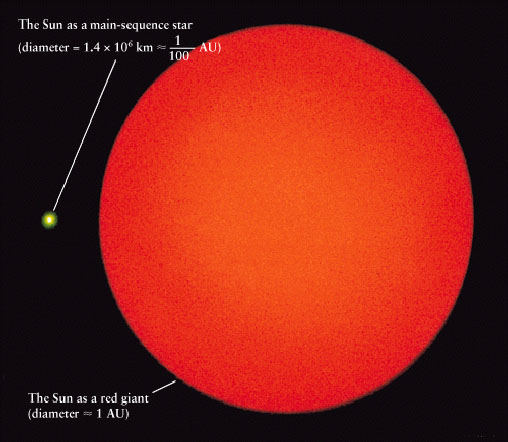

A red giant star is a dying star in the last stages of stellar evolution. In only a few billion years, our own sun will turn into a red giant star, expand and engulf the inner planets, possibly even Earth. What does the future hold for the light of our solar system and others like it?

Forming a giant

Most of the stars in the universe are main sequence stars, those converting hydrogen into helium via nuclear fusion. A main sequence star may have a mass between a third and eight times that of the sun and eventually burns through the hydrogen in its core. Over its life, the outward pressure of fusion has balanced against the inward pressure of gravity. Once the fusion stops, gravity takes the lead and compresses the star smaller and tighter.

Temperatures increase with the contraction, eventually reaching levels where helium is able to fuse into carbon. Depending on the mass of the star, the helium burning might be gradual or might begin with an explosive flash.

"Although fusion is no longer taking place in the core, the rise in temperature heats up the shell of hydrogen surrounding the core until it is hot enough to start hydrogen fusion, producing more energy than when it was a main sequence star," the Australia Telescope National Facility says on their website.

Red giant stars reach sizes of 100 million to 1 billion kilometers in diameter (62 million to 621 million miles), 100 to 1,000 times the size of the sun today. Because the energy is spread across a larger area, surface temperatures are actually cooler, reaching only 2,200 to 3,200 degrees Celsius (4,000 to 5,800 degrees Fahrenheit), a little over half as hot as the sun. This temperature change causes stars to shine in the redder part of the spectrum, leading to the name red giant, though they are often more orangish in appearance.
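The link between a cooler surface and a redder colour can be illustrated with Wien's displacement law, which gives the wavelength at which an ideal blackbody emits most strongly: $\lambda_{peak} = b / T$. The sketch below applies it to the red giant temperatures quoted above. The solar surface temperature (about 5,772 K) and the Wien constant are standard reference values assumed here, and real stars are only approximately blackbodies.

```python
# Assumed reference values (not given in the text above).
WIEN_B_M_K = 2.897771955e-3   # Wien displacement constant, metre-kelvin
SUN_SURFACE_K = 5772.0        # approximate solar effective temperature


def celsius_to_kelvin(temp_c: float) -> float:
    """Convert degrees Celsius to kelvin."""
    return temp_c + 273.15


def peak_wavelength_nm(temp_k: float) -> float:
    """Blackbody peak wavelength from Wien's law, in nanometres."""
    return WIEN_B_M_K / temp_k * 1e9


# Red giant surface temperatures quoted in the text: 2,200 to 3,200 Celsius.
cool_giant_nm = peak_wavelength_nm(celsius_to_kelvin(2200.0))
warm_giant_nm = peak_wavelength_nm(celsius_to_kelvin(3200.0))
sun_nm = peak_wavelength_nm(SUN_SURFACE_K)

print(f"red giant at 2,200 C peaks near {cool_giant_nm:.0f} nm")
print(f"red giant at 3,200 C peaks near {warm_giant_nm:.0f} nm")
print(f"sun peaks near {sun_nm:.0f} nm")
```

Under these assumptions the sun peaks in the middle of the visible range, while the red giant peaks fall beyond the red end, in the near infrared, so the visible light such a star does emit is weighted toward red and orange.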

In 2017, an international team of astronomers observed the surface of the red giant π Gruis in detail using the European Southern Observatory's Very Large Telescope. They found that the red giant's surface has just a few convective cells, or granules, that are each about 75 million miles (120 million kilometers) across. By comparison, the sun has about two million convective cells, each about 930 miles (1,500 km) across.