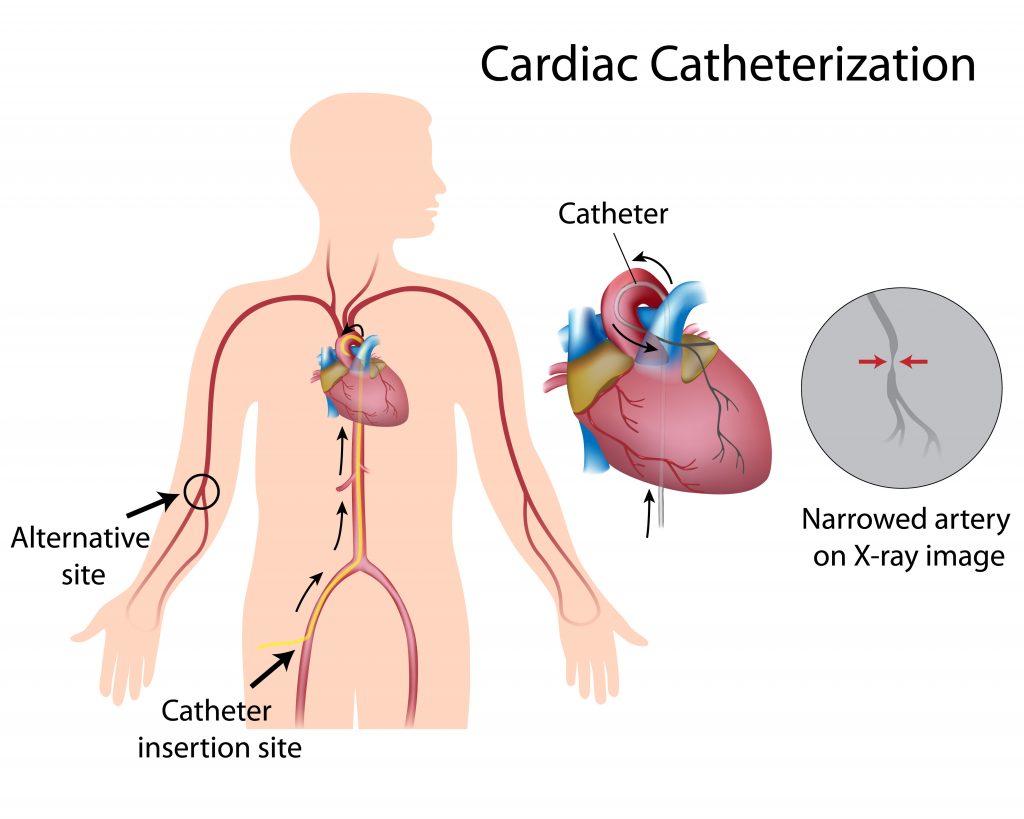

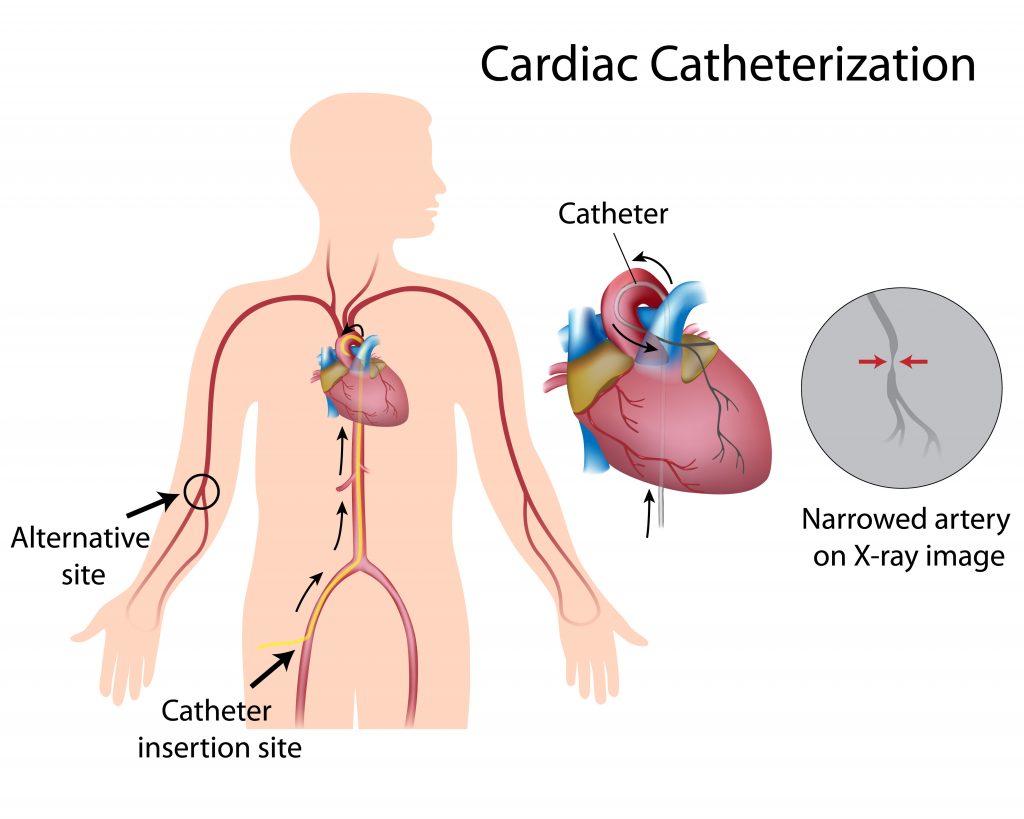

Gist

Cardiac catheterization is a minimally invasive procedure in which a thin, flexible tube (catheter) is inserted into a blood vessel in the groin, arm, or neck and guided to the heart to diagnose or treat conditions such as clogged arteries, valve problems, or arrhythmias. It allows doctors to measure pressures, take samples, perform angioplasty, and place stents.

Summary

Cardiac catheterization, also known as cardiac cath or heart catheterization, is a medical procedure used to diagnose and treat some heart conditions. It lets doctors take a close look at the heart to identify problems and to perform other tests or procedures.

Your healthcare provider may recommend cardiac catheterization to find out the cause of symptoms such as chest pain or irregular heartbeat. Before the procedure, you may need diagnostic tests, such as blood tests, heart imaging tests, or a stress test, to determine how well your heart is working and to help guide the procedure.

During cardiac catheterization, a long, thin, flexible tube called a catheter is put into a blood vessel in your arm, groin or upper thigh, or neck. The catheter is then threaded through the blood vessels to your heart. It may be used to examine your heart valves or take samples of blood or heart muscle. Your doctor may also use ultrasound, a test that uses sound waves to create an image, or they may inject a dye into your coronary arteries to see whether your arteries are narrowed or blocked. Cardiac catheterization may also be used instead of some heart surgeries to repair heart defects and replace heart valves.

Cardiac catheterization is safe for most people. Problems following the procedure are rare but can include bleeding and blood clots. Your healthcare provider will monitor your condition and may recommend medicines to prevent blood clots.

Details

Cardiac catheterization (heart cath) is the insertion of a catheter into a chamber or vessel of the heart. This is done for both diagnostic and interventional purposes.

A common example of cardiac catheterization is coronary catheterization, which involves catheterization of the coronary arteries for coronary artery disease and myocardial infarctions ("heart attacks"). Catheterization is most often performed in special laboratories with fluoroscopy and highly maneuverable tables. These "cath labs" are often equipped with cabinets of catheters, stents, balloons, etc. of various sizes to increase efficiency. Monitors show the fluoroscopy imaging, electrocardiogram (ECG), pressure waves, and more.

Procedure

"Cardiac catheterization" is a general term for a group of procedures. Access to the heart is obtained through a peripheral artery or vein. Commonly, this includes the radial artery, internal jugular vein, and femoral artery/vein. Each blood vessel has its advantages and disadvantages. Once access is obtained, plastic catheters (tiny hollow tubes) and flexible wires are used to navigate to and around the heart. Catheters come in numerous shapes, lengths, diameters, and numbers of lumens, and with other special features such as electrodes and balloons. Once in place, they are used to measure or intervene. Imaging is an important aspect of catheterization and commonly includes fluoroscopy but can also include forms of echocardiography (TTE, TEE, ICE) and ultrasound (IVUS).

TTE: Transthoracic echocardiogram

TEE: Transesophageal echocardiogram

ICE: Intracardiac echocardiogram

IVUS: Intravascular ultrasound

Access is obtained using the Seldinger technique: the vessel is punctured with a needle, a wire is placed through the needle into the lumen of the vessel, and the needle is then exchanged for a larger plastic sheath. Finding the vessel with a needle can be challenging, and both ultrasound and fluoroscopy can be used to aid in finding and confirming access. Sheaths typically have a side port that can be used to withdraw blood or inject fluids/medications, and they also have an end hole that permits introducing the catheters, wires, etc. coaxially into the blood vessel.

Once access is obtained, what is introduced into the vessel depends on the procedure being performed. Some catheters are formed to a particular shape and can really only be manipulated by inserting/withdrawing the catheter in the sheath and rotating the catheter. Others may include internal structures that permit internal manipulation (e.g., intracardiac echocardiography).

Finally, when the procedure is completed, the catheters are removed and the sheath is removed. With time, the hole made in the blood vessel will heal. Vascular closure devices can be used to speed along hemostasis.

Equipment

Much equipment is required for a facility to perform the numerous possible procedures for cardiac catheterization.

General:

* Catheters

* Film or Digital Camera

* Electrocardiography monitors

* External defibrillator

* Fluoroscopy

* Pressure transducers

* Sheaths

Percutaneous coronary intervention:

* Coronary stents: bare-metal stent (BMS) and drug-eluting stent (DES)

* Angioplasty balloons

* Atherectomy lasers and rotational devices

* Left atrial appendage occlusion devices

Electrophysiology:

* Ablation catheters: radiofrequency (RF) and cryo

* Pacemakers

* Defibrillators

Additional Information:

What is cardiac catheterization?

In cardiac catheterization (or cath), your healthcare provider puts a very small, flexible, hollow tube (catheter) into a blood vessel in the groin, arm, wrist, or in rare cases the neck. Then your provider threads it through the blood vessel into the aorta and into the heart. Once the catheter is in place, several tests may be done. Your provider can place the tip of the catheter into various parts of the heart to measure the pressures in the heart chambers. Or they can take blood samples to measure oxygen levels.

Your healthcare provider can guide the catheter into the coronary arteries and inject contrast dye to check blood flow through them. The coronary arteries are the vessels that carry blood to the heart muscle. This is called coronary angiography.

These are some of the other procedures that may be done during or after a cardiac cath:

* Angioplasty. In this procedure, your healthcare provider can inflate a tiny balloon at the tip of the catheter. This presses any plaque buildup against the artery wall and improves blood flow through the artery.

* Stent placement. In this procedure, your provider expands a tiny metal mesh coil or tube at the end of the catheter inside an artery to keep it open.

* Fractional flow reserve. This is a pressure measurement method that’s used in catheterization to see how much blockage is in an artery.

* Intravascular ultrasound (IVUS). This test uses a computer and a transducer to send out ultrasonic sound waves to make images of the blood vessels. By using IVUS, your healthcare provider can see and measure the inside of the blood vessels.

* Biopsy. Your provider may take out a small tissue sample and examine it under the microscope for abnormalities.

During the procedure, you will be awake. But a small amount of sedating medicine will be given before starting to help keep you comfortable.

Why might I need cardiac catheterization?

Your healthcare provider may use cardiac cath to help diagnose these heart conditions:

* Atherosclerosis. This is a gradual clogging of the arteries by fatty materials and other substances in the blood stream.

* Cardiomyopathy. This is an enlargement of the heart due to thickening or weakening of the heart muscle.

* Congenital heart disease. Defects in 1 or more heart structures that occur during fetal development, such as a ventricular septal defect (hole in the wall between the 2 lower chambers of the heart), are called congenital heart defects. They may lead to abnormal blood flow within the heart.

* Heart failure. This condition is when the heart muscle has become too weak to pump blood well. It causes fluid buildup (congestion) in the blood vessels and lungs, and edema (swelling) in the feet, ankles, and other parts of the body.

* Heart valve disease. This is when 1 or more of the heart valves isn't working right, affecting blood flow within the heart.

* Rejection after heart transplant. A biopsy is a common procedure after a heart transplant to monitor for rejection. Rejection is a process of your body's immune system attacking the donor heart. Medicines must be taken life-long following a transplant to prevent rejection.

You may have a cardiac cath if you have recently had 1 or more of these symptoms:

* Chest pain (angina)

* Shortness of breath

* Dizziness

* Extreme tiredness

If a screening exam, such as an electrocardiogram (ECG) or stress test, suggests there may be a heart condition that needs to be explored further, your healthcare provider may order a cardiac cath.

Another reason for a cath procedure is to evaluate blood flow to the heart muscle if chest pain occurs after the following:

* Heart attack

* Coronary artery bypass surgery

* Coronary angioplasty. This is opening a coronary artery using a balloon or other method.

* Placement of a stent. A stent is a tiny metal coil or tube placed inside an artery to keep the artery open.

There may be other reasons for your healthcare provider to recommend a cardiac cath.

What are the risks of cardiac catheterization?

Possible risks of cardiac cath include:

* Bleeding or bruising where the catheter is put into the body (the groin, arm, neck, or wrist)

* Pain where the catheter is put into the body

* Blood clot or damage to the blood vessel that the catheter is put into

* Infection where the catheter is put into the body

* Problems with heart rhythm (usually temporary)

More serious but rare complications include:

* Less blood flow to the heart tissue (ischemia), chest pain, or heart attack

* Sudden blockage of a coronary artery

* A tear in the lining of an artery

* Kidney damage from the dye used

* Bleeding from the heart itself

* Stroke

* Need for heart surgery

If you are pregnant or think you could be, tell your healthcare provider. There is a risk of injury to the unborn baby from a cardiac cath. Radiation exposure during pregnancy may lead to birth defects. Also be sure to tell your provider if you are lactating or breastfeeding.

There is a risk for allergic reaction to the dye used during the cardiac cath. If you are allergic to or sensitive to medicines, contrast dye, iodine, or latex, tell your healthcare provider. Also, tell them if you have kidney failure or other kidney problems.

For some people, having to lie still on the cardiac cath table for the length of the procedure may cause some discomfort or pain.

There may be other risks depending on your specific health problem. Be sure to talk about any concerns with your healthcare provider before the procedure.

How do I get ready for cardiac catheterization?

* Your healthcare provider will explain the procedure to you and give you a chance to ask any questions.

* You will be asked to sign a consent form that gives your permission to do the test. Read the form carefully and ask questions if anything is unclear.

* Tell your healthcare provider if you have ever had a reaction to any contrast dye, if you are allergic to iodine, or if you are sensitive or allergic to any medicines, latex, tape, or anesthetic agents (local and general).

* You will need to fast (not eat or drink) for a certain period before the procedure. Your provider will tell you how long to fast, usually overnight.

* If you are pregnant or think you could be, tell your provider.

* Tell your provider if you have any body piercings on your chest or belly (abdomen).

* Tell your provider about all the medicines (prescription and over-the-counter), vitamins, herbs, and supplements that you are taking.

* You may be asked to stop certain medicines before the procedure. Your provider will give you detailed instructions.

* Let your provider know if you have a history of bleeding disorders or if you are taking any anticoagulant (blood-thinning) medicines, aspirin, or other medicines that affect blood clotting. You may need to stop some of these medicines before the procedure.

* Let your provider know if you have any kidney problems. The contrast dye used during the cardiac cath can cause kidney damage in people who have poor kidney function. In some cases, blood tests may be done before and after the test to be sure that your kidneys are working correctly.

* Your provider may request a blood test before the procedure to see how long it takes your blood to clot. Other blood tests may be done as well.

* Tell your provider if you have heart valve disease.

* Tell your provider if you have a pacemaker or any other implanted cardiac devices.

* You may get a sedative before the procedure to help you relax. If a sedative is used, you will need someone to drive you home afterward.

Based on your medical condition, your healthcare provider may request other specific preparations.

What happens during a cardiac catheterization?

A cardiac cath can be done on an outpatient basis or as part of your stay in a hospital. Procedures may vary depending on your condition and your healthcare provider's practices.

Generally, a cardiac cath follows this process:

* You'll remove any jewelry or other objects that may interfere with the procedure. You may wear your dentures or hearing aids if you use either of these.

* Before the procedure, you should empty your bladder and then change into a hospital gown.

* A healthcare provider may shave the area where the catheter will be put in. The catheter is most often put in at the groin area. But other places used are the wrist, inside the elbow, or the neck.

* A healthcare provider will start an IV (intravenous) line in your hand or arm before the procedure to give you IV fluids and medicines, if needed.

* You will lie on your back on the procedure table.

* You will be connected to an ECG monitor that records the electrical activity of your heart and keeps track of your heart during the procedure using small electrodes that stick to your skin. Your vital signs (heart rate, blood pressure, breathing rate, and oxygen level) will be tracked during the procedure.

* Several monitor screens in the room will show your vital signs, the images of the catheter being moved through your body into your heart, and the structures of your heart as the dye is injected.

* You will get a sedative in your IV line before the procedure to help you relax. But you will likely be awake during the procedure.

* Your pulses below the catheter insertion site will be checked and marked so that the circulation to the limb can be checked after the procedure.

* Your healthcare provider will inject a local anesthetic (numbing medicine) into the skin where the catheter will be put in. You may feel some stinging at the site for a few seconds after the local anesthetic is injected.

* Once the local anesthetic has taken effect, your healthcare provider will insert a sheath, or introducer, into the blood vessel. This is a plastic tube through which the catheter is threaded into the blood vessel and advanced into the heart. If the arm is used, your provider may make a small incision (cut) to expose the blood vessel and put in the sheath.

* Your healthcare provider will advance the catheter through the aorta to the left side of the heart. They may ask you to hold your breath, cough, or move your head a bit to get clear views and advance the catheter. You may be able to watch this process on a computer screen.

* Once the catheter is in place, your provider will inject contrast dye to visualize the heart and the coronary arteries. You may feel some effects when the contrast dye is injected into the catheter. These effects may include a flushing sensation, a salty or metallic taste in the mouth, nausea, or a brief headache. These effects usually last for only a few moments.

* Tell the provider if you feel any breathing difficulties, sweating, numbness, nausea or vomiting, chills, itching, or heart palpitations.

* After the contrast dye is injected, a series of rapid X-ray images of the heart and coronary arteries will be made. You may be asked to take a deep breath and hold it for a few seconds during this time. It’s important to be very still as the X-rays are taken.

* Once the procedure is done, your provider will remove the catheter and close the insertion site. They may close it using either collagen to seal the opening in the artery, sutures, a clip to bind the artery together, or by holding pressure over the area to keep the blood vessel from bleeding. Your provider will decide which method is best for you.

* If a closure device is used, a sterile dressing will be put over the site. If manual pressure is used, your healthcare provider (or an assistant) will hold pressure on the site so that a clot will form. Once the bleeding has stopped, a very tight bandage will be placed on the site.

* The staff will help you slide from the table onto a stretcher so that you can be taken to the recovery area. Note: If the catheter was placed in your groin, you will not be allowed to bend your leg for several hours. If the insertion site was in your arm, your arm will be elevated on pillows and kept straight by placing it in an arm guard (a plastic arm board designed to immobilize the elbow joint). In addition, a tight plastic band may be put around your arm near the insertion site. The band will be loosened over time and removed before you go home.

What happens after cardiac catheterization?

* In the hospital:

After the cardiac cath, you may be taken to a recovery room or returned to your hospital room. You will stay flat in bed for several hours. A nurse will keep track of your vital signs, the insertion site, and circulation in the affected leg or arm.

Let your nurse know right away if you feel any chest pain or tightness, or any other pain, as well as any feelings of warmth, bleeding, or pain at the insertion site.

Bedrest may vary from 4 to 6 hours. If your healthcare provider placed a closure device, your bedrest may be shorter.

In some cases, the sheath or introducer may be left in the insertion site. If so, you will be on bedrest until your provider or another team member removes the sheath. After the sheath is removed, you may be given a light meal.

You may feel the urge to urinate often because of the effects of the contrast dye and increased fluids. You will need to use a bedpan or urinal while on bedrest, so you don't bend the affected leg or arm.

After the period of bedrest, you may get out of bed. The nurse will help you the first time you get up. They may check your blood pressure while you are lying in bed, sitting, and standing. You should move slowly when getting up from the bed to prevent any dizziness from the long period of bedrest.

You may be given medicine for pain or discomfort related to the insertion site or having to lie flat and still for a prolonged period.

Drink plenty of water and other fluids to help flush the contrast dye from your body.

You may go back to your usual diet after the procedure, unless your healthcare provider tells you otherwise.

After the recovery period, you may be discharged home unless your healthcare provider decides otherwise. In many cases, you may spend the night in the hospital for careful observation. If the cardiac cath was done on an outpatient basis and a sedative was used, you must have another person drive you home.

* At home:

Once at home, you should check the insertion site for bleeding, unusual pain, swelling, and abnormal discoloration or temperature change. A small bruise is normal. If you notice a constant or large amount of blood at the site that cannot be contained with a small dressing, contact your healthcare provider.

If your healthcare provider used a closure device at your insertion site, you will be given instructions on how to take care of the site. There may be a small knot, or lump, under the skin at the site. This is normal. The knot should go away over a few weeks.

It will be important to keep the insertion site clean and dry. Your healthcare provider will give you specific bathing instructions. In general, don't soak the access site in water (no bathtubs, hot tubs, or swimming) until the skin is healed at the site.

Your healthcare provider may advise you not to do any strenuous activities for a few days after the procedure. They'll tell you when it's OK to go back to work, drive, and resume normal activities.

Contact your healthcare provider if you have any of the following:

* Fever or chills

* Increased pain, redness, swelling, or bleeding or other drainage from the insertion site

* Coolness, numbness or tingling, or other changes in the affected arm or leg

* Chest pain or pressure, nausea or vomiting, profuse sweating, dizziness, or fainting

Your healthcare provider may give you other instructions after the procedure, depending on your situation.

Next steps

Before you agree to the test or procedure, make sure you know:

* The name of the test or procedure

* The reason you are having the test or procedure

* What results to expect and what they mean

* The risks and benefits of the test or procedure

* What the possible side effects or complications are

* When and where you are to have the test or procedure

* Who will do the test or procedure and what that person’s qualifications are

* What would happen if you did not have the test or procedure

* Any alternative tests or procedures to think about

* When and how you will get the results

* Who to call after the test or procedure if you have questions or problems

* How much you will have to pay for the test or procedure
